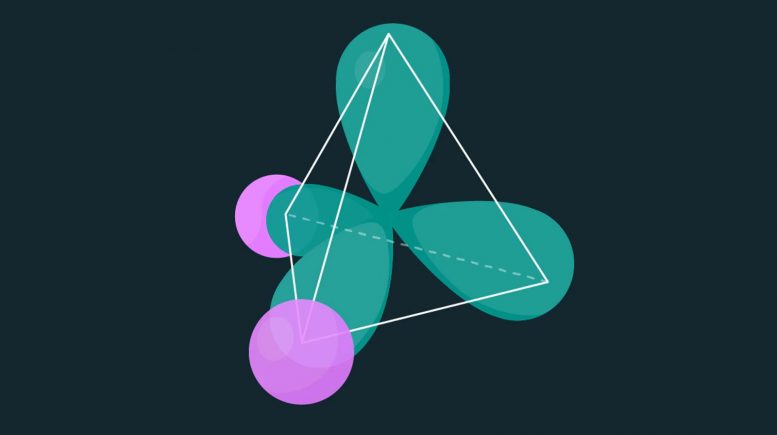

The tetrahedral electronic distribution of a water molecule. The oxygen atom nucleus is at the center of the tetrahedron, and the hydrogen nuclei are in the center of the pink spheres. Credit: Simons Foundation

Machine learning techniques accurately calculate the energy required to make — or break — simple molecules.

A new machine learning tool can calculate the energy required to make — or break — a molecule with higher accuracy than conventional methods. While the tool can currently only handle simple molecules, it paves the way for future insights in quantum chemistry.

“Using machine learning to solve the fundamental equations governing quantum chemistry has been an open problem for several years, and there’s a lot of excitement around it right now,” says co-creator Giuseppe Carleo, a research scientist at the Flatiron Institute’s Center for Computational Quantum Physics in New York City. A better understanding of the formation and destruction of molecules, he says, could reveal the inner workings of the chemical reactions vital to life.

Carleo and collaborators Kenny Choo of the University of Zurich and Antonio Mezzacapo of the IBM Thomas J. Watson Research Center in Yorktown Heights, New York, present their work today (May 12, 2020) in Nature Communications.

The team’s tool estimates the amount of energy needed to assemble or pull apart a molecule, such as water or ammonia. That calculation requires determining the molecule’s electronic structure, which consists of the collective behavior of the electrons that bind the molecule together.

A molecule’s electronic structure is a tricky thing to calculate, requiring the determination of all the potential states the molecule’s electrons could be in, plus each state’s probability.

Since electrons interact and become quantum mechanically entangled with one another, scientists can’t treat them individually. With more electrons, more entanglements crop up, and the problem gets exponentially harder. Exact solutions don’t exist for molecules more complex than the two electrons found in a pair of hydrogen atoms. Even approximations struggle with accuracy when they involve more than a few electrons.
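One rough way to see the blow-up is to count configurations. This Python sketch assumes a simple model in which each spatial orbital holds at most one spin-up and one spin-down electron. The orbital and electron counts are illustrative and are not taken from the study. The number of configurations grows combinatorially, which for larger systems means roughly exponentially.

```python
from math import comb

def count_configurations(n_spatial_orbitals: int, n_up: int, n_down: int) -> int:
    """Number of ways to place n_up spin-up and n_down spin-down electrons
    into n_spatial_orbitals orbitals, with at most one electron of each
    spin per orbital (the Pauli exclusion principle)."""
    return comb(n_spatial_orbitals, n_up) * comb(n_spatial_orbitals, n_down)

# Hypothetical examples: the orbital counts are illustrative choices,
# not the basis sets used in the study.
for n_orb, n_elec in [(7, 10), (20, 10), (50, 10), (100, 20)]:
    n = count_configurations(n_orb, n_elec // 2, n_elec // 2)
    print(f"{n_elec} electrons in {n_orb} orbitals: {n:,} configurations")
```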

One of the challenges is that a molecule’s electronic structure includes states for an infinite number of orbitals going farther and farther from the atoms. Additionally, one electron is indistinguishable from another, and two electrons can’t occupy the same state. The latter rule is a consequence of exchange symmetry, which governs what happens when identical particles switch states.
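Exchange symmetry can be illustrated with a Slater determinant, the textbook way of building a wavefunction for identical fermions. In this Python sketch the "orbitals" are hypothetical one-dimensional particle-in-a-box functions, each standing in for a spin-orbital, and they are chosen only for illustration. Swapping two electrons flips the sign of the wavefunction. Putting both electrons in the same orbital makes the wavefunction vanish.

```python
import numpy as np

def orbital(k: int, x: float) -> float:
    """Hypothetical 1D spin-orbital: sin((k+1)*pi*x) on [0, 1] (particle in a box)."""
    return float(np.sin((k + 1) * np.pi * x))

def slater(positions, occupied) -> float:
    """Antisymmetric many-electron wavefunction built as a Slater determinant:
    row i = electron i, column j = occupied orbital j."""
    matrix = np.array([[orbital(k, x) for k in occupied] for x in positions])
    return float(np.linalg.det(matrix))

psi = slater([0.2, 0.7], occupied=[0, 1])
psi_swapped = slater([0.7, 0.2], occupied=[0, 1])
print(psi, psi_swapped)                      # equal magnitude, opposite sign
print(slater([0.2, 0.7], occupied=[1, 1]))   # same orbital twice: zero (up to rounding)
```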

Mezzacapo and colleagues at IBM Quantum developed a method for constraining the number of orbitals considered and imposing exchange symmetry. This approach, based on methods developed for quantum computing applications, makes the problem more akin to scenarios where electrons are confined to preset locations, such as in a rigid lattice.
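In this lattice-like picture, each retained spin-orbital becomes a site that is either empty (0) or filled (1), much like a qubit. The Python sketch below assumes the Jordan-Wigner convention, one standard way of encoding fermions on qubits. The article does not say which encoding the team used, and the function names are hypothetical. Exchange antisymmetry survives as a sign that depends on how many occupied sites precede the one being acted on.

```python
from typing import Optional, Tuple

Occupation = Tuple[int, ...]  # 1 = spin-orbital occupied, 0 = empty

def fermionic_sign(occ: Occupation, j: int) -> int:
    """(-1) raised to the number of occupied spin-orbitals before site j.
    Jordan-Wigner-style encodings use this sign to keep track of
    exchange antisymmetry once electrons live on fixed 'sites'."""
    return -1 if sum(occ[:j]) % 2 else 1

def create(occ: Occupation, j: int) -> Optional[Tuple[int, Occupation]]:
    """Apply the creation operator a†_j. Returns (sign, new occupation),
    or None if site j is already filled (Pauli exclusion)."""
    if occ[j] == 1:
        return None
    return fermionic_sign(occ, j), occ[:j] + (1,) + occ[j + 1:]

def annihilate(occ: Occupation, j: int) -> Optional[Tuple[int, Occupation]]:
    """Apply the annihilation operator a_j; None if site j is empty."""
    if occ[j] == 0:
        return None
    return fermionic_sign(occ, j), occ[:j] + (0,) + occ[j + 1:]

state = (1, 1, 0, 0)          # two electrons in the first two spin-orbitals
print(create(state, 2))       # (1, (1, 1, 1, 0))
print(create(state, 0))       # None: already occupied
print(annihilate(state, 1))   # (-1, (1, 0, 0, 0))
```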

The similarity to rigid lattices was the key to making the problem more manageable. Carleo previously trained neural networks to reconstruct the behavior of electrons confined to the sites of a lattice. By extending those methods, the researchers could estimate solutions to Mezzacapo’s compacted problems. The team’s neural network calculates the probability of each state. Using this probability, the researchers can estimate the energy of a given state. The lowest energy level, dubbed the equilibrium energy, is where the molecule is the most stable.
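Strictly speaking, networks of this kind usually output an amplitude ψ(s) for each configuration s, and the probability is |ψ(s)|². The energy is then a probability-weighted average of a "local energy." This Python sketch assumes a restricted Boltzmann machine (RBM) form for the network, an architecture Carleo has used in earlier neural-network work on lattice models. It uses untrained random parameters and a random stand-in Hamiltonian, and all names are hypothetical. Because the system is tiny, every configuration is enumerated exactly.

```python
import itertools
import numpy as np

rng = np.random.default_rng(0)
n_sites, n_hidden = 4, 8

# Hypothetical Hamiltonian: a random real symmetric 16 x 16 matrix over all
# 2**n_sites occupation configurations (a stand-in for a molecular one).
A = rng.normal(size=(2**n_sites, 2**n_sites))
H = (A + A.T) / 2

# RBM-style amplitude psi(s) = exp(a.s) * prod_j 2 cosh(b_j + W_j.s),
# with small random (untrained) real parameters.
a = 0.1 * rng.normal(size=n_sites)
b = 0.1 * rng.normal(size=n_hidden)
W = 0.1 * rng.normal(size=(n_hidden, n_sites))

def amplitude(s: np.ndarray) -> float:
    return float(np.exp(a @ s) * np.prod(2 * np.cosh(b + W @ s)))

# Same ordering for configurations and for the rows/columns of H.
configs = np.array(list(itertools.product([0, 1], repeat=n_sites)))
psi = np.array([amplitude(s) for s in configs])

# Probability of each configuration: |psi(s)|^2, normalized.
prob = psi**2 / np.sum(psi**2)

# Local energy E_loc(s) = sum_s' H[s, s'] psi(s') / psi(s); its average is the energy.
local_energy = (H @ psi) / psi
energy = float(np.sum(prob * local_energy))

ground = float(np.linalg.eigvalsh(H)[0])
print(f"variational energy {energy:.4f} >= exact ground state {ground:.4f}")
```

In practice, the parameters would be optimized to lower this energy, and Monte Carlo sampling of configurations would replace the exact sums. The sketch skips both steps. By the variational principle, the estimate can never fall below the true lowest energy.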

The team’s innovations made calculating a basic molecule’s electronic structure simpler and faster. The researchers demonstrated the accuracy of their methods by estimating how much energy it would take to pull a real-world molecule apart, breaking its bonds. They ran calculations for dihydrogen (H2), lithium hydride (LiH), ammonia (NH3), water (H2O), diatomic carbon (C2) and dinitrogen (N2). For all the molecules, the team’s estimates proved highly accurate, even at stretched bond lengths where existing methods struggle.
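The bond-breaking energy can be read off a potential energy curve. First compute the ground-state energy at many bond lengths, then compare the minimum (the equilibrium geometry) with the energy of the well-separated atoms. In this Python sketch, an analytic Morse potential with made-up parameters stands in for the neural-network energies. It is a toy and does not reproduce the study's data or method.

```python
import numpy as np

# Hypothetical Morse-potential parameters (illustrative, not from the study):
D_E = 4.7     # well depth, eV
ALPHA = 1.9   # width parameter, 1/angstrom
R_E = 0.74    # equilibrium bond length, angstrom

def energy(r: np.ndarray) -> np.ndarray:
    """Toy stand-in for a computed ground-state energy vs. bond length,
    measured relative to the separated atoms (E -> 0 as r -> infinity)."""
    return D_E * ((1 - np.exp(-ALPHA * (r - R_E)))**2 - 1)

bond_lengths = np.linspace(0.4, 6.0, 561)   # scan in 0.01 angstrom steps
curve = energy(bond_lengths)

i_min = int(np.argmin(curve))
r_eq, e_eq = bond_lengths[i_min], curve[i_min]
e_apart = curve[-1]                          # largest separation scanned

print(f"equilibrium bond length ~ {r_eq:.2f} angstrom")
print(f"energy to break the bond ~ {e_apart - e_eq:.2f} eV")
```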

In the future, the researchers aim to tackle larger and more complex molecules by using more sophisticated neural networks. One goal is to handle chemicals like those found in the nitrogen cycle, in which biological processes build and break nitrogen-based molecules to make them usable for life. “We want this to be a tool that could be used by chemists to process these problems,” Carleo says.

Carleo, Choo, and Mezzacapo aren’t alone in tapping machine learning to tackle problems in quantum chemistry. The researchers first presented their work on arXiv.org in September 2019. In that same month, a group in Germany and another at Google’s DeepMind in London each released research using machine learning to reconstruct the electronic structure of molecules.

The other two groups take broadly similar approaches, neither of which limits the number of orbitals considered. This inclusiveness, however, is more computationally taxing, a drawback that will only worsen with more complex molecules. With the same computational resources, the approach by Carleo, Choo, and Mezzacapo yields higher accuracy, but the simplifications made to obtain this accuracy could introduce biases.

“Overall, it’s a trade-off between bias and accuracy, and it’s unclear which of the two approaches has more potential for the future,” Carleo says. “Only time will tell us which of these approaches can be scaled up to the challenging open problems in chemistry.”

Reference: “Fermionic neural-network states for ab-initio electronic structure” by Kenny Choo, Antonio Mezzacapo and Giuseppe Carleo, 12 May 2020, Nature Communications.

DOI: 10.1038/s41467-020-15724-9
