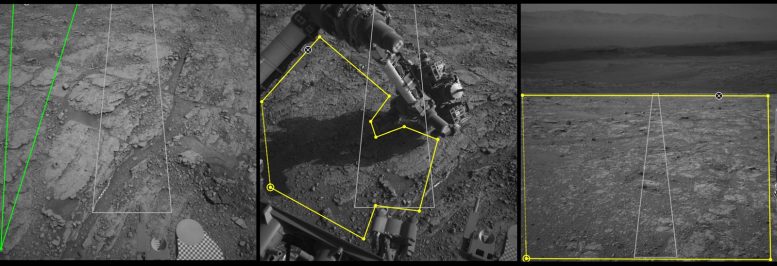

Three images from the tool called AI4Mars show different kinds of Martian terrain as seen by NASA’s Curiosity rover. By drawing borders around terrain features and assigning one of four labels to them, you can help train an algorithm that will automatically identify terrain types for Curiosity’s rover planners. Credit: NASA/JPL-Caltech

Using an online tool to label Martian terrain types, you can train an artificial intelligence algorithm that could improve the way engineers guide the Curiosity rover.

You may be able to help NASA’s Curiosity rover drivers better navigate Mars. Using the online tool AI4Mars to label terrain features in pictures downloaded from the Red Planet, you can train an artificial intelligence algorithm to automatically read the landscape.

Is that a big rock to the left? Could it be sand? Or maybe it’s nice, flat bedrock. AI4Mars, which is hosted on the citizen science website Zooniverse, lets you draw boundaries around terrain and choose one of four labels. Those labels are key to sharpening the Martian terrain-classification algorithm called SPOC (Soil Property and Object Classification).

Developed at NASA’s Jet Propulsion Laboratory, which has managed all of the agency’s Mars rover missions, SPOC labels various terrain types, creating a visual map that helps mission team members determine which paths to take. SPOC is already in use, but the system could use further training.

“Typically, hundreds of thousands of examples are needed to train a deep learning algorithm,” said Hiro Ono, an AI researcher at JPL. “Algorithms for self-driving cars, for example, are trained with numerous images of roads, signs, traffic lights, pedestrians, and other vehicles. Other public datasets for deep learning contain people, animals, and buildings — but no Martian landscapes.”

Once fully up to speed, SPOC will be able to automatically distinguish between cohesive soil, high rocks, flat bedrock, and dangerous sand dunes, sending images to Earth that will make it easier to plan Curiosity’s next moves.
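To make the labeling step concrete, here is a minimal Python sketch of how a boundary drawn around a patch of terrain could be turned into a per-pixel label mask of the kind a terrain classifier is trained on. It is an illustration only, not the AI4Mars or SPOC code: the `Terrain` class names, the `point_in_polygon` and `rasterize` helpers, and the rule that later annotations overwrite earlier ones are all assumptions made for this example.

```python
from enum import IntEnum


class Terrain(IntEnum):
    """Hypothetical class IDs for the four terrain types named in the article."""
    UNLABELED = 0
    SOIL = 1
    BEDROCK = 2
    SAND = 3
    BIG_ROCK = 4


def point_in_polygon(x, y, polygon):
    """Ray-casting test: count how many polygon edges a ray going right from (x, y) crosses."""
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Only edges that straddle the horizontal line through y can be crossed.
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def rasterize(width, height, annotations):
    """Build a height x width mask from (label, polygon) pairs.

    Each pixel is tested at its center. Later annotations overwrite earlier
    ones where they overlap (an assumption of this sketch).
    """
    mask = [[Terrain.UNLABELED] * width for _ in range(height)]
    for label, polygon in annotations:
        for row in range(height):
            for col in range(width):
                if point_in_polygon(col + 0.5, row + 0.5, polygon):
                    mask[row][col] = label
    return mask


if __name__ == "__main__":
    # One volunteer-drawn square labeled as sand on a tiny 4 x 3 image.
    sand_patch = (Terrain.SAND, [(0, 0), (2, 0), (2, 2), (0, 2)])
    for mask_row in rasterize(4, 3, [sand_patch]):
        print([cell.name for cell in mask_row])
```

A real pipeline would work on full-resolution rover images and would need some way to reconcile labels from different volunteers; this sketch only shows the basic step from a drawn outline to labeled pixels.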

“In the future, we hope this algorithm can become accurate enough to do other useful tasks, like predicting how likely a rover’s wheels are to slip on different surfaces,” Ono said.

The Job of Rover Planners

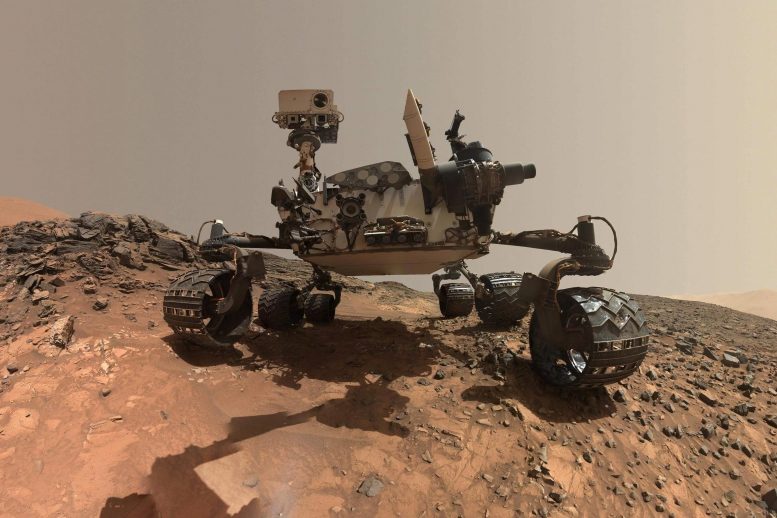

JPL engineers called rover planners may benefit the most from a better-trained SPOC. They are responsible for Curiosity’s every move, whether it’s taking a selfie, trickling pulverized samples into the rover’s body to be analyzed, or driving from one spot to the next.

NASA’s Curiosity rover analyzed its first solid sample of Mars with a variety of instruments, including the Sample Analysis at Mars (SAM) instrument suite. Developed at NASA’s Goddard Space Flight Center in Greenbelt, Md., SAM is a portable chemistry lab tucked inside the Curiosity rover. SAM examines the chemistry of samples it ingests, checking particularly for chemistry relevant to whether an environment can support or could have supported life.

It can take four to five hours to work out a drive (which is now done virtually), requiring multiple people to write and review hundreds of lines of code. The task involves extensive collaboration with scientists as well: Geologists assess the terrain to predict whether Curiosity’s wheels could slip, be damaged by sharp rocks, or get stuck in the sand, which trapped both the Spirit and Opportunity rovers.

Planners also consider which way the rover will be pointed at the end of a drive, since its high-gain antenna needs a clear line of sight to Earth to receive commands. And they try to anticipate shadows falling across the terrain during a drive, which can interfere with how Curiosity determines how far it has traveled. (The rover uses a technique called visual odometry, comparing successive camera images of nearby landmarks.)

How AI Could Help

SPOC won’t replace the complicated, time-intensive work of rover planners. But it can free them to focus on other aspects of their job, like discussing with scientists which rocks to study next.

“It’s our job to figure out how to safely get the mission’s science,” said Stephanie Oij, one of the JPL rover planners involved in AI4Mars. “Automatically generating terrain labels would save us time and help us be more productive.”

The benefits of a smarter algorithm would extend to planners on NASA’s next Mars mission, the Perseverance rover, which launches this summer. But first, an archive of labeled images is needed. More than 8,000 Curiosity images have been uploaded to the AI4Mars site so far, providing plenty of fodder for the algorithm. Ono hopes to add images from Spirit and Opportunity in the future. In the meantime, JPL volunteers are translating the site so that participants who speak Spanish, Hindi, Japanese, and several other languages can contribute as well.
