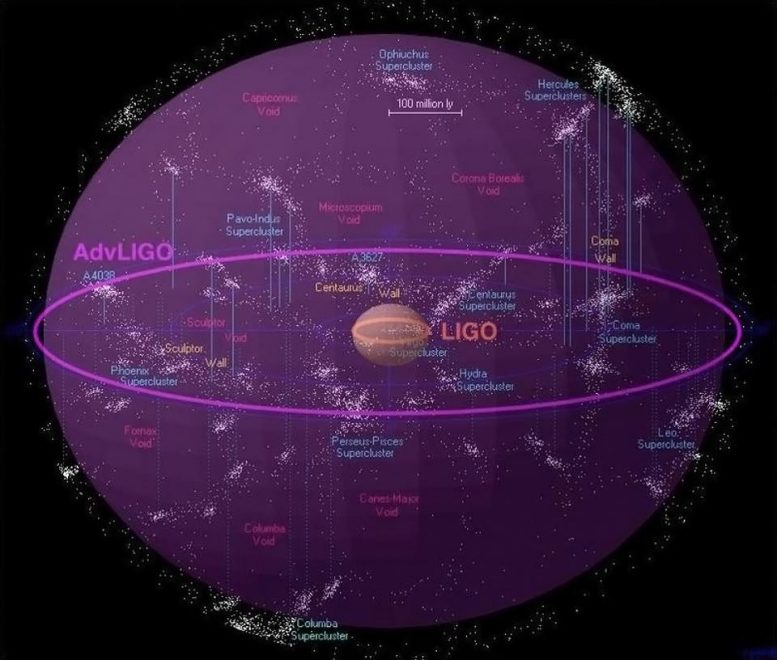

Illustrated here is the range of Advanced LIGO and its capability of detecting merging black holes. Credit: LIGO Collaboration / Amber Stuver / Richard Powell / Atlas of the Universe

The Advanced Laser Interferometer Gravitational-Wave Observatory (Advanced LIGO) detectors and the Virgo detector have directly observed transient gravitational waves (GWs) from compact binary coalescences. After a series of instrument upgrades to further improve sensitivity (such as replacing test masses and optics, increasing laser power, and adding squeezed light), the two LIGO detectors started the third observing run (O3), together with Virgo, on April 1, 2019 and finished the year-long observation on March 27, 2020.

The time series of dimensionless strain, defined as the differential change in the length of the two orthogonal arms divided by the average full arm length (~4 km in the two LIGO detectors), is used to determine whether a GW signal has been detected and to infer the properties of the astrophysical source. (The figure shows a conceptual diagram of the optical configuration of the Advanced LIGO interferometers.)
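As a minimal sketch of the strain definition above, the following Python snippet divides the differential arm length change by the arm length. The function name `strain` and the displacement values are hypothetical and chosen only for illustration; the 4 km arm length is the approximate figure quoted in the text.

```python
ARM_LENGTH_M = 4000.0  # approximate LIGO arm length quoted in the text


def strain(delta_lx_m, delta_ly_m, arm_length_m=ARM_LENGTH_M):
    """Dimensionless strain: differential arm length change over arm length."""
    return (delta_lx_m - delta_ly_m) / arm_length_m


# Illustrative values only: one arm lengthens by 2e-19 m while the other
# shortens by the same amount, giving a differential change of 4e-19 m.
h = strain(2e-19, -2e-19)
print(f"strain = {h:.3e}")
```

The point of the example is scale: a strain of order 1e-22 corresponds to arm length changes far smaller than an atomic nucleus, which is why the reconstruction described next has to be modeled so carefully.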

Due to the presence of noise and the desire to maintain the resonance condition of the optical cavities, the detectors do not directly measure the strain. The differential arm displacement is suppressed by the control force allocated to the test masses. The residual differential arm displacement in the control loop is converted into digitized photodetector output signals. Therefore, the strain is reconstructed from the raw digitized electrical output of each detector, with an accurate and precise model of the detector response to the strain. This reconstruction process is referred to as detector ‘calibration.’ The accuracy and precision of the estimated detector response, and hence the reconstructed strain data, are important for detecting GW signals and crucial for estimating their astrophysical parameters.
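The reconstruction described above can be sketched in the frequency domain. The sketch assumes the standard single-loop picture of the differential arm length control: a sensing function `C` converts residual displacement into the digitized error signal, a digital filter `D` and an actuation function `A` feed a control displacement back to the test masses, and the response function is `R = (1 + A*D*C) / C`. All numerical values and the toy transfer functions below are invented for illustration and are not measured LIGO parameters.

```python
import numpy as np

ARM_LENGTH_M = 4000.0  # approximate LIGO arm length quoted in the text


def response_function(sensing, actuation, digital_filter):
    """Response R = (1 + A*D*C) / C, evaluated per frequency bin."""
    open_loop_gain = actuation * digital_filter * sensing
    return (1.0 + open_loop_gain) / sensing


def reconstruct_strain(error_signal, sensing, actuation, digital_filter):
    """Estimate strain from the digitized error signal using the modeled response."""
    r = response_function(sensing, actuation, digital_filter)
    return r * error_signal / ARM_LENGTH_M


# Toy models (assumptions, not real detector parameters).
freqs = np.array([20.0, 100.0, 500.0, 2000.0])    # Hz
sensing = 3.0e6 / (1.0 + 1j * freqs / 400.0)      # counts per metre, single pole
actuation = 1.0e-6 / freqs**2 + 0j                # metres per count, falls as 1/f^2
digital_filter = np.full(freqs.shape, 50.0 + 0j)  # flat control filter

# Forward simulation: the control loop suppresses the free displacement,
# and only the residual is sensed and digitized.
true_strain = np.full(freqs.shape, 1.0e-21 + 0j)
free_displacement = true_strain * ARM_LENGTH_M
residual = free_displacement / (1.0 + actuation * digital_filter * sensing)
error_signal = sensing * residual

# Calibration step: undo the loop suppression and the sensing response.
recovered_strain = reconstruct_strain(error_signal, sensing, actuation, digital_filter)
print(np.allclose(recovered_strain, true_strain, rtol=1e-12, atol=0.0))
```

When the modeled `C`, `A`, and `D` match the true ones exactly, the recovered strain equals the injected strain. Any mismatch between model and reality carries straight through into the reconstructed strain, which is the motivation for quantifying the systematic error.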

Understanding, accounting for, and/or compensating for the complex-valued response of each part of the upgraded detectors improves the overall accuracy of the estimated detector response to GWs. Calibration systematic error is the frequency-dependent and time-dependent deviation of the estimated detector response from the true detector response. We describe our improved understanding and the methods used to quantify the response of each detector, with a dedicated effort to identify every place where systematic error plays a role. We use the two LIGO detectors as they stood in the first half (six months) of O3 to demonstrate how each identified systematic error impacts the reconstructed strain, and to constrain the statistical uncertainty therein. We report the accuracy and precision of the strain data by estimating the 68% confidence interval bounds on the systematic error and uncertainty for the response of each detector (in magnitude and phase).
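One way to picture the reported quantities is as a complex ratio between the true and modeled response, with 68% bounds taken from the 16th and 84th percentiles of many sampled realizations at each frequency. The sketch below assumes that convention; the function names, the synthetic samples, and their scatter are all hypothetical and do not reproduce the actual analysis or its results.

```python
import numpy as np


def systematic_error(true_response, model_response):
    """Complex ratio of true to modeled response; 1 means a perfect model."""
    return np.asarray(true_response) / np.asarray(model_response)


def bounds_68(eta_samples):
    """16th, 50th, and 84th percentiles of magnitude and phase per frequency."""
    magnitude = np.abs(eta_samples)
    phase_deg = np.degrees(np.angle(eta_samples))
    mag_lo, mag_med, mag_hi = np.percentile(magnitude, [16, 50, 84], axis=0)
    ph_lo, ph_med, ph_hi = np.percentile(phase_deg, [16, 50, 84], axis=0)
    return {
        "magnitude": (mag_lo, mag_med, mag_hi),
        "phase_deg": (ph_lo, ph_med, ph_hi),
    }


# Synthetic samples (assumption): a 1% magnitude offset with 1% scatter and
# a 0.3 degree phase offset with 0.5 degree scatter, at four frequencies.
rng = np.random.default_rng(0)
n_samples, n_freqs = 5000, 4
model_response = np.full(n_freqs, 2.0 + 1.0j)
mag = rng.normal(1.01, 0.01, size=(n_samples, n_freqs))
phase_rad = np.radians(rng.normal(0.3, 0.5, size=(n_samples, n_freqs)))
true_response_samples = model_response * mag * np.exp(1j * phase_rad)

eta_samples = systematic_error(true_response_samples, model_response)
bounds = bounds_68(eta_samples)
print(bounds["magnitude"][1])  # median magnitude ratio per frequency
print(bounds["phase_deg"][1])  # median phase offset per frequency, in degrees
```

In this picture the median ratio plays the role of the systematic error, while the width between the 16th and 84th percentiles reflects the uncertainty on it.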

In the first half of O3, the overall systematic error and uncertainty of the best calibrated data is within 7% in magnitude and 4 degrees in phase in the most sensitive frequency band, 20–2000 Hz. The systematic error alone, in the same band, is estimated to be below 2% in magnitude and 2 degrees in phase. Current detection of GW events and estimation of their astrophysical parameters are not yet limited by such levels of systematic error and uncertainty. However, as the sensitivity of the global GW detector network increases, detector calibration systematic error and uncertainty will play an increasingly important role. Calibration systematics could come to limit estimates of GW source parameters, precision astrophysics, population studies, cosmology, and tests of general relativity. Work is in progress to better integrate the results presented in this paper into future GW data analyses. Research and development of new techniques are underway to further reduce calibration systematic error and uncertainty below the 1% level, a key milestone towards minimizing the impact of calibration systematics on astrophysical and cosmological results.

Written by Lilli Sun, OzGrav Associate Investigator, ANU
