Study provides new insights into how our visual memories are stored.

A team of scientists has discovered how working memory is “formatted” — a finding that enhances our understanding of how visual memories are stored.

“For decades researchers have wondered about the nature of the neural representations that support our working memory,” explains Clayton Curtis, professor of psychology and neural science at New York University and the senior author of the paper, which appears in the journal Neuron. “In this study, we used both experimental and analytical techniques to reveal the format of working memory representations in the brain.”

The ability to store information for brief periods of time, or “working memory,” is a building block for most of our higher cognitive processes, and its dysfunction is at the heart of a variety of psychiatric and neurologic conditions, including schizophrenia.

Despite its importance, we still know very little about how the brain stores working memory representations.

“Although we can predict the contents of your working memory from the patterns of brain activity, what exactly these patterns are coding for has remained impenetrable,” Curtis states.

Curtis and co-author Yuna Kwak, an NYU doctoral student, hypothesized that our brains not only discard task-irrelevant features but also re-code task-relevant features into memory formats that are both efficient and distinct from the perceptual inputs themselves.

It’s been known for decades that we re-code visual information about letters and numbers into phonological or sound-based codes used for verbal working memory. For instance, when you see a string of digits of a phone number, you don’t store that visual information until you finish dialing the number. Rather you store the sounds of the numbers (e.g., what the phone number “867-5309” sounds like as you say it in your head). However, this only indicates that we do re-code—it doesn’t address how the brain formats working memory representations, which was the focus of the new Neuron study.

To explore this, the experimenters measured brain activity with functional magnetic resonance imaging (fMRI) while participants performed visual working memory tasks. On each trial, the participants had to remember, for a few seconds, a briefly presented visual stimulus and then make a memory-based judgment. On some trials, the visual stimulus was a tilted grating, and on others it was a cloud of moving dots. After the memory delay, participants had to indicate the exact angle of the grating’s tilt or of the dot cloud’s motion.

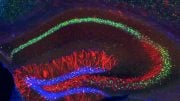

Despite the different types of visual stimulation (grating vs. dot motion), they found that the patterns of neural activity in visual cortex and parietal cortex—a part of the brain used in memory processing and storage—were interchangeable during memory. In other words, the pattern trained to predict motion direction could also predict grating orientation—and vice versa.
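The logic of this "train on one stimulus, test on the other" analysis can be illustrated with a toy simulation. The sketch below is not the authors' analysis pipeline: the voxel tuning model, the three angles, the noise level, and the correlation-based classifier are all assumptions chosen for illustration. Both stimulus types are simulated as sharing one angle-tuned code, with only an overall baseline differing between them.

```python
import math
import random

ANGLES = [15, 75, 135]  # hypothetical remembered angles, in degrees


def simulate_trial(angle, preferred, baseline, rng, noise=0.3):
    # Each toy voxel is tuned to an angle (180-degree periodic).
    # The baseline stands in for a stimulus-specific overall response level.
    return [math.cos(2 * math.radians(angle - p)) + baseline + rng.gauss(0, noise)
            for p in preferred]


def correlation(a, b):
    # Pearson correlation between two equally long patterns.
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


def train_centroids(trials):
    # trials: list of (angle, pattern); returns the mean pattern per angle.
    centroids = {}
    for angle in ANGLES:
        patterns = [p for a, p in trials if a == angle]
        centroids[angle] = [sum(col) / len(col) for col in zip(*patterns)]
    return centroids


def decode(pattern, centroids):
    # Pick the angle whose mean training pattern correlates best with this trial.
    return max(centroids, key=lambda a: correlation(pattern, centroids[a]))


def cross_decoding_accuracy(n_voxels=60, n_trials=20, seed=0):
    rng = random.Random(seed)
    preferred = [rng.uniform(0, 180) for _ in range(n_voxels)]
    motion = [(a, simulate_trial(a, preferred, 0.0, rng))
              for a in ANGLES for _ in range(n_trials)]
    grating = [(a, simulate_trial(a, preferred, 1.0, rng))
               for a in ANGLES for _ in range(n_trials)]
    centroids = train_centroids(motion)          # train on motion trials only
    hits = sum(decode(p, centroids) == a for a, p in grating)  # test on gratings
    return hits / len(grating)


accuracy = cross_decoding_accuracy()
print(f"train on motion, test on grating: {accuracy:.2f} "
      f"(chance = {1 / len(ANGLES):.2f})")
```

In this toy setup, decoding transfers across stimulus types only because the simulated patterns share one angle code. If the two stimulus types were given unrelated tuning, accuracy would be expected to fall toward chance. A correlation-based classifier is used here so that the overall baseline difference between stimulus types does not affect the result.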

This finding prompted the question—why were those memory representations interchangeable?

“We reasoned that only the task-relevant features of the tested stimuli were extracted and re-coded into a shared memory format, perhaps taking the form of an abstract line-like shape angled to match either the orientation of the grating or the direction of dot motion,” explains Curtis.

To test the hypothesis that participants’ memories were re-coded into a line-like pattern, akin to imagining a line at a certain angle, the researchers turned to a novel way to visualize the patterns of brain activity.

Using models of each cortical population’s receptive field, the researchers projected the memory patterns encoded in cortical activity onto a two-dimensional representation of visual space. This recast the activity in the coordinates of the monitor that the participants viewed, allowing the scientists to visualize the subjects’ cortical activity in screen coordinates and revealing a line-like representation for both motion and grating stimuli.
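A rough sense of how such a projection works can be given with a small simulation. The sketch below is a simplified illustration, not the method used in the paper: it assumes each voxel has a circular Gaussian receptive field with a known center and size, builds the screen map as an activity-weighted sum of those Gaussians, and reads out the map's dominant orientation from its second moments. All function names, the grid layout, and the parameter values are hypothetical.

```python
import math


def disc_grid(radius, step):
    # Regularly spaced (x, y) locations inside a circular field of view.
    n = int(radius / step)
    return [(i * step, j * step)
            for i in range(-n, n + 1) for j in range(-n, n + 1)
            if (i * step) ** 2 + (j * step) ** 2 <= radius ** 2]


def line_activity(x0, y0, angle_deg, width=0.8):
    # Toy memory signal: voxels whose receptive field lies near a line
    # through the screen center at the given angle respond more strongly.
    theta = math.radians(angle_deg)
    dist = abs(x0 * math.sin(theta) - y0 * math.cos(theta))
    return math.exp(-dist ** 2 / (2 * width ** 2))


def project_to_screen(voxels, pixels):
    # voxels: list of (x0, y0, sigma, activity). Each voxel contributes its
    # Gaussian receptive field, scaled by its activity, to every pixel.
    image = []
    for px, py in pixels:
        value = 0.0
        for x0, y0, sigma, activity in voxels:
            d2 = (px - x0) ** 2 + (py - y0) ** 2
            value += activity * math.exp(-d2 / (2 * sigma ** 2))
        image.append(value)
    return image


def dominant_orientation(pixels, image):
    # Principal-axis orientation (degrees in [0, 180)) from weighted second moments.
    weights = [max(v, 0.0) for v in image]
    total = sum(weights)
    cx = sum(w * x for w, (x, _) in zip(weights, pixels)) / total
    cy = sum(w * y for w, (_, y) in zip(weights, pixels)) / total
    mxx = sum(w * (x - cx) ** 2 for w, (x, _) in zip(weights, pixels))
    myy = sum(w * (y - cy) ** 2 for w, (_, y) in zip(weights, pixels))
    mxy = sum(w * (x - cx) * (y - cy) for w, (x, y) in zip(weights, pixels))
    return math.degrees(0.5 * math.atan2(2 * mxy, mxx - myy)) % 180


def recover_angle(true_angle, radius=5.0, step=0.5, sigma=1.0):
    centers = disc_grid(radius, step)  # receptive-field centers (assumed layout)
    voxels = [(x, y, sigma, line_activity(x, y, true_angle)) for x, y in centers]
    image = project_to_screen(voxels, centers)  # evaluate map at the same locations
    return dominant_orientation(centers, image)


recovered = recover_angle(60.0)
print(f"simulated angle: 60.0, angle read from the screen map: {recovered:.1f}")
```

Real data would call for additional steps that this sketch leaves out, such as fitting the receptive-field models, removing baseline activity, and correcting for uneven receptive-field coverage of the screen.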

“We could see lines of activity across the topographic maps at angles corresponding to the motion direction and grating,” explains Curtis.

This novel visualization technique offered an opportunity to actually “see” how working memory representations were encoded in a neural population.

Specifically, a single line (like a pointer or arrow) represented both the direction of motion (e.g., up and to the left) and the orientation of a tilted grating (e.g., up and to the left). The task required subjects to remember not all the moving dots but only a summary of the dots’ motion direction. Likewise, it required memory for the angle of the grating, not its other visual details, such as spatial frequency and contrast. The method was thus able to reveal how we selectively store relevant information while discarding irrelevant content.

“Our visual memory is flexible and can be abstractions of what we see driven by the behaviors they guide,” Curtis concludes.

Reference: “Unveiling the abstract format of mnemonic representations” by Yuna Kwak and Clayton E. Curtis, 7 July 2022, Neuron.

DOI: 10.1016/j.neuron.2022.03.016

The research was supported by National Institutes of Health grants from the National Eye Institute (NEI) (R01 EY-016407, R01 EY-027925).
