AI-generated X-rays are now so realistic they could fool doctors—and potentially disrupt the entire healthcare system.

A new study published today (March 24) in Radiology, the journal of the Radiological Society of North America (RSNA), finds that both radiologists and advanced multimodal large language models (LLMs) struggle to reliably distinguish real X-rays from artificial intelligence (AI)-generated “deepfake” versions. The results point to growing risks tied to synthetic medical images and highlight the urgent need for better detection tools and specialized training to protect the accuracy of medical records.

A “deepfake” is any image, video, or audio that appears authentic but has been created or altered using AI.

“Our study demonstrates that these deepfake X-rays are realistic enough to deceive radiologists, the most highly trained medical image specialists, even when they were aware that AI-generated images were present,” said lead study author Mickael Tordjman, M.D., post-doctoral fellow, Icahn School of Medicine at Mount Sinai, New York.

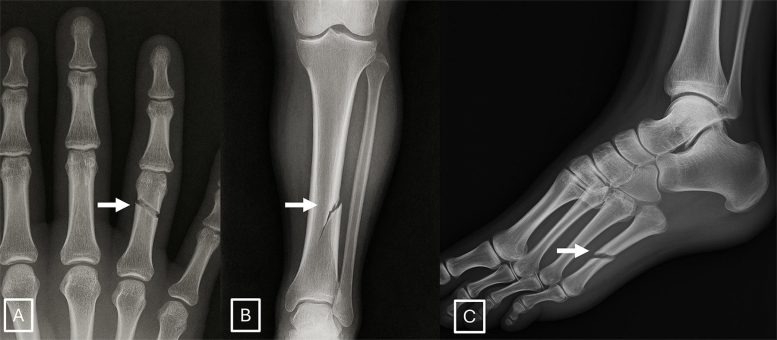

“This creates a high-stakes vulnerability for fraudulent litigation if, for example, a fabricated fracture could be indistinguishable from a real one. There is also a significant cybersecurity risk if hackers were to gain access to a hospital’s network and inject synthetic images to manipulate patient diagnoses or cause widespread clinical chaos by undermining the fundamental reliability of the digital medical record.”

Study Design and Global Participation

The study involved 17 radiologists from 12 medical centers across six countries (United States, France, Germany, Turkey, United Kingdom, and United Arab Emirates). Their experience levels ranged from newcomers to experts with up to 40 years in the field. Researchers analyzed a total of 264 X-ray images, split evenly between real and AI-generated scans.

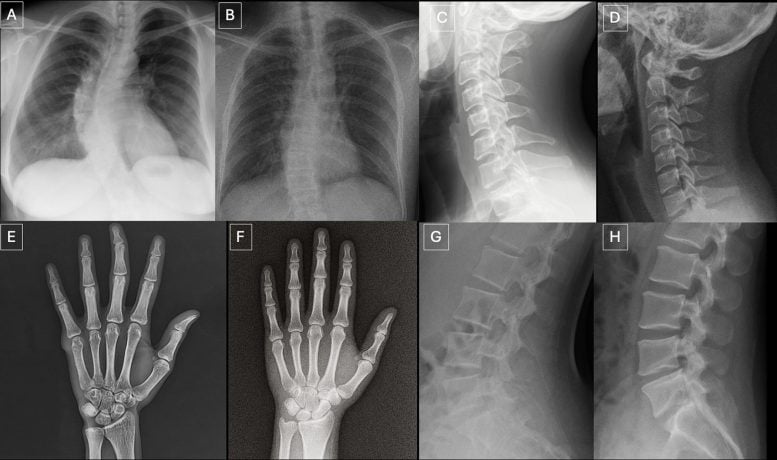

Participants reviewed two separate sets of images. One included a mix of real X-rays and images generated by ChatGPT across different parts of the body. The second focused on chest X-rays, again evenly divided between real images and those created by RoentGen, an open-source generative AI diffusion model developed by Stanford Medicine researchers.

Detection Accuracy Remains Limited

When radiologists were not told that fake images were included, only 41% identified AI-generated X-rays on their own after reviewing image quality. Once they were informed that synthetic images were present, their average accuracy rose to 75% when distinguishing real from fake scans.

Performance varied widely. Some radiologists correctly identified as few as 58% of the AI-generated images, while others reached 92%. The AI systems themselves showed similar limitations. Four multimodal LLMs—GPT-4o (OpenAI), GPT-5 (OpenAI), Gemini 2.5 Pro (Google), and Llama 4 Maverick (Meta)—achieved accuracy rates between 57% and 85%. Even ChatGPT-4o, which helped generate the deepfake images, failed to identify all of them, though it outperformed the other models.

For chest X-rays created by RoentGen, radiologists achieved accuracy rates between 62% and 78%, while AI models ranged from 52% to 89%.

Interestingly, more years of professional experience were not associated with better detection. However, musculoskeletal radiologists performed better than other subspecialists.

Signs of AI-Generated X-Rays

Researchers found that synthetic X-rays often share subtle visual patterns.

“Deepfake medical images often look too perfect,” Dr. Tordjman said. “Bones are overly smooth, spines unnaturally straight, lungs overly symmetrical, blood vessel patterns excessively uniform, and fractures appear unusually clean and consistent, often limited to one side of the bone.”

Risks and Potential Safeguards

The findings raise serious concerns about how AI-generated medical images could be misused. The researchers warn that fake X-rays could be submitted as evidence in fraudulent legal claims or injected into hospital systems to manipulate diagnoses.

To address these risks, the researchers recommend stronger digital protections. These include invisible watermarks embedded directly in images and cryptographic signatures linked to the technician who captured the scan, helping verify authenticity.
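As a rough illustration of the signature idea, the sketch below binds an image's bytes to a technician ID so that any later change to the pixels or the ID can be detected. It is not the researchers' method. It uses a keyed hash (HMAC) from the Python standard library as a simplified stand-in for a true public-key digital signature, and the function names, the technician ID format, and the demo key are all hypothetical.

```python
import hashlib
import hmac


def sign_image(image_bytes: bytes, technician_id: str, key: bytes) -> str:
    """Return a hex tag that binds the image bytes to a technician ID.

    Assumption: HMAC-SHA256 stands in for a real digital signature scheme.
    """
    # Hash the image first so the signed message has a fixed size.
    digest = hashlib.sha256(image_bytes).digest()
    # Include the (hypothetical) technician ID in the signed message.
    message = technician_id.encode("utf-8") + b"|" + digest
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_image(image_bytes: bytes, technician_id: str, key: bytes, tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_image(image_bytes, technician_id, key)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"   # hypothetical secret key
    original = b"placeholder pixel data"   # stands in for real image bytes
    tag = sign_image(original, "tech-001", key)

    print(verify_image(original, "tech-001", key, tag))         # untouched image
    print(verify_image(original + b"x", "tech-001", key, tag))  # altered image
```

Run as a script, the first check prints `True` and the second prints `False`, because a single added byte changes the hash. A production system would more likely use asymmetric signatures, so that verifiers never hold the signing key.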

What Comes Next for AI Medical Imaging

“We are potentially only seeing the tip of the iceberg,” Dr. Tordjman said. “The logical next step in this evolution is AI-generation of synthetic 3D images, such as CT and MRI. Establishing educational datasets and detection tools now is critical.”

To support training and awareness, the research team has released a curated deepfake dataset that includes interactive quizzes for educational use.

Reference: “The Rise of Deepfake Medical Imaging: Radiologists’ Diagnostic Accuracy in Detecting ChatGPT-generated Radiographs” by Mickael Tordjman, Murat Yuce, Amine Ammar, Mingqian Huang, Fadila Mihoubi Bouvier, Maxime Lacroix, Anis Meribout, Ian Bolger, Efe Ozkaya, Himanshu Joshi, Amine Geahchan, Rayane El Rahi, Haidara Almansour, Ashwin Singh Parihar, Carolyn Horst, Samet Ozturk, Muhammed Edip Isleyen, Gul Gizem Pamuk, Ahmet Tan Cimilli, Timothy Deyer, Arvin Calinghen, Enora Guillo, Rola Husain, Jean-Denis Laredo, Zahi A. Fayad, Xueyan Mei and Bachir Taouli, 24 March 2026, Radiology.

DOI: 10.1148/radiol.252094

Collaborating with Dr. Tordjman were Murat Yuce, M.D., M.S., Amine Ammar, M.D., Mingqian Huang, M.D., Fadila Mihoubi Bouvier, M.D., Maxime Lacroix, M.D., Anis Meribout, M.D., Ian Bolger, M.S., Efe Ozkaya, Ph.D., Himanshu Joshi, Ph.D., Amine Geahchan, M.D., Rayane El Rahi, M.D., Haidara Almansour, M.D., Ashwin Singh Parihar, M.D., Carolyn Horst, M.D., Samet Ozturk, M.D., Muhammed Edip Isleyen, M.D., Gul Gizem Pamuk, M.D., Ahmet Tan Cimilli, M.D., Timothy Deyer, M.D., Arvin Calinghen, M.D., Enora Guillo, M.D., Rola Husain, M.D., Jean-Denis Laredo, M.D., Zahi A. Fayad, Ph.D., Xueyan Mei, Ph.D., and Bachir Taouli, M.D., M.H.A.

