Researchers from MIT have developed a new algorithm that lets autonomous robots divvy up assembly tasks on the fly, an important step forward in multirobot cooperation.

Today’s industrial robots are remarkably efficient — as long as they’re in a controlled environment where everything is exactly where they expect it to be.

But put them in an unfamiliar setting, where they have to think for themselves, and their efficiency plummets. And the difficulty of on-the-fly motion planning increases exponentially with the number of robots involved. For even a simple collaborative task, a team of, say, three autonomous robots might have to think for several hours to come up with a plan of attack.

This week, at the Institute of Electrical and Electronics Engineers’ International Conference on Robotics and Automation, a group of MIT researchers was nominated for two best-paper awards for a new algorithm that can significantly reduce robot teams’ planning time. The plan the algorithm produces may not be perfectly efficient, but in many cases, the savings in planning time will more than offset the added execution time.

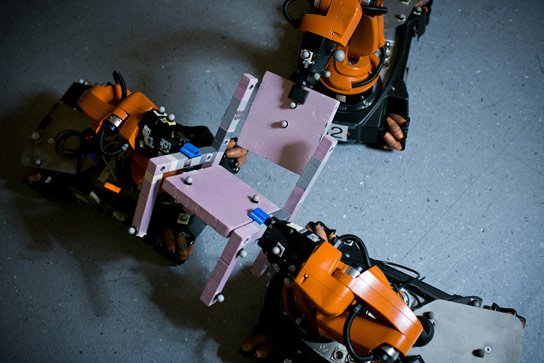

Watch the MIT researchers’ team of robots collaborating to build a chair. The robots autonomously plan how to grasp the parts and how to position their bases. Courtesy of the researchers

The researchers also tested the viability of their algorithm by using it to guide a crew of three robots in the assembly of a chair.

“We’re really excited about the idea of using robots in more extensive ways in manufacturing,” says Daniela Rus, the Andrew and Erna Viterbi Professor in MIT’s Department of Electrical Engineering and Computer Science, whose group developed the new algorithm. “For this, we need robots that can figure things out for themselves more than current robots do. We see this algorithm as a step in that direction.”

Rus is joined on the paper by three researchers in her lab — first author Mehmet Dogar, a postdoc, and Andrew Spielberg and Stuart Baker, both graduate students in electrical engineering and computer science.

Grasping consequences

The problem the researchers address is one in which a group of robots must perform an assembly operation that has a series of discrete steps, some of which require multirobot collaboration. At the outset, none of the robots knows which parts of the operation it will be assigned: Everything’s determined on the fly.

Computationally, the problem is already complex enough, given that at any stage of the operation, any of the robots could perform any of the actions, and during the collaborative phases, they have to avoid colliding with each other. But what makes planning really time-consuming is determining the optimal way for each robot to grasp each object it’s manipulating, so that it can successfully complete not only the immediate task, but also those that follow it.

“Sometimes, the grasp configuration may be valid for the current step but problematic for the next step because another robot or sensor is needed,” Rus says. “The current grasping formation may not allow room for a new robot or sensor to join the team. So our solution considers a multiple-step assembly operation and optimizes how the robots place themselves in a way that takes into account the entire process, not just the current step.”

The key to the researchers’ algorithm is that it defers its most difficult decisions about grasp position until it’s made all the easier ones. That way, it can be interrupted at any time, and it will still have a workable assembly plan. If it hasn’t had time to compute the optimal solution, the robots may on occasion have to drop and regrasp the objects they’re holding. But in many cases, the extra time that takes will be trivial compared to the time required to compute a comprehensive solution.

Principled procrastination

The algorithm begins by devising a plan that completely ignores the grasping problem. This is the equivalent of a plan in which all the robots would drop everything after every stage of the assembly operation, then approach the next stage as if it were a freestanding task.

Then the algorithm considers the transition from one stage of the operation to the next from the perspective of a single robot and a single part of the object being assembled. If it can find a grasp position for that robot and that part that will work in both stages of the operation, but which won’t require any modification of any of the other robots’ behavior, it will add that grasp to the plan. Otherwise, it postpones its decision.

Once it’s handled all the easy grasp decisions, it revisits the ones it’s postponed. Now, it broadens its scope slightly, revising the behavior of one or two other robots at one or two points in the operation, if necessary, to effect a smooth transition between stages. But again, if even that expanded scope proves too limited, it defers its decision.

If the algorithm were permitted to run to completion, its last few grasp decisions might require the modification of every robot’s behavior at every step of the assembly process, which can be a hugely complex task. It will often be more efficient to just let the robots drop what they’re holding a few times rather than to compute the optimal solution.
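The deferral strategy described above can be illustrated with a toy sketch. This is not the researchers’ implementation: the grasp labels, the rule that two robots may not use the same grasp label in the same step, the assumption that every robot takes part in every step, and the `budget` parameter (a stand-in for being interrupted) are all hypothetical simplifications chosen for illustration.

```python
from itertools import combinations, product


def step_is_valid(grasps, feasible_step):
    """Every grasp must be feasible, and no two robots may share a grasp."""
    feasible_ok = all(g in feasible_step[r] for r, g in grasps.items())
    return feasible_ok and len(set(grasps.values())) == len(grasps)


def regrasps(plan):
    """List (robot, step) transitions where the robot must drop and regrasp."""
    return [(r, i) for i in range(len(plan) - 1)
            for r in plan[i] if plan[i][r] != plan[i + 1][r]]


def initial_plan(feasible):
    """Plan each step in isolation, ignoring transitions between steps."""
    plan = []
    for feasible_step in feasible:
        robots = sorted(feasible_step)
        for choice in product(*(sorted(feasible_step[r]) for r in robots)):
            grasps = dict(zip(robots, choice))
            if step_is_valid(grasps, feasible_step):
                plan.append(grasps)
                break
        else:
            raise ValueError("no collision-free grasps for a step")
    return plan


def try_fix(plan, feasible, robot, i, scope):
    """Give `robot` one grasp for steps i and i+1, revising at most `scope`
    entries of other robots. Returns a new plan, or None to postpone."""
    before = set(regrasps(plan))
    others = [(s, r) for s in (i, i + 1) for r in plan[s] if r != robot]
    for g in sorted(feasible[i][robot] & feasible[i + 1][robot]):
        for k in range(scope + 1):
            for changed in combinations(others, k):
                options = [sorted(feasible[s][r]) for s, r in changed]
                for values in product(*options):
                    cand = [dict(step) for step in plan]
                    cand[i][robot] = cand[i + 1][robot] = g
                    for (s, r), v in zip(changed, values):
                        cand[s][r] = v
                    valid = (step_is_valid(cand[i], feasible[i])
                             and step_is_valid(cand[i + 1], feasible[i + 1]))
                    # Accept only if no new regrasp is introduced elsewhere.
                    if valid and set(regrasps(cand)) <= before:
                        return cand
    return None


def anytime_plan(feasible, max_scope, budget):
    """Easy decisions first; widen the scope only for postponed ones.
    `budget` caps the number of attempts, simulating an interruption."""
    plan = initial_plan(feasible)  # always workable, but drops everything
    for scope in range(max_scope + 1):
        for robot, i in regrasps(plan):
            if plan[i][robot] == plan[i + 1][robot]:
                continue  # already smoothed by an earlier revision
            if budget == 0:
                return plan  # interrupted: the current plan still works
            budget -= 1
            fixed = try_fix(plan, feasible, robot, i, scope)
            if fixed is not None:
                plan = fixed
    return plan


# Two robots, two steps; each set holds the grasps that work in that step.
feasible = [
    {"a": {"back", "leg"}, "b": {"leg", "seat"}},
    {"a": {"leg"}, "b": {"rail"}},
]
plan = anytime_plan(feasible, max_scope=1, budget=10)
print(plan, regrasps(plan))
```

In this toy instance, robot `a` cannot keep a single grasp at scope 0 because robot `b` occupies the only grasp that works in both steps. At scope 1 the planner revises `b`’s first-step grasp to make room. Robot `b` has no grasp common to both steps, so one regrasp remains in the final plan. Stopping early with a smaller `budget` still returns a usable plan, just with more drops.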

In addition to their experiments with real robots, the researchers ran a host of simulations involving more complex assembly operations. In some, they found that their algorithm could, in minutes, produce a workable plan that involved just a few drops, where the optimal solution took hours to compute. In others, the optimal solution was intractable: it would have taken millennia to compute. But their algorithm could still produce a workable plan.

“With an elegant heuristic approach to a complex planning problem, Rus’s group has shown an important step forward in multirobot cooperation by demonstrating how three mobile arms can figure out how to assemble a chair,” says Bradley Nelson, the Professor of Robotics and Intelligent Systems at the Swiss Federal Institute of Technology in Zurich. “My biggest concern about their work is that it will ruin one of the things I like most about Ikea furniture: assembling it myself at home.”
