Researchers at the Department of Energy’s Oak Ridge National Laboratory have developed a quantum chemistry simulation benchmark to evaluate the performance of quantum devices and guide the development of applications for future quantum computers.

Their findings were published in npj Quantum Information.

Quantum computers use the laws of quantum mechanics and units known as qubits to greatly expand how much information can be transmitted and processed. Whereas traditional “bits” have a value of either 0 or 1, qubits can exist in a superposition of 0 and 1, that is, a weighted combination of both values, allowing for a vast number of possibilities for storing data.

While still in their early stages, quantum systems have the potential to be exponentially more powerful than today’s leading classical computing systems and promise to revolutionize research in materials, chemistry, high-energy physics, and across the scientific spectrum.

But because these systems are in their relative infancy, understanding what applications are well suited to their unique architectures is considered an important field of research.

“We are currently running fairly simple scientific problems that represent the sort of problems we believe these systems will help us to solve in the future,” said ORNL’s Raphael Pooser, principal investigator of the Quantum Testbed Pathfinder project. “These benchmarks give us an idea of how future quantum systems will perform when tackling similar, though exponentially more complex, simulations.”

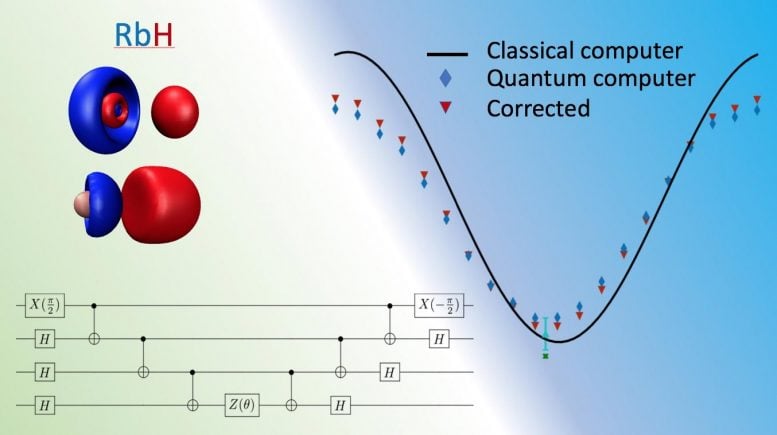

Pooser and his colleagues calculated the bound state energy of alkali hydride molecules on 20-qubit IBM Tokyo and 16-qubit Rigetti Aspen processors. These molecules are simple and their energies are well understood, which makes them an effective test of a quantum computer’s performance.

By tuning the quantum computer as a function of a few parameters, the team calculated these molecules’ bound states with chemical accuracy, measured against reference values obtained using simulations on a classical computer. Equally important, the quantum calculations included systematic error mitigation, illuminating the shortcomings in current quantum hardware.
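The idea of tuning a few parameters until the computed energy is as low as possible can be illustrated with a small classical sketch. Everything in it is an assumption made for illustration: the 2x2 Hamiltonian entries are invented numbers, the one-parameter trial state and grid search stand in for whatever the team actually ran on hardware, and the 1.6 millihartree threshold is the commonly quoted value for chemical accuracy (about 1 kcal/mol) rather than a figure taken from the article.

```python
import math

# Commonly quoted chemical-accuracy threshold (about 1 kcal/mol), in hartree.
# This value is an assumption; the article does not state it.
CHEMICAL_ACCURACY_HARTREE = 1.6e-3


def energy(theta: float, a: float, b: float, c: float) -> float:
    """Energy <psi|H|psi> for H = [[a, b], [b, c]] and psi = (cos theta, sin theta)."""
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    return a * cos_t ** 2 + c * sin_t ** 2 + 2.0 * b * sin_t * cos_t


def exact_ground_energy(a: float, b: float, c: float) -> float:
    """Lowest eigenvalue of the real symmetric matrix [[a, b], [b, c]]."""
    return (a + c) / 2.0 - math.hypot((a - c) / 2.0, b)


def variational_minimum(a: float, b: float, c: float, steps: int = 2000):
    """Scan the single parameter theta over [0, pi) and keep the lowest energy."""
    best_theta, best_energy = 0.0, energy(0.0, a, b, c)
    for k in range(1, steps):
        theta = math.pi * k / steps
        candidate = energy(theta, a, b, c)
        if candidate < best_energy:
            best_theta, best_energy = theta, candidate
    return best_theta, best_energy


if __name__ == "__main__":
    # Hypothetical toy Hamiltonian, not data from the paper.
    a, b, c = -1.0, 0.2, -0.4
    theta, tuned = variational_minimum(a, b, c)
    reference = exact_ground_energy(a, b, c)
    print(f"tuned energy     : {tuned:.6f} hartree at theta = {theta:.4f} rad")
    print(f"reference energy : {reference:.6f} hartree")
    print("within chemical accuracy:", tuned - reference < CHEMICAL_ACCURACY_HARTREE)
```

On a real device the energy for each parameter setting would be estimated from repeated, noisy measurements rather than computed exactly, which is where the error mitigation described in the article comes in.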

Systematic error occurs when the “noise” inherent in current quantum architectures affects their operation. Because quantum computers are extremely delicate (for instance, the qubits used by the ORNL team are kept in a dilution refrigerator at around 20 millikelvin, or colder than -450 degrees Fahrenheit), temperatures and vibrations from their surrounding environments can create instabilities that throw off their accuracy. Such noise may, for example, cause a qubit to rotate 21 degrees instead of the desired 20, greatly affecting a calculation’s outcome.
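To get a feel for why a one-degree over-rotation matters, consider a minimal single-qubit sketch. It assumes an idealized qubit that starts in the 0 state and is rotated about a single axis, in which case the probability of measuring 1 is sin²(θ/2) for a rotation angle θ. The function names are hypothetical, and this toy model is not the error model used in the paper.

```python
import math


def excited_probability(angle_deg: float) -> float:
    """Probability of measuring 1 after rotating the 0 state by angle_deg about one axis."""
    half_angle = math.radians(angle_deg) / 2.0
    return math.sin(half_angle) ** 2


def repeated_rotation_error(target_deg: float, actual_deg: float, repetitions: int) -> float:
    """Gap in measurement probability after applying the same rotation several times.

    Rotations about a single axis add, so the angle error accumulates with
    every repetition.
    """
    ideal = excited_probability(target_deg * repetitions)
    noisy = excited_probability(actual_deg * repetitions)
    return abs(noisy - ideal)


if __name__ == "__main__":
    # 20 degrees intended, 21 degrees delivered, as in the article's example.
    for repetitions in (1, 3, 5):
        gap = repeated_rotation_error(20.0, 21.0, repetitions)
        print(f"{repetitions} rotation(s): probability shifts by {gap:.4f}")
```

In this toy model the shift from a single rotation is small, but because the same miscalibration is applied at every gate, it accumulates rather than averaging out, which is what makes such errors systematic.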

“This new benchmark characterizes the ‘mixed state,’ or how the environment and machine interact, very well,” Pooser said. “This work is a critical step toward a universal benchmark to measure the performance of quantum computers, much like the LINPACK metric is used to judge the fastest classical computers in the world.”

While the calculations were fairly simple compared to what is possible on leading classical systems such as ORNL’s Summit, currently ranked as the world’s most powerful computer, quantum chemistry, along with nuclear physics and quantum field theory, is considered a quantum “killer app.” In other words, it is believed that, as they evolve, quantum computers will be able to perform a wide swath of chemistry-related calculations more accurately and more efficiently than any classical computer currently in operation, including Summit.

“The current benchmark is a first step towards a comprehensive suite of benchmarks and metrics that govern the performance of quantum processors for different science domains,” said ORNL quantum chemist Jacek Jakowski. “We expect it to evolve with time as the quantum computing hardware improves. ORNL’s vast expertise in domain sciences, computer science, and high-performance computing make it the perfect venue for the creation of this benchmark suite.”

ORNL has been planning for paradigm-shifting platforms such as quantum for more than a decade via dedicated research programs in quantum computing, networking, sensing, and quantum materials. These efforts aim to accelerate the understanding of how near-term quantum computing resources can help tackle today’s most daunting scientific challenges and support the recently announced National Quantum Initiative, a federal effort to ensure American leadership in quantum sciences, particularly computing.

Such leadership will require systems like Summit to ensure the steady march from devices such as those used by the ORNL team to larger-scale quantum systems exponentially more powerful than anything in operation today.

Access to the IBM and Rigetti processors was provided by the Quantum Computing User Program at the Oak Ridge Leadership Computing Facility, which provides early access to existing, commercial quantum computing systems while supporting the development of future quantum programmers through educational outreach and internship programs. Support for the research came from DOE’s Office of Science Advanced Scientific Computing Research program.

“This project helps DOE better understand what will work and what won’t work as they forge ahead in their mission to realize the potential of quantum computing in solving today’s biggest science and national security challenges,” Pooser said.

Next, the team plans to calculate the exponentially more complex excited states of these molecules, which will help them devise further novel error mitigation schemes and bring the possibility of practical quantum computing one step closer to reality.

Reference: “Quantum chemistry as a benchmark for near-term quantum computers” by Alexander J. McCaskey, Zachary P. Parks, Jacek Jakowski, Shirley V. Moore, Titus D. Morris, Travis S. Humble and Raphael C. Pooser, 15 November 2019, npj Quantum Information.

DOI: 10.1038/s41534-019-0209-0
