Scientific research is based on the relationship between the reality of nature, as it is observed, and a representation of this reality, formulated by a theory in mathematical language. If all the consequences of the theory are confirmed experimentally, it is considered validated. This approach, which has been used for nearly four centuries, has built a consistent body of knowledge. But these advances have been made thanks to the intelligence of human beings who, despite everything, can still hold on to their preexisting beliefs and biases. This can affect the progress of science, even for the greatest minds.

The first mistake

In general relativity, his masterwork, Einstein wrote the equation describing the evolution of the universe over time. The solution to this equation shows that the universe is unstable, rather than the huge sphere of constant volume, with stars sliding around inside it, that people believed in at the time.

At the beginning of the 20th century, people lived with the well-established idea of a static universe where the motion of stars never varies. This is probably due to Aristotle’s teachings, which held that the sky is immutable, unlike Earth, which is perishable. This idea caused a historical anomaly: in 1054, the Chinese noticed the appearance of a new light in the sky, but no European document mentions it. Yet it could be seen in full daylight and lasted for several weeks. It was a supernova, that is, a dying star, the remnants of which can still be seen as the Crab Nebula. The prevailing thought in Europe prevented people from accepting a phenomenon that so utterly contradicted the idea of an unchanging sky. A supernova visible to the naked eye is a very rare event, occurring only about once a century. The most recent one dates back to 1987. So Aristotle was almost right in thinking that the sky was unchanging – on the scale of a human life at least.

To remain in accordance with the idea of a static universe, Einstein introduced a cosmological constant into his equations, which froze the state of the universe. His intuition led him astray: in 1929, when Hubble demonstrated that the universe is expanding, Einstein admitted that he had made “his biggest mistake.”

Quantum randomness

Quantum mechanics developed around the same time as relativity. It describes physics at the infinitely small scale. Einstein contributed greatly to the field in 1905, by interpreting the photoelectric effect as a collision between electrons and photons – that is, infinitesimal particles carrying pure energy. In other words, light, which had traditionally been described as a wave, behaves like a stream of particles. It was this step forward, not the theory of relativity, that earned Einstein the Nobel Prize in 1921.

But despite this vital contribution, he remained stubborn in rejecting the key lesson of quantum mechanics – that the world of particles is not bound by the strict determinism of classical physics. The quantum world is probabilistic. We only know how to predict the probability of an occurrence among a range of possibilities.

In Einstein’s blindness, once again we can see the influence of Greek philosophy. Plato taught that thought should remain ideal, free from the contingencies of reality – a noble idea, but one that does not follow the precepts of science. Knowledge demands perfect consistency with all predicted facts, whereas belief is based on likelihood, produced by partial observations. Einstein himself was convinced that pure thought was capable of fully capturing reality, but quantum randomness contradicts this hypothesis.

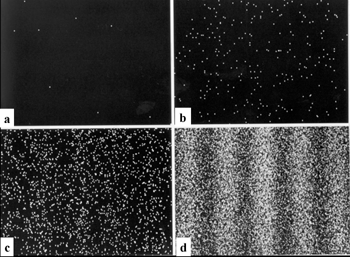

In practice, this randomness is not pure noise, as it is constrained by Heisenberg’s uncertainty principle. This principle imposes collective determinism on groups of particles – a single electron is free, as we do not know how to calculate its trajectory after it passes through a hole, but a million electrons draw a diffraction pattern, showing dark and light fringes that we do know how to calculate.
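The contrast between one unpredictable electron and a predictable pattern can be sketched numerically. The example below is only an illustration under simplifying assumptions: it uses an idealized two-slit intensity proportional to cos²(πu), where u is a dimensionless screen position, it ignores the single-slit envelope, and the names `fringe_intensity`, `sample_hits`, and `histogram` are hypothetical helpers, not part of any library. It uses 100,000 simulated electrons rather than a million to keep the run short.

```python
import math
import random


def fringe_intensity(u: float) -> float:
    """Relative intensity at dimensionless screen position u (assumed model).

    Bright fringes sit at integer u, dark fringes at half-integer u.
    """
    return math.cos(math.pi * u) ** 2


def sample_hits(n: int, rng: random.Random, half_width: float = 2.0) -> list[float]:
    """Draw n landing positions by rejection sampling from fringe_intensity."""
    hits = []
    while len(hits) < n:
        u = rng.uniform(-half_width, half_width)
        # Accept u with probability equal to the relative intensity (max is 1).
        if rng.random() < fringe_intensity(u):
            hits.append(u)
    return hits


def histogram(hits: list[float], bins: int = 16, half_width: float = 2.0) -> list[int]:
    """Count hits in equal-width bins across [-half_width, half_width]."""
    counts = [0] * bins
    width = 2 * half_width / bins
    for u in hits:
        index = min(int((u + half_width) / width), bins - 1)
        counts[index] += 1
    return counts


rng = random.Random(0)
few = histogram(sample_hits(10, rng))        # ten electrons: no visible pattern
many = histogram(sample_hits(100_000, rng))  # many electrons: clear fringes

print("10 electrons:     ", few)
print("100,000 electrons:", many)
```

With only ten electrons the counts look arbitrary. With many electrons, the bins next to integer positions fill up while those next to half-integer positions stay nearly empty, even though no individual landing position was predictable.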

Einstein did not accept this fundamental indeterminism, as summed up by his provocative verdict: “God does not play dice with the universe.” He imagined the existence of hidden variables, i.e., yet-to-be-discovered numbers beyond the mass, charge, and spin that physicists use to describe particles. But experiments did not support this idea. It is undeniable that a reality exists that transcends our understanding – we cannot know everything about the world of the infinitely small.

The fortuitous whims of the imagination

Within the process of the scientific method, there is still a stage that is not completely objective. This is what leads to conceptualizing a theory, and Einstein, with his thought experiments, gives a famous example of it. He stated that “imagination is more important than knowledge.” Indeed, when looking at disparate observations, a physicist must imagine an underlying law. Sometimes, several theoretical models compete to explain a phenomenon, and it is only at this point that logic takes over again.

“The role of intelligence is not to discover, but to prepare. It is only good for service tasks.” (Simone Weil, “Gravity and Grace”)

In this way, the progress of ideas springs from what is called intuition. It is a sort of jump in knowledge that goes beyond pure rationality. The line between objective and subjective is no longer completely solid. Thoughts come from neurons under the effect of electromagnetic impulses, some of them being particularly fertile, as if there were a short circuit between cells, where chance is at work.

But these intuitions, or “flowers” of the human spirit, are not the same for everybody – Einstein’s brain produced “E=mc²”, whereas Proust’s brain came up with an admirable metaphor. Intuition pops up randomly, but this randomness is constrained by each individual’s experience, culture, and knowledge.

The benefits of randomness

It should not come as shocking news that there is a reality not grasped by our own intelligence. Without randomness, we are guided by our instincts and habits, everything that makes us predictable. What we do is limited almost exclusively to this first layer of reality, with ordinary concerns and obligatory tasks. But there is another layer of reality, the one where obvious randomness is the trademark.

“Never will an administrative or academic effort replace the miracles of chance to which we owe great men.” (Honoré de Balzac, “Cousin Pons”)

Einstein is an example of an inventive and free spirit, yet he still kept his biases. His “first mistake” can be summed up by saying, “I refuse to believe in a beginning of the universe.” However, experiments proved him wrong. His verdict on God playing dice means, “I refuse to believe in chance.” Yet quantum mechanics involves obligatory randomness. His sentence raises the question of whether he would believe in God in a world without chance, which would greatly curtail our freedom, as we would then be confined in absolute determinism. Einstein was stubborn in his refusal. For him, the human brain should be capable of knowing what the universe is. With a lot more modesty, Heisenberg teaches us that physics is limited to describing how nature reacts in given circumstances.

Quantum theory demonstrates that total understanding is not available to us. In return, it offers randomness, which brings frustrations and dangers, but also benefits.

“Man can only escape the laws of this world for a flash of time. Instants of pausing, of contemplating, of pure intuition… It’s with these flashes that he is capable of the superhuman.” (Simone Weil, “Gravity and Grace”)

Einstein, a legendary physicist, is the perfect example of an imaginative being. His refusal of randomness is therefore a paradox, because randomness is what makes intuition possible, allowing for creative processes in both science and art.

Written by François Vannucci, Professor Emeritus, researcher in particle physics, specialist in neutrinos, Université de Paris.

Translated from the French by Rosie Marsland.

Adapted from an article originally published on The Conversation.

15 Comments

Perhaps a further evolved human brain will be capable of knowing what the universe is.

I think the self-consistent LCDM cosmology that was discovered 2000ish-2020ish is a pretty good start: it lets us know the matter-energy content of the universe to 100%, at something like 1% uncertainty.

As a scientist I am shocked such drivel is even being posted. Pointing out Einstein’s failures when the atom wasn’t even split until 1938, when the laser wasn’t fired until the 1960s, when neither X-ray spectroscopy nor the electron microscope had even been invented. By pure thought Einstein deduced these universal truths while working as a lowly clerk in a patent office and without the data from supercolliders. Yet most of his work has stood the test of time. It was in the 1990s that 2 scientists used xenon atoms sprayed onto a liquid-helium-chilled crystal of nickel in order to arrange single atoms into the logo I.B.M., giving man a good first look at atoms. To the author of this drivel, show us your groundbreaking, history-making discoveries? Unlike Einstein, you, like a fart in the wind, will be instantly forgotten. Unlike you, who spends your time bashing the dead, I and others have been working on how concretion occurs in space. I have even sent my conclusions to the best physicists in the USA. To give you a taste (in layman’s terms) please read on… when supermassive black holes eat gases and matter from stars and planets, said gases and matter are converted to black hole matter, which is hyper dense and super heavy (1 teaspoon of neutron star material is estimated to weigh 10 million tons). From recent satellite data we now know black holes spin at up to 81% the speed of light. And when black holes eat too much, the recently converted matter is sloughed off the supermassive black holes and is ejected from the poles of the black hole and into space at a high escape velocity. The ejected black hole matter maintains all of its black hole properties and forms globes of black hole material. When several of these small globes of black hole material encounter gases and matter from asteroids and comets, they then start to attract these gases and matter to themselves (concretion), thus seeding the universe with new suns and planets.
New cutting-edge data suggests these small globes of black hole matter maintain a quantum interaction that leads to new solar systems being formed. The quantum interaction is the same as that seen in quantum computers. This quantum interaction leads to organized solar systems, which has occurred billions of times. The size of the globe of black hole material determines whether a sun or a planet is made. The larger globes suck in most of the gases and, by their hyper rotation, gravity, and the radiation then produced by insane frictional forces, ignite the condensing gases to start a sun’s life by the process of fusion. That is what is observed in the Eagle Nebula, which is a star factory…. Let’s now see your best conclusions.

Did you even read the article? How do you reckon that the author “spends your time bashing the dead?”

He talks about “Einstein’s master work of general relativity,” his “inventive and free spirit” and calls him “a legendary physicist.”

He certainly isn’t claiming that he is better than Einstein, just that Einstein wasn’t perfect.

You, on the other hand, seem to be nominating yourself as a great physicist, yet don’t even know how to make a paragraph.

Ok, Manny, but the article is specifically about how humans are faulty by nature. Einstein is considered one of the greatest ever, which is why he is the perfect example for showing this.

Additionally, no one here is gonna care about your paper. If you’re looking for validation, you ought to submit it to a scientific journal.

Nature is fixed and hence validated. Being random, therefore, the universe is not part of scientific studies, whereas the concept of time and space is realised.

I can explain what you are unable to.

Somebody, please tell me what is random? Does it mean an individual particle has a will of its own and an energy source to change its direction at that will, without needing a cause, in order to break away from determinism?

The location of a small mass cannot be determined exactly. Instead you can only talk about the probabilities of finding it in different locations, e.g., 1/4 at A, 1/2 at B and 1/4 at C. (Probabilities must add up to 1.)

This doesn’t mean the mass has a will of its own – it has to be at A or B or C, but never at D. It cannot change the probabilities.

An analogy is that a die must settle in 1 of 6 possible states. It is probabilistic, not deterministic, but the die doesn’t decide which way to land.
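A rough way to see this in numbers is to repeat the "measurement" many times on a computer. The sketch below simply reuses the illustrative probabilities from the example (1/4, 1/2, 1/4); the names `LOCATION_PROBABILITIES`, `measure`, and `repeat_measurements` are made up for the illustration, and a pseudo-random generator only stands in for quantum randomness here.

```python
import random
from collections import Counter

# Probabilities from the example above; they must add up to 1.
LOCATION_PROBABILITIES = {"A": 0.25, "B": 0.5, "C": 0.25}


def measure(rng: random.Random) -> str:
    """Return one measured location, drawn with the fixed probabilities."""
    locations = list(LOCATION_PROBABILITIES)
    weights = list(LOCATION_PROBABILITIES.values())
    return rng.choices(locations, weights=weights, k=1)[0]


def repeat_measurements(n: int, seed: int = 0) -> Counter:
    """Count how often each location turns up in n independent measurements."""
    rng = random.Random(seed)
    return Counter(measure(rng) for _ in range(n))


counts = repeat_measurements(10_000)
for location in ("A", "B", "C", "D"):
    print(location, counts[location] / 10_000)
```

No single outcome can be predicted, yet the observed frequencies settle close to the stated probabilities, and D never appears because its probability is zero.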

Thank you so much for your reply. I’m not asking to determine the position of a particle. I’m asking whether, if you don’t observe it, it still strictly obeys deterministic laws, i.e., cause and effect. I understand that when you try to measure it, it will only have probabilities of being in a certain place. In other words, is the universe completely deterministic, right down to the smallest quantum particle, quantum field, or fluctuation, if you don’t observe things? That would also mean that Einstein was right as long as you don’t try to measure things.

As I noted in my comment below, I rather place the “deterministic state, random observation” properties into the relativistic quantum theory that is modern quantum field theory. Then you have ripples, but you also know that the field theory is effective – scale dependent – so philosophic “determinism” is rejected. But that wasn’t even Einstein’s problem, he did not like the random observation since then philosophic “realism” is also rejected.

It leaves you with empirical determinism – classical deterministic approximations – and empirical realism – if you hit it, it hits back.

What Einstein considered his biggest mistake was putting in a spacetime curvature constant to balance the FLRW-type cosmological equations. They are still in an unstable balance that must either collapse or expand, so it was a huge mistake in that sense. But it is now known as dark energy, by being put on the stress-energy side of the equations, and is what is needed to make a self-consistent cosmology.

What rejects hidden variables in quantum physics is non-local entanglement, another concept that Einstein did not like and called “action at a distance”. But I would rather abstract that and the “deterministic state, random observation” properties into the relativistic quantum theory that is modern quantum field theory, which among other things does away with the classic quantum physics ‘paradox’ of either particle or wave. (Since particles are natural resonant ripples in the corresponding field – you can observe either property, just not at the same time.) Then relativity lets us see physical laws evolve according to local light cone causality, while quantum physics takes back all the non-locality it can through entanglement correlations that don’t break causality.

Which brings me to the observed fact, from the LHC’s thorough testing of standard particle quantum field theory in 2012-2017, that any exotic interactions are too weak to make us anything other than biochemical machines. I’m not sure if Vannucci wants to make a philosophical and/or religious point outside of the science at hand by asserting that randomness has something to do with evolved creativity. But in any case we have no choice but to act according to determined laws.

Einstein’s Two Big Mistakes?

(or half the way due to understanding)

By FRANÇOIS VANNUCCI, UNIVERSITY OF PARIS, MAY 31, 2020

“HALF THE WAY DUE TO UNDERSTANDING”

Posted on LinkedIn

by Giorgios (Gio) Vassiliou

-Inventor and Founder of Transcendental Surrealism-

“I’m only human,” says the famous pop song by Rag’n’Bone Man, and this is so true of humanity’s position in the universe. No matter how well we understand the cosmic laws or the interconnections between them, there will always be new things to discover. This makes the example of Albert Einstein’s life so exciting in our sight, because he proved that no matter how huge our IQ is, we will always remain humans with unsurpassable limitations.

This is far more exciting if we consider that during his tremendous career, he transformed himself from a clerk in Bern into the most celebrated scientist of the 20th century! Of course he made mistakes. In his correspondence with the great Niels Bohr, it’s obvious that, on the details at least, he was almost beaten by the great Danish quantum giant. But despite his defeat, in the big picture, the universal conception, Einstein was far ahead of his time, and even in some cases far ahead of today!!

We have to expand our understanding to exceed our intellectual limits, to accept that as a human being Einstein had the rightful honor to make mistakes.

After all, mistakes somehow work like the salt in food preparation. Sometimes we put in more salt and other times less. This is the cosmic game. The balance axis of equilibrium is not always achieved. The example of Hooke’s pendulum can prove my claim, for all of us including Einstein!

The thing is that Einstein had to balance vast things and newly discovered cosmic laws in a way that could bring harmony to his cosmic conception. In order to achieve that, he had to take a mental position as close as possible to the ideal equilibrium axis. (Believe me, there is no other way!) By doing so, he had to accept some pieces of the cosmic plan and consequently reject others.

He knew very well that a single human mind cannot describe the universe with crystal clarity, and by doing so he projected his essential human part, that of making mistakes, onto us all. Remember his personal life and consider how many mistakes he made repeatedly!

There is one thing that I must mention: if there is a cosmic scale that balances the superstructure of our holographic universe, then the only place we must take, to understand as much as we can, is that of the equilibrium axis. I don’t really know if that axis exists somewhere in the center or elsewhere.

But it is very probably to be found around that area. Otherwise the whole structure would look like a buckling skyscraper. If Einstein had to do that, he knew that around the center he could find a place of stability, as Archimedes meant with his famous words: “Δός μοι πᾶ στῶ καὶ τὰν γᾶν κινάσω” (“Give me a stable place and I shall move the Earth!”, in Ancient Greek, Doric dialect).

The center of mass cannot be found anywhere else but in the center or in the middle distance of an object’s matter. This law applies even to the material universe that we all live in.

If not at the exact center, then somewhere close to it. Under that condition Einstein was trying to balance his mind, knowing that mistakes cannot be avoided. The human presence and the universe constitute a unity, but a unity that is interactive. This interaction brings together the right and the wrong, as possibilities that can become reality. The universal laboratory that executes billions of interactions every second is the proof of my proposal. Also, if Einstein was looking for his stable place, like Archimedes, to understand the universal laws, he had at least taken the right direction. He understood, clear as crystal, that human nature cannot escape mistakes.

Although he had to go all the way, due to understanding, he knew deep down that understanding rests somewhere close to, or exactly on, the equilibrium axis that we all must find, the universe included! And by doing so, he placed himself close to the ideal center, in other words at the half way due to understanding. So from this point of view and under that condition, even with huge mistakes, he proved himself 100% right.

What drivel.

… No, that is not correct. He had only one error: he thought that he was right. That was pointed out to him at the ceremony as well…

… It takes The God to understand the workings of a God, in other words, just another try…