A team of physicists is the first to successfully realize a quantum walk in two dimensions. The researchers believe that simulating such quantum walks could give physicists new insights into how electrons travel from one atom to the next.

Tourists who drift aimlessly during a sightseeing tour are moving randomly, just like electrons that move from one atom to the next. To obtain a better understanding of these random motions, it is often useful to reduce their complexity. Physicists do this by simulating random walks. These simulations can bring new insights into the quantum world as well. Researchers at the Max Planck Institute for the Science of Light and the University of Paderborn and their colleagues are now the first to successfully realize an arrangement for a quantum walk in two dimensions. The experimental setup can be used to investigate many quantum phenomena.

If a tourist on a sightseeing tour were to be guided by chance through a city, he could toss a coin at each crossroads to decide which direction to take. If a friend later sets out to look for him, she has no way of knowing where the random walker is at that moment. The more crossroads the tourist guided by the coin has already passed, the greater the number of places he could be. If one of the places is located on several paths, there is a higher probability that the friend can find him there; his position would provide no information on the path chosen, however. If the random walker eventually tells his friend the exact route, this obviously does not change anything about the probability with which the possible destinations are reached.
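The coin-guided tour described above can be sketched in a few lines of Python. This is a minimal illustration, assuming an idealized square street grid with four equally likely directions at every crossroads; the names `MOVES` and `classical_walk` are invented for this sketch. It computes the exact probability of each possible location and shows that places lying on several paths are more likely.

```python
from collections import defaultdict
from fractions import Fraction

# Assumed street grid: four equally likely moves at every crossroads.
MOVES = [(1, 0), (-1, 0), (0, 1), (0, -1)]

def classical_walk(steps):
    """Return {position: probability} after `steps` coin-guided moves."""
    dist = {(0, 0): Fraction(1)}
    for _ in range(steps):
        nxt = defaultdict(Fraction)  # missing positions start at probability 0
        for (x, y), p in dist.items():
            for dx, dy in MOVES:
                # Probabilities of different paths to the same place simply add up.
                nxt[(x + dx, y + dy)] += p / 4
        dist = dict(nxt)
    return dist

dist = classical_walk(2)
for pos, p in sorted(dist.items(), key=lambda kv: (-kv[1], kv[0])):
    print(pos, p)
```

After two crossroads the starting point can be reached by four different paths and has probability 1/4, a diagonal neighbor such as (1, 1) lies on two paths and has probability 1/8, and a far corner such as (2, 0) lies on a single path and has probability 1/16.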

This changes, however, if the walker obeys the laws of quantum physics. Surprisingly, the quantum walker must then have been at all the places that are on all the possible routes to a destination, because a quantum rambler keeps all possible route options open and also covers them simultaneously, as if he had a multiple identity. In physics, this multiple identity is called a superposition state. The strange walker can even cancel himself out at points along the route that he must have passed twice. This is why the probability with which such a quantum walker reaches his destination is different from that of a random rambler who is subject to classical physics.
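The cancellation can be made concrete with a textbook toy model, the one-dimensional Hadamard walk. This is a sketch under stated assumptions and not the researchers' setup: the walker lives on a line, carries a two-sided quantum coin, and starts with the coin pointing left. The function names are invented for this illustration.

```python
import math
from collections import defaultdict

def hadamard_walk(steps):
    """1D quantum walk with a Hadamard coin; returns {position: probability}."""
    h = 1 / math.sqrt(2)
    # Amplitudes keyed by (position, coin); coin 0 steps left, coin 1 steps right.
    amp = {(0, 0): 1.0}
    for _ in range(steps):
        nxt = defaultdict(float)
        for (x, coin), a in amp.items():
            # Hadamard coin: |0> -> (|0> + |1>)/sqrt(2), |1> -> (|0> - |1>)/sqrt(2)
            sign = 1.0 if coin == 0 else -1.0
            nxt[(x - 1, 0)] += h * a          # amplitudes of different routes add...
            nxt[(x + 1, 1)] += sign * h * a   # ...and can cancel when signs differ
        amp = dict(nxt)
    prob = defaultdict(float)
    for (x, _), a in amp.items():
        prob[x] += a * a  # amplitudes stay real for this coin and start state
    return dict(prob)

def classical_walk_1d(steps):
    """Fair coin toss on a line: binomial distribution over positions."""
    return {2 * k - steps: math.comb(steps, k) / 2 ** steps for k in range(steps + 1)}

quantum = hadamard_walk(3)
classical = classical_walk_1d(3)
for x in sorted(classical):
    print(x, round(quantum[x], 3), classical[x])
```

After three steps the classical walker is found at positions -3, -1, 1, 3 with probabilities 1/8, 3/8, 3/8, 1/8, while the quantum walker in this model gives 1/8, 5/8, 1/8, 1/8. The two routes that would arrive at position 1 moving right carry opposite signs and cancel exactly.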

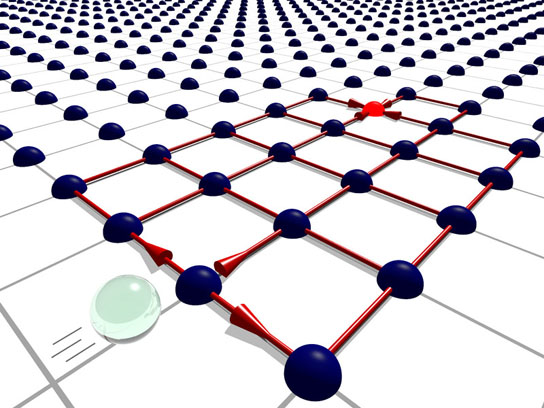

A light pulse travels through a network of optical fibers with 169 nodes

He remains a quantum walker only when he does not tell anyone about his route. Informing his friend about the route taken, which corresponds to a measurement in physics, forces him to decide on a route retrospectively, as it were. Such phenomena always occur in physics when objects can be described as a wave in one case, and as a particle in another.

Simulating such quantum walks could bring physicists new insights, for example into how electrons travel from one atom to the next. Up to now, physicists were only able to simulate them in one dimension, i.e. with two decision options. However, researchers at the Max Planck Institute for the Science of Light and the University of Paderborn, together with colleagues from the Czech Republic, Hungary, and Australia, have now realized, for the first time, a quantum walk in two dimensions: they sent a short light pulse on its travels in a network of optical fibers with 169 nodes. At a node, chance decides in which of four possible directions the pulse will continue: forwards, backwards, to the right or to the left. In the next step, the light pulse can therefore move from a white square to one of four adjacent black squares, like a piece on a chessboard. The researchers conducted the experiment with twelve steps. They then measured the probability of the light pulse arriving at each of the 169 possible positions at the end of its journey.
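The article does not spell out how the figure of 169 arises, but it is consistent with simple counting on an idealized grid. The sketch below assumes a walker that starts at the origin and takes twelve steps, each in one of four directions, and counts the distinct end positions; `MOVES` and `reachable` are names invented for this illustration.

```python
# Assumed idealized grid: forwards, backwards, right, left.
MOVES = [(1, 0), (-1, 0), (0, 1), (0, -1)]

def reachable(steps):
    """Set of positions a walker can occupy after exactly `steps` moves."""
    frontier = {(0, 0)}
    for _ in range(steps):
        frontier = {(x + dx, y + dy) for (x, y) in frontier for dx, dy in MOVES}
    return frontier

positions = reachable(12)
print(len(positions))  # 169

# Chessboard picture: after an even number of steps the walker is always
# back on a square of the starting color (x + y is even).
print(all((x + y) % 2 == 0 for x, y in positions))  # True
```

In general, a walker of this kind can end on (n + 1)² squares after n steps, which gives 13² = 169 for twelve steps.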

“Light waves make it possible to simulate the behavior of quantum objects and their random motions, something which can be difficult to achieve in experiments otherwise,” explains Andreas Schreiber, who put the project into experimental practice as part of his doctoral work. Since the light takes several paths simultaneously in the superposition state, the experiment can be interpreted in two ways. One way is that a quantum walker walks through a network of paths with four possible decisions at every crossroads; alternatively, it can be understood as two quantum walkers who walk through a network with two possible decisions at every crossing.
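The equivalence of the two readings can be checked numerically in a simple special case. The sketch below is an illustration under assumptions, not the experimental model: the four-sided coin is taken to be a pair of independent Hadamard coins, and each step moves the walker diagonally on the grid (x and y each change by one), which is the four-direction chessboard walk rotated by 45 degrees. Under these assumptions the two-dimensional distribution is the product of two one-dimensional ones, which is what two non-interacting walkers on a line would produce.

```python
import math
from collections import defaultdict

H = 1 / math.sqrt(2)

def hadamard(old, new):
    """Entry of the Hadamard matrix: negative only for old = new = 1."""
    return -H if (old == 1 and new == 1) else H

def walk_1d(steps):
    """One walker on a line; returns {x: probability}."""
    amp = {(0, 0): 1.0}  # keyed by (position, coin)
    for _ in range(steps):
        nxt = defaultdict(float)
        for (x, c), a in amp.items():
            for new in (0, 1):
                nxt[(x + (1 if new else -1), new)] += hadamard(c, new) * a
        amp = dict(nxt)
    prob = defaultdict(float)
    for (x, _), a in amp.items():
        prob[x] += a * a
    return dict(prob)

def walk_2d(steps):
    """One walker on a grid with a four-sided coin built from two Hadamard coins."""
    amp = {(0, 0, 0, 0): 1.0}  # keyed by (x, y, coin_x, coin_y)
    for _ in range(steps):
        nxt = defaultdict(float)
        for (x, y, cx, cy), a in amp.items():
            for nx in (0, 1):
                for ny in (0, 1):
                    weight = hadamard(cx, nx) * hadamard(cy, ny)
                    key = (x + (1 if nx else -1), y + (1 if ny else -1), nx, ny)
                    nxt[key] += weight * a
        amp = dict(nxt)
    prob = defaultdict(float)
    for (x, y, _, _), a in amp.items():
        prob[(x, y)] += a * a
    return dict(prob)

p1, p2 = walk_1d(4), walk_2d(4)
worst = max(abs(p - p1[x] * p1[y]) for (x, y), p in p2.items())
print(worst < 1e-12)  # True: one walker on a grid looks like two walkers on a line
```

A coin that mixes the two directions, or any coupling that depends on both coordinates, would break this factorization; that is the regime in which the two walkers can be said to influence each other.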

What is the connection between classical physics and quantum physics?

The setup can also be used to gain insight into whether and how two objects mutually influence each other on their quantum walk. The properties of the objects can even be controlled via the light pulses: if the light pulses are set up in such a way that they behave like two indistinguishable walkers, for example, there is a high probability of them staying together if they meet en route. For certain atoms, this would mean that a reciprocal influence leads to the formation of a molecule.

“The experiment shows that nature provides us with tricks that make it easier to understand complex quantum mechanical systems,” says Christine Silberhorn, who headed the Integrated Quantum Optics research group at the Max Planck Institute for the Science of Light in Erlangen until recently and is currently establishing a new group in this field of work at the University of Paderborn. And the experimental setup has even more in store: it could also be used to investigate the behavior of other quantum objects, such as electrons. The physicists could also experiment with individual light particles, photons, instead of light pulses, or with a larger number of quantum walkers. In this way they could investigate the interplay between highly complex quantum mechanical systems and further clarify the connections between classical physics and quantum physics. And since a quantum walker is present at many locations simultaneously, he could also search all these places at the same time. On this principle, databases could one day be searched in a quantum computer of the future, much faster than is possible with today’s computer technology.

Reference: “A 2D Quantum Walk Simulation of Two-Particle Dynamics” by Andreas Schreiber, Aurél Gábris, Peter P. Rohde, Kaisa Laiho, Martin Štefaňák, Václav Potoček, Craig Hamilton, Igor Jex and Christine Silberhorn, 8 March 2012, Science.

DOI: 10.1126/science.1218448
