A new AI breakthrough helps scientists uncover the hidden forces shaping the world around us.

Engineers at the University of Pennsylvania have developed a new AI-based technique that could help scientists solve some of the most difficult mathematical problems used to study the natural world.

The approach, called “Mollifier Layers,” is designed to handle inverse partial differential equations (PDEs), a class of equations that allows researchers to work backward from visible patterns to uncover the hidden processes that created them. These problems appear in fields ranging from genetics and materials science to weather forecasting.

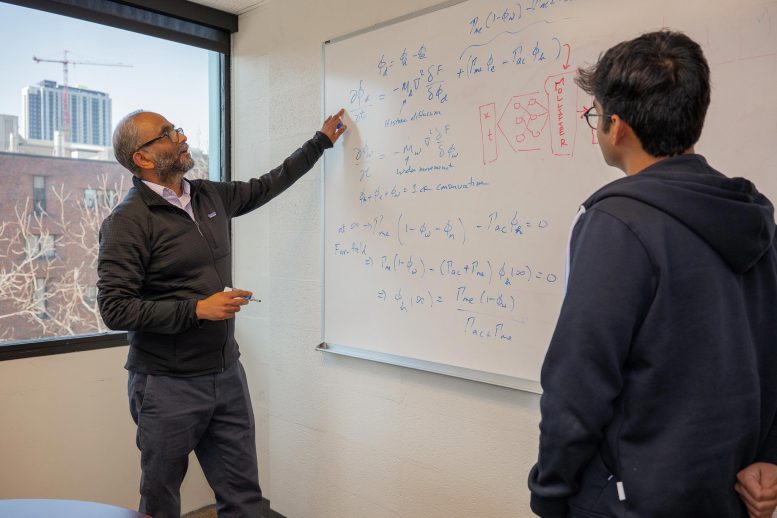

“Solving an inverse problem is like looking at ripples in a pond and working backward to figure out where the pebble fell,” says Vivek Shenoy, Eduardo D. Glandt President’s Distinguished Professor in Materials Science and Engineering (MSE) and senior author of a study published in Transactions on Machine Learning Research (TMLR), which will be presented at the Conference on Neural Information Processing Systems (NeurIPS 2026). “You can see the effects clearly, but the real challenge is inferring the hidden cause.”

Rather than relying on larger and more power-hungry AI systems, the researchers focused on improving the mathematics behind the process itself.

“Modern AI often advances by scaling up computation,” says Vinayak Vinayak, a doctoral candidate in MSE and co-first author of the study. “But some scientific challenges require better mathematics, not just more compute.”

Why Inverse PDEs Are So Difficult

Differential equations help scientists describe how things change over time. They are used to model everything from population growth and chemical reactions to heat transfer.

Partial differential equations, or PDEs, take this a step further by describing changes across both time and space. Researchers use them to study highly complex systems such as weather patterns, material behavior, and even the organization of DNA inside cells.

Inverse PDEs are especially challenging because they reverse the usual process. Instead of starting with known rules to predict outcomes, scientists begin with observed data and try to uncover the hidden dynamics responsible for it.
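To make the forward/inverse distinction concrete, here is a minimal sketch in Python with NumPy. It is not taken from the study: it uses the one-dimensional heat equation, u_t = D u_xx, as a stand-in, and the function names `simulate_heat` and `infer_diffusivity` are hypothetical. The forward problem computes the pattern from a known diffusivity D; the inverse problem recovers D from the observed pattern alone.

```python
import numpy as np

def simulate_heat(D, x, t, k=1.0):
    """Forward problem: exact solution of u_t = D * u_xx for a single sine mode."""
    X, T = np.meshgrid(x, t, indexing="ij")
    return np.exp(-D * k**2 * T) * np.sin(k * X)

def infer_diffusivity(u, x, t):
    """Inverse problem: least-squares estimate of D from observed u(x, t)."""
    dx, dt = x[1] - x[0], t[1] - t[0]
    u_t = np.gradient(u, dt, axis=1)                            # time derivative
    u_xx = np.gradient(np.gradient(u, dx, axis=0), dx, axis=0)  # second space derivative
    # Drop points near the boundary, where one-sided differences are least accurate.
    a = u_xx[2:-2, 1:-1].ravel()
    b = u_t[2:-2, 1:-1].ravel()
    # Minimise ||b - D * a||^2 over the single unknown D.
    return float(a @ b / (a @ a))

x = np.linspace(0.0, 2 * np.pi, 201)
t = np.linspace(0.0, 1.0, 201)
true_D = 0.5                                  # the "hidden cause" (assumed value)
observed = simulate_heat(true_D, x, t)        # the "visible ripples"
estimated_D = infer_diffusivity(observed, x, t)
print(f"true D = {true_D}, estimated D = {estimated_D:.4f}")
```

With clean data the estimate lands very close to the true value. The difficulty the researchers describe arises when the observations are noisy and the equation involves higher-order derivatives.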

“For years, we’ve used these equations to study how chromatin, which is the folded state of DNA inside the nucleus, organizes itself inside living cells,” says Shenoy. “But we kept running into the same problem: We could see the structures and model their formation, but we could not reliably infer the epigenetic processes driving this system, namely the chemical changes that help control which genes are active. The more we tried to optimize the existing approach, the clearer it became that the mathematics itself needed to change.”

A New Way for AI To Handle Complex Equations

At the heart of the challenge is differentiation, a mathematical process used to measure change. Simple derivatives show how quickly something increases or decreases, while higher-order derivatives capture more complicated patterns.

Most AI systems that tackle inverse PDEs rely on a process called recursive automatic differentiation. This repeatedly calculates changes throughout a neural network, which forms the backbone of modern AI models.

However, that approach becomes unstable when dealing with higher-order systems or noisy data. It can also require enormous amounts of computing power.

The researchers compare the issue to repeatedly zooming in on a jagged line. Each step magnifies imperfections and noise, making the final calculation less reliable. The team realized they needed a way to smooth the data before measuring those changes.
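The magnification effect is easy to reproduce. The sketch below is an illustration only: it uses plain finite differences from NumPy rather than the automatic differentiation discussed in the article, and the noise level is an arbitrary assumption. It differentiates a slightly noisy sine wave three times and measures how far each result drifts from the exact derivative.

```python
import numpy as np

rng = np.random.default_rng(0)
x = np.linspace(0.0, 2 * np.pi, 1001)
dx = x[1] - x[0]

# A smooth signal with a small amount of measurement noise (assumed level: 1e-3).
noisy = np.sin(x) + 1e-3 * rng.standard_normal(x.size)

# Differentiate repeatedly; each pass divides the noise by a small step size.
d1 = np.gradient(noisy, dx)
d2 = np.gradient(d1, dx)
d3 = np.gradient(d2, dx)

def rms(err):
    """Root-mean-square error."""
    return float(np.sqrt(np.mean(err**2)))

interior = slice(5, -5)  # ignore edge points, which use one-sided differences
err1 = rms((d1 - np.cos(x))[interior])   # exact first derivative is cos(x)
err2 = rms((d2 + np.sin(x))[interior])   # exact second derivative is -sin(x)
err3 = rms((d3 + np.cos(x))[interior])   # exact third derivative is -cos(x)

print(f"RMS error, 1st derivative: {err1:.3g}")
print(f"RMS error, 2nd derivative: {err2:.3g}")
print(f"RMS error, 3rd derivative: {err3:.3g}")
```

Each additional derivative inflates the error by orders of magnitude, even though the noise in the original signal is barely visible.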

The Science Behind “Mollifier Layers”

Their solution was inspired by “mollifiers,” mathematical tools first described in the 1940s by German American mathematician Kurt Otto Friedrichs, who later received the National Medal of Science. Mollifiers are designed to smooth rough or noisy functions.
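For readers who want the mathematics, the standard textbook mollifier is a smooth bump that vanishes outside a small ball. The definition below is the classical one and is given as background; the specific kernel used in the study may differ. Here C is the constant that makes the bump integrate to one, n is the dimension, and ε sets the smoothing width.

```latex
\begin{aligned}
\varphi(x) &=
\begin{cases}
C \exp\!\left(-\dfrac{1}{1 - |x|^2}\right), & |x| < 1,\\[4pt]
0, & |x| \ge 1,
\end{cases}
\qquad
\varphi_\varepsilon(x) = \varepsilon^{-n}\,\varphi\!\left(\frac{x}{\varepsilon}\right),\\[6pt]
f_\varepsilon(x) &= (f * \varphi_\varepsilon)(x)
= \int_{\mathbb{R}^n} f(y)\,\varphi_\varepsilon(x - y)\,dy,
\qquad
\partial^\alpha f_\varepsilon = f * \partial^\alpha \varphi_\varepsilon .
\end{aligned}
```

The last identity is the key property: derivatives of the smoothed function can be computed by differentiating the known, smooth kernel instead of the rough data.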

By adapting this idea for AI, the team created a “mollifier layer” that smooths signals before the system calculates derivatives.
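The general idea of smoothing before differentiating can be sketched in a few lines of NumPy. This is an illustration of classical mollification on a one-dimensional grid, not the authors' neural network layer; the helper `mollifier_kernels`, the smoothing width `eps`, and the noise level are all assumptions chosen for the example. The derivative is obtained by convolving the noisy signal with the derivative of the bump kernel, so the data itself is never differenced directly.

```python
import numpy as np

def mollifier_kernels(eps, dx):
    """Sample the standard bump mollifier of width eps, and its derivative, on a grid."""
    m = int(np.ceil(eps / dx))
    s = np.arange(-m, m + 1) * dx / eps          # rescaled offsets, support is |s| < 1
    inside = np.abs(s) < 1.0
    phi = np.zeros_like(s)
    dphi = np.zeros_like(s)
    w = 1.0 - s[inside] ** 2
    phi[inside] = np.exp(-1.0 / w)
    # Chain rule: d/dx phi(x/eps) = phi(s) * (-2 s / (1 - s^2)^2) / eps
    dphi[inside] = phi[inside] * (-2.0 * s[inside] / w**2) / eps
    norm = phi.sum() * dx                        # make the kernel integrate to one
    return phi / norm, dphi / norm

rng = np.random.default_rng(0)
x = np.linspace(0.0, 2 * np.pi, 1001)
dx = x[1] - x[0]
noisy = np.sin(x) + 1e-3 * rng.standard_normal(x.size)

phi, dphi = mollifier_kernels(eps=0.2, dx=dx)

raw = np.gradient(noisy, dx)                             # differentiate the noisy data directly
mollified = np.convolve(noisy, dphi, mode="same") * dx   # (f * phi_eps)' = f * phi_eps'

def rms(err):
    return float(np.sqrt(np.mean(err**2)))

interior = slice(len(phi), -len(phi))    # skip edges affected by zero padding
err_raw = rms((raw - np.cos(x))[interior])
err_mollified = rms((mollified - np.cos(x))[interior])
print(f"RMS error, direct difference:    {err_raw:.3g}")
print(f"RMS error, mollified derivative: {err_mollified:.3g}")
```

The choice of `eps` is a trade-off: a wider kernel suppresses more noise but also blurs genuine fine detail in the signal.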

“We initially assumed the issue had to do with the neural network’s architecture,” says Ananyae Kumar Bhartari, a graduate of Penn Engineering’s Scientific Computing master’s program and the paper’s other co-first author. “But, after carefully adjusting the network, we eventually realized the bottleneck was recursive automatic differentiation itself.”

According to the researchers, the new layer dramatically reduced noise and improved how computational cost scales. “That let us solve these equations more reliably, without the same computational burden,” says Bhartari.

Understanding DNA and Disease

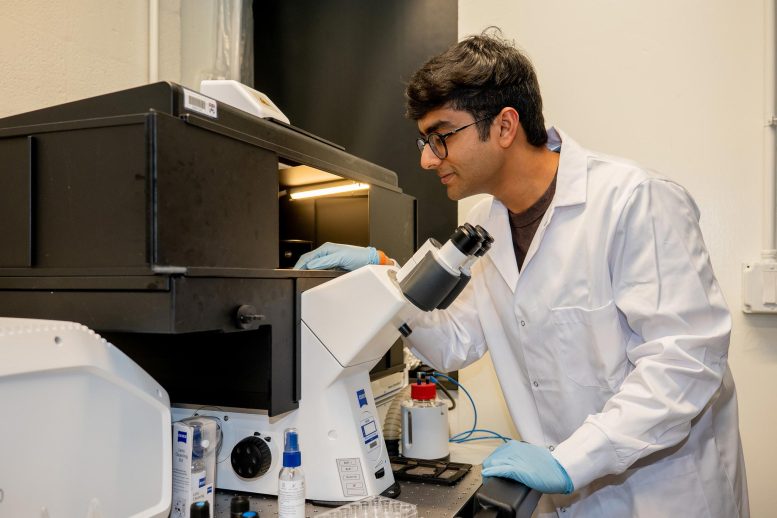

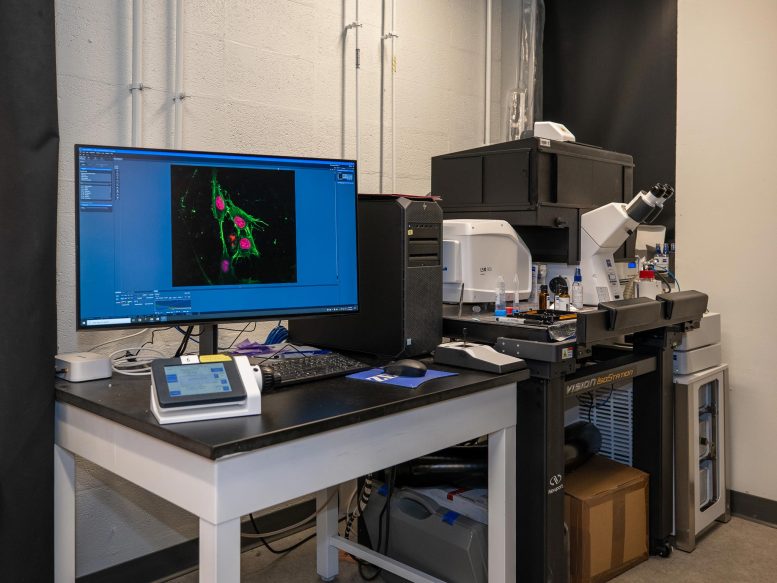

One of the first applications for the new method involves chromatin, the tightly packed combination of DNA and proteins inside cells that controls access to genetic information.

The Shenoy Lab studies tiny chromatin domains, about 100 nanometers across, that help regulate gene activity.

“These domains are just 100 nanometers in size,” says Shenoy, “but because accessibility determines gene expression, and gene expression governs cell identity, function, aging, and disease, these domains play a critical role in biology and health.”

The new AI framework could help scientists infer the epigenetic reaction rates that drive these changes, revealing how chromatin evolves over time and influences gene expression.

“If we can track how these reaction rates evolve during aging, cancer, or development,” adds Vinayak, “this creates the potential for new therapies: If reaction rates control chromatin organization and cell fate, then altering those rates could redirect cells to desired states.”

Potential Uses Beyond Biology

The researchers believe mollifier layers could be useful in many other areas of science as well. Complex systems in materials science, fluid mechanics, and scientific machine learning often involve noisy data and higher-order equations.

The framework may provide a more stable and computationally efficient way to uncover hidden parameters in these systems.

“Ultimately, the goal is to move from observing complex patterns to quantitatively uncovering the rules that generate them,” says Shenoy. “If you understand the rules that govern a system, you now have the possibility of changing it.”

Reference: “Mollifier Layers: Enabling Efficient High-Order Derivatives in Inverse PDE Learning” by Vinayak Vinayak, Ananyae Kumar Bhartari and Vivek Shenoy, 9 March 2026, TMLR.

Submission Number: 6096

This study was conducted at the University of Pennsylvania School of Engineering and Applied Science and supported by National Cancer Institute (NCI) Award U54CA261694 (V.B.S.); National Science Foundation (NSF) Center for Engineering Mechanobiology (CEMB) Grant CMMI-154857 (V.B.S.); NSF Grant DMS-2347834 (V.B.S.); National Institute of Biomedical Imaging and Bioengineering (NIBIB) Awards R01EB017753 (V.B.S.) and R01EB030876 (V.B.S.); and National Institute of General Medical Sciences (NIGMS) Award R01GM155943 (V.B.S.).

