Researchers have developed a deep-learning-based surrogate model that dramatically speeds up simulations of nonlinear optical processes used in advanced laser systems.

Simulating the complex optical behavior behind ultrafast laser systems requires enormous computing power, creating a major challenge for experiments that depend on rapid feedback.

Researchers from Stanford University, the University of California, Los Angeles (UCLA), and SLAC National Accelerator Laboratory have now developed a deep learning surrogate model that dramatically speeds up these simulations while still maintaining high accuracy across a wide variety of laser pulse shapes.

Nonlinear Optics and X-Ray Production

The research focuses on second-order nonlinear optics, also known as χ² processes. In these interactions, light waves exchange energy inside specially designed crystals, producing new frequencies and customized pulse shapes.

These processes are critical in particle accelerator facilities. At SLAC’s upgraded Linac Coherent Light Source (LCLS-II), infrared laser pulses are first converted into green light and then into ultraviolet (UV) light. The UV pulse strikes a cathode, releasing an electron bunch that is later accelerated and shaped to generate powerful X-ray pulses.
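
The color changes in this chain follow from photon energy conservation. In sum-frequency generation the output frequency is the sum of the input frequencies, so 1/λ_out = 1/λ₁ + 1/λ₂, and second-harmonic generation is the special case of two identical inputs. In the sketch below, the 1030 nm infrared wavelength is an assumed value for illustration, since the article does not state it. The green-to-UV step is modeled as mixing two green pulses.

```python
# Photon energy conservation in chi(2) mixing: frequencies add, so
# 1/lambda_out = 1/lambda_1 + 1/lambda_2 (vacuum wavelengths).

def sum_frequency_wavelength(lambda1_nm: float, lambda2_nm: float) -> float:
    """Output vacuum wavelength (nm) for sum-frequency generation of two inputs."""
    return 1.0 / (1.0 / lambda1_nm + 1.0 / lambda2_nm)


def second_harmonic_wavelength(lambda_nm: float) -> float:
    """Second-harmonic generation is the degenerate case of SFG (both inputs equal)."""
    return sum_frequency_wavelength(lambda_nm, lambda_nm)


if __name__ == "__main__":
    ir = 1030.0                                   # assumed infrared wavelength, nm
    green = second_harmonic_wavelength(ir)        # IR -> green
    uv = sum_frequency_wavelength(green, green)   # green + green -> UV
    print(f"IR {ir:.1f} nm -> green {green:.1f} nm -> UV {uv:.2f} nm")
```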

The timing and shape of the UV pulse directly affect the behavior of the electron bunch and the quality of the resulting X-rays used for scientific experiments. The new surrogate model for this nonlinear χ² frequency conversion process was reported in Advanced Photonics.

Traditional simulations rely on solving the nonlinear Schrödinger equation with the split-step Fourier method (SSFM). Although highly accurate, the approach is computationally expensive because it switches between time-domain and frequency-domain calculations at every propagation step. In full laser simulations, this nonlinear conversion stage accounts for roughly 95 percent of the total runtime.
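
The domain switching can be made concrete with a minimal, textbook symmetric split-step Fourier solver for the scalar NLSE, ∂A/∂z = -i(β₂/2)∂²A/∂T² + iγ|A|²A. This is an illustrative sketch, not the researchers' code. The χ² simulations in the paper involve several coupled fields, and the parameter names (beta2, gamma) and normalized units used here are assumptions. Each step applies dispersion in the frequency domain and the nonlinearity in the time domain, so every step costs two forward and two inverse FFTs.

```python
import numpy as np


def ssfm_nlse(a0, dt, beta2, gamma, length, n_steps):
    """Propagate a complex envelope a0(T) over `length` with the symmetric split-step method."""
    n = a0.size
    omega = 2 * np.pi * np.fft.fftfreq(n, d=dt)   # angular-frequency grid
    h = length / n_steps
    # Half-step dispersion propagator: d^2/dT^2 -> -omega^2 in the frequency domain.
    half_disp = np.exp(0.5j * beta2 * omega**2 * (h / 2))
    a = a0.astype(complex)
    for _ in range(n_steps):
        a = np.fft.ifft(half_disp * np.fft.fft(a))         # dispersion, h/2 (frequency domain)
        a = a * np.exp(1j * gamma * np.abs(a) ** 2 * h)    # Kerr nonlinearity, h (time domain)
        a = np.fft.ifft(half_disp * np.fft.fft(a))         # dispersion, h/2 (frequency domain)
    return a
```

As a quick sanity check, with beta2 = -1 and gamma = 1 in normalized units an input sech(T) pulse is a fundamental soliton, so the solver should return essentially the same intensity profile after propagation.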

Deep Learning Replaces the Slowest Step

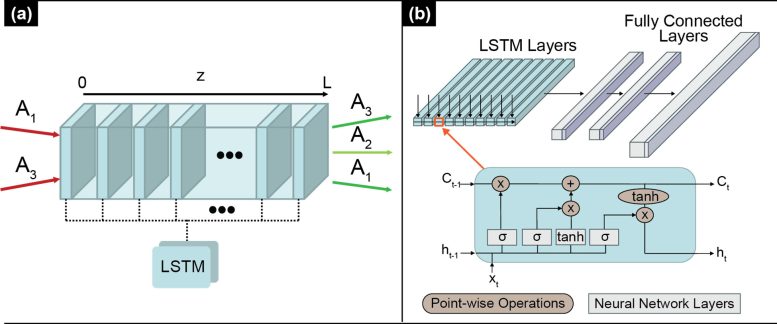

To address this bottleneck, the researchers adapted long short-term memory (LSTM) neural networks, a type of recurrent neural network previously used for modeling pulse propagation in fiber optics. The new system was designed specifically for the more complex χ² environment involving multiple interacting optical fields.
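
The recurrent idea can be sketched as follows. An LSTM cell carries a hidden state along the propagation direction, and at each step it outputs the field representation for the next slice of the crystal. This NumPy version is a hypothetical illustration with random, untrained weights. The class name LSTMStepper, the layer sizes, and the choice to feed each prediction back in as the next input are assumptions, not details taken from the paper.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class LSTMStepper:
    """Hypothetical LSTM surrogate that predicts the field at step z+1 from step z.

    Field states are real vectors (e.g. concatenated real/imag spectral bins).
    Weights are random, i.e. untrained; training is out of scope for this sketch.
    """

    def __init__(self, n_features, n_hidden, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(n_features + n_hidden)
        # One stacked weight matrix for the input, forget, candidate and output gates.
        self.w = rng.normal(0.0, scale, (n_features + n_hidden, 4 * n_hidden))
        self.b = np.zeros(4 * n_hidden)
        # Linear read-out from the hidden state back to the field representation.
        self.w_out = rng.normal(0.0, 1.0 / np.sqrt(n_hidden), (n_hidden, n_features))
        self.n_hidden = n_hidden

    def step(self, x, h, c):
        """One LSTM cell update for a batch: x is (B, F); h and c are (B, H)."""
        gates = np.concatenate([x, h], axis=1) @ self.w + self.b
        i, f, g, o = np.split(gates, 4, axis=1)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        return h, c

    def rollout(self, x0, n_steps):
        """Autoregressively predict n_steps propagation steps for a batch of inputs."""
        batch = x0.shape[0]
        h = np.zeros((batch, self.n_hidden))
        c = np.zeros((batch, self.n_hidden))
        x, outputs = x0, []
        for _ in range(n_steps):
            h, c = self.step(x, h, c)
            x = h @ self.w_out            # predicted field at the next step
            outputs.append(x)
        return np.stack(outputs, axis=1)  # shape (B, n_steps, F)
```

Because every operation acts on a leading batch dimension, many pulses can be advanced with a single matrix multiply per step. This property is what makes batched inference on a GPU efficient.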

The team tested the model on noncollinear sum-frequency generation (SFG), a process in which three coupled optical fields evolve together as they propagate. Running it across many different pulse conditions provided a demanding benchmark for evaluating performance.
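
To see what "three coupled fields" means in practice, you can integrate the textbook plane-wave coupled-amplitude equations for sum-frequency generation directly. In this sketch, the two input amplitudes A1 and A2 and the sum-frequency amplitude A3 exchange energy through a single coupling constant kappa, with phase mismatch dk. The sketch deliberately omits the pulse duration, dispersion, and noncollinear geometry of the published benchmark. The photon-flux normalization and the parameter values are illustrative assumptions.

```python
import numpy as np


def sfg_rhs(z, a, kappa, dk):
    """Right-hand side of the plane-wave SFG coupled-amplitude equations.

    a = [A1, A2, A3]. Amplitudes are photon-flux normalized, so one coupling
    constant kappa serves all three fields (a simplifying assumption).
    """
    a1, a2, a3 = a
    phase = np.exp(1j * dk * z)
    return np.array([
        1j * kappa * a3 * np.conj(a2) * np.conj(phase),  # dA1/dz
        1j * kappa * a3 * np.conj(a1) * np.conj(phase),  # dA2/dz
        1j * kappa * a1 * a2 * phase,                    # dA3/dz
    ])


def propagate_sfg(a0, kappa, dk, length, n_steps):
    """Fixed-step fourth-order Runge-Kutta integration over the crystal length."""
    h = length / n_steps
    a = np.asarray(a0, dtype=complex)
    z = 0.0
    for _ in range(n_steps):
        k1 = sfg_rhs(z, a, kappa, dk)
        k2 = sfg_rhs(z + h / 2, a + h / 2 * k1, kappa, dk)
        k3 = sfg_rhs(z + h / 2, a + h / 2 * k2, kappa, dk)
        k4 = sfg_rhs(z + h, a + h * k3, kappa, dk)
        a = a + h / 6 * (k1 + 2 * k2 + 2 * k3 + k4)
        z += h
    return a
```

With this normalization, the Manley-Rowe relations |A1|² + |A3|² = const and |A2|² + |A3|² = const should hold along the crystal. This makes them a convenient correctness check.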

One important design choice was to keep the calculations entirely within a compressed frequency-domain representation. By avoiding repeated transformations between domains, the model significantly reduced computational cost.
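
The article does not explain how the compressed frequency-domain representation is built. One simple possibility, assumed here purely for illustration, is to keep only the spectral bins inside a window where the pulse has energy. The real and imaginary parts of those bins are then stored as a real feature vector. The function names compress_spectrum and decompress_spectrum are hypothetical.

```python
import numpy as np


def compress_spectrum(field_t: np.ndarray, keep: np.ndarray) -> np.ndarray:
    """Time-domain field -> real feature vector holding only the kept spectral bins."""
    kept = np.fft.fft(field_t)[keep]          # bins outside the window are dropped
    return np.concatenate([kept.real, kept.imag])


def decompress_spectrum(features: np.ndarray, keep: np.ndarray, n: int) -> np.ndarray:
    """Inverse of compress_spectrum: zero-fill the dropped bins, return to the time domain."""
    m = features.size // 2
    spec = np.zeros(n, dtype=complex)
    spec[keep] = features[:m] + 1j * features[m:]
    return np.fft.ifft(spec)


if __name__ == "__main__":
    n = 2048
    t = np.arange(n) - n / 2
    pulse = np.exp(-(t / 40.0) ** 2)                           # smooth Gaussian envelope
    keep = np.flatnonzero(np.abs(np.fft.fftfreq(n)) < 64 / n)  # 127 of 2048 bins
    feats = compress_spectrum(pulse, keep)
    err = np.max(np.abs(decompress_spectrum(feats, keep, n) - pulse))
    print(f"{feats.size} real features instead of {2 * n}; max error {err:.1e}")
```

For a band-limited pulse, the round trip is exact. In a scheme like this, a network that steps the short feature vector forward would not need to transform back to the time domain between steps.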

Millisecond Simulations With High Accuracy

The surrogate model successfully reproduced both temporal and spectral pulse profiles under a wide range of conditions, including cases with strong phase modulation and pronounced spectral holes.
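
Checking both profiles matters because each view catches different errors. In the sketch below, a prediction with the correct spectral intensity but the wrong spectral phase scores zero spectral error yet a nonzero temporal error. The metric used here, a relative L2 error on intensity, is an assumption and not necessarily the paper's figure of merit.

```python
import numpy as np


def normalized_intensity_error(pred, ref):
    """Relative L2 error between two intensity profiles |field|^2."""
    ip, ir = np.abs(pred) ** 2, np.abs(ref) ** 2
    return np.linalg.norm(ip - ir) / np.linalg.norm(ir)


def temporal_and_spectral_errors(pred_spec, ref_spec):
    """Compare predicted and reference spectral fields in both domains.

    Temporal profiles are recovered with an inverse FFT, so spectral-phase
    errors (invisible in the spectral intensity) show up in the temporal error.
    """
    spectral = normalized_intensity_error(pred_spec, ref_spec)
    temporal = normalized_intensity_error(np.fft.ifft(pred_spec), np.fft.ifft(ref_spec))
    return temporal, spectral


if __name__ == "__main__":
    w = np.fft.fftfreq(256)
    ref = np.exp(-(w / 0.05) ** 2).astype(complex)   # transform-limited reference
    pred = ref * np.exp(1j * 200 * w**2)             # same spectrum, added chirp
    print(temporal_and_spectral_errors(pred, ref))
```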

Using batched GPU inference, the average simulation time dropped to only a few milliseconds per instance, making the system orders of magnitude faster than conventional techniques. The researchers also found that when the model accurately predicted the main SFG output, the secondary optical fields closely matched traditional simulations as well.

The broader goal is to integrate these surrogate models directly into operating laser systems. The modular design allows individual physical processes to be represented by separate trained surrogate blocks, creating predictive models that can work alongside real-time experiments.
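
One way to picture the modular design is as function composition. Each physical process is a block that maps one field representation to the next, and an end-to-end model is the chain of blocks. The chain helper and the stand-in blocks below are hypothetical. The stand-ins are random linear maps used in place of trained surrogates.

```python
from typing import Callable, Sequence

import numpy as np

# A block maps one field representation (e.g. spectral features) to the next.
Block = Callable[[np.ndarray], np.ndarray]


def chain(blocks: Sequence[Block]) -> Block:
    """Compose per-process surrogate blocks into a single end-to-end model."""
    def model(x: np.ndarray) -> np.ndarray:
        for block in blocks:   # apply the processes in physical order
            x = block(x)
        return x
    return model


# Hypothetical stand-ins for trained surrogates: fixed random linear maps.
rng = np.random.default_rng(0)
w_shg = rng.normal(size=(8, 8))
w_sfg = rng.normal(size=(8, 8))


def shg_block(x: np.ndarray) -> np.ndarray:
    return x @ w_shg   # placeholder for an IR -> green surrogate


def sfg_block(x: np.ndarray) -> np.ndarray:
    return x @ w_sfg   # placeholder for a green -> UV surrogate


end_to_end = chain([shg_block, sfg_block])
```

Because a block is just a callable, a conventional solver could in principle fill any slot where a surrogate is not yet trained or trusted.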

In the future, combining fast machine learning surrogates with live experimental systems could support digital twins, adaptive control methods, and tighter integration with diagnostic tools across many types of laser-driven research facilities.

Reference: “Deep learning-assisted modeling for χ(2) nonlinear optics” by Jack Hirschman, Erfan Abedi, Minyang Wang, Hao Zhang, Abhimanyu Borthakur, Justin Baker, Andrea L. Bertozzi, Randy Lemons and Sergio Carbajo, 6 May 2026, Advanced Photonics.

DOI: 10.1117/1.AP.8.3.036004
