MIT researchers develop a comfortable, form-fitting fabric that accurately recognizes its wearer’s activities, like walking, running, and jumping.

Using a novel fabrication process, scientists at MIT have produced smart textiles that snugly conform to the body so they can precisely sense the wearer’s posture and motions.

By incorporating a special type of plastic yarn and using heat to slightly melt it — a process known as thermoforming — the researchers were able to significantly improve the precision of pressure sensors woven into multilayered knit textiles, which they call 3DKnITS.

Using this process, they created a “smart” shoe and mat, and then developed a hardware and software system to measure and interpret data from the pressure sensors in real time. The machine-learning system predicted motions and yoga poses performed by an individual standing on the smart textile mat with about 99 percent accuracy.

Taking advantage of digital knitting technology, their fabrication process enables rapid prototyping and can be easily scaled up for large-scale manufacturing, says Irmandy Wicaksono, a research assistant in the MIT Media Lab and lead author of a paper presenting 3DKnITS.

Applications in Healthcare and Rehabilitation

The technique could have many applications, especially in health care and rehabilitation. For example, it could be used to produce smart shoes that track the gait of someone who is learning to walk again after an injury, or socks that monitor pressure on a diabetic patient’s foot to prevent the formation of ulcers.

“With digital knitting, you have this freedom to design your own patterns and also integrate sensors within the structure itself, so it becomes seamless and comfortable, and you can develop it based on the shape of your body,” Wicaksono says.

He wrote the paper with MIT undergraduate students Peter G. Hwang, Samir Droubi, and Allison N. Serio through the Undergraduate Research Opportunities Program; Franny Xi Wu, a recent graduate of Wellesley College; Wei Yan, assistant professor at the Nanyang Technological University; and senior author Joseph A. Paradiso, the Alexander W. Dreyfoos Professor and director of the Responsive Environments group within the Media Lab. The research will be presented at the IEEE Engineering in Medicine and Biology Society Conference.

“Some of the early pioneering work on smart fabrics happened at the Media Lab in the late ’90s. The materials, embeddable electronics, and fabrication machines have advanced enormously since then,” Paradiso says. “It’s a great time to see our research returning to this area, for example through projects like Irmandy’s — they point at an exciting future where sensing and functions diffuse more fluidly into materials and open up enormous possibilities.”

Knitting Know-How

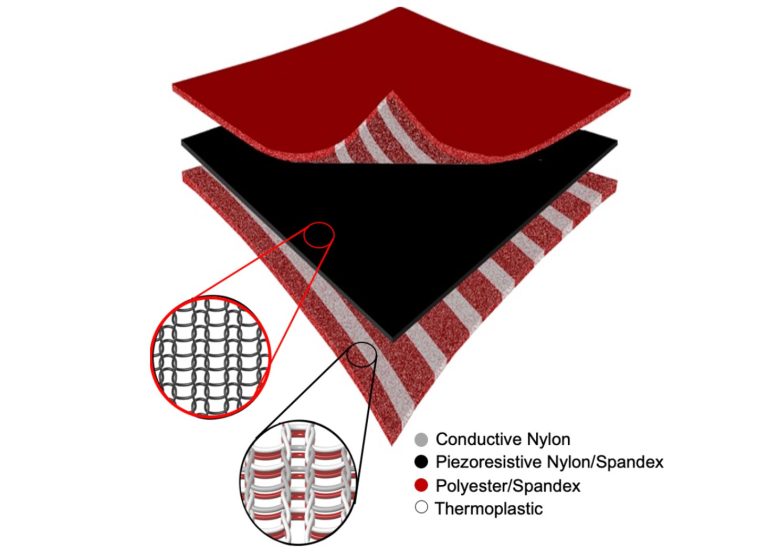

To produce a smart textile, the researchers use a digital knitting machine that knits together layers of fabric from rows of standard and functional yarn. The multilayer knit textile consists of two layers of conductive knit sandwiched around a piezoresistive knit, which changes its resistance when squeezed. Following a pattern, the machine stitches the functional yarn throughout the textile in horizontal rows and vertical columns. Where the functional fibers intersect, they create a pressure sensor, Wicaksono explains.
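The sensing principle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the researchers' readout design: it assumes each crossing is read through a simple voltage divider with a fixed reference resistor, and the names `sensor_resistance`, `crossings`, `V_IN`, and `R_REF`, along with their values, are hypothetical.

```python
# Hypothetical circuit values: the article does not specify the readout electronics.
V_IN = 3.3         # volts across the divider (assumed)
R_REF = 10_000.0   # fixed reference resistor in ohms (assumed)


def sensor_resistance(v_out: float, v_in: float = V_IN, r_ref: float = R_REF) -> float:
    """Recover a crossing's resistance from a voltage-divider reading.

    Assumed wiring: v_in -> sensor crossing -> measurement node -> r_ref -> ground,
    so v_out = v_in * r_ref / (r_ref + r_sensor).
    """
    if not 0.0 < v_out < v_in:
        raise ValueError("v_out must lie strictly between 0 and v_in")
    return r_ref * (v_in - v_out) / v_out


def crossings(n_rows: int, n_cols: int) -> list[tuple[int, int]]:
    """Every (row, column) intersection of the two conductive layers is one sensor."""
    return [(r, c) for r in range(n_rows) for c in range(n_cols)]


# Illustrative 4 x 5 patch: 4 rows crossing 5 columns give 20 sensing points.
patch = crossings(4, 5)
print(len(patch))                 # 20
print(sensor_resistance(1.1))     # roughly 20000 ohms: the crossing reads about twice R_REF
```

Because every row crosses every column, the number of sensing points grows as rows times columns while the number of wires grows only as rows plus columns, which is what makes a knitted grid practical.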

But yarn is soft and pliable, so the layers shift and rub against each other when the wearer moves. This movement generates noise and variability that make the pressure sensors much less accurate.

Wicaksono came up with a solution to this problem while working in a knitting factory in Shenzhen, China, where he spent a month learning to program and maintain digital knitting machines. He watched workers making sneakers from thermoplastic yarns that start to melt when heated above 70 degrees Celsius (158 degrees Fahrenheit); this partial melting slightly hardens the textile so it can hold a precise shape.

He decided to try incorporating melting fibers and thermoforming into the smart textile fabrication process.

“The thermoforming really solves the noise issue because it hardens the multilayer textile into one layer by essentially squeezing and melting the whole fabric together, which improves the accuracy. That thermoforming also allows us to create 3D forms, like a sock or shoe, that actually fit the precise size and shape of the user,” he says.

Once he perfected the fabrication process, Wicaksono needed a system to accurately process pressure sensor data. Since the fabric is knit as a grid, he crafted a wireless circuit that scans through rows and columns on the textile and measures the resistance at each point. He designed this circuit to overcome artifacts caused by “ghosting” ambiguities, which occur when the user exerts pressure on two or more separate points simultaneously.
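To see why ghosting arises in a row-and-column scan, here is a minimal Python sketch. It is an assumption-laden illustration, not Wicaksono's circuit: the article does not say how ghosting is suppressed, so the sketch uses grounding of inactive lines, one common technique for resistive sensor matrices, and compares it with a first-order model of a naive scan that leaves inactive lines floating. The functions `scan`, `grounded_reader`, and `floating_reader` are hypothetical, and the wireless link is not modeled.

```python
import numpy as np


def scan(read, n_rows: int, n_cols: int) -> np.ndarray:
    """Row-column scan: drive one row at a time and sense every column."""
    frame = np.zeros((n_rows, n_cols))
    for r in range(n_rows):
        for c in range(n_cols):
            frame[r, c] = read(r, c)
    return frame


def grounded_reader(g: np.ndarray):
    """Idealized readout with inactive lines grounded: only crossing (r, c) contributes."""
    return lambda r, c: g[r, c]


def floating_reader(g: np.ndarray):
    """First-order model of a naive readout with inactive lines left floating.

    Besides crossing (r, c), current can sneak along row r -> column c2 ->
    row r2 -> column c through three other crossings in series. Longer
    sneak paths are ignored, so this only approximates the real network.
    """
    n_rows, n_cols = g.shape

    def read(r, c):
        total = g[r, c]
        for r2 in range(n_rows):
            for c2 in range(n_cols):
                if r2 == r or c2 == c:
                    continue
                a, b, d = g[r, c2], g[r2, c2], g[r2, c]
                if a > 0 and b > 0 and d > 0:
                    total += 1.0 / (1.0 / a + 1.0 / b + 1.0 / d)  # series conductance
        return total

    return read


# Conductance (siemens) at each crossing; three points are pressed, (1, 0) is not.
g = np.array([[1e-3, 1e-3],
              [0.0,  1e-3]])
print(scan(grounded_reader(g), 2, 2)[1, 0])   # 0.0: no phantom press
print(scan(floating_reader(g), 2, 2)[1, 0])   # about 3.3e-4: a "ghost" press appears
```

With three corners of a rectangle pressed, the naive scan reports pressure at the fourth corner even though nothing touches it. That is the ambiguity the article describes when pressure is applied at two or more points at once.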

Inspired by deep-learning techniques for image classification, Wicaksono devised a system that displays pressure sensor data as a heat map. Those images are fed to a machine-learning model, which is trained to detect the posture, pose, or motion of the user based on the heat map image.
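The researchers' model is a deep-learning image classifier, which a short example cannot reproduce. The Python sketch below keeps only the overall shape of the pipeline: each scan becomes a normalized heat map that is then classified. It swaps in a simple nearest-centroid classifier in place of the neural network. The activity labels, the 8 x 8 frame size, and the synthetic training data are all hypothetical.

```python
import numpy as np


def to_heatmap(frame: np.ndarray) -> np.ndarray:
    """Scale one frame of raw sensor readings to [0, 1] so it can be treated as an image."""
    lo, hi = frame.min(), frame.max()
    if hi == lo:
        return np.zeros_like(frame, dtype=float)
    return (frame - lo) / (hi - lo)


class NearestCentroid:
    """Stand-in classifier: assigns a heat map to the class with the closest mean image."""

    def fit(self, frames, labels):
        self.classes_ = sorted(set(labels))
        maps = np.stack([to_heatmap(f).ravel() for f in frames])
        labels = np.asarray(labels)
        self.centroids_ = np.stack([maps[labels == c].mean(axis=0) for c in self.classes_])
        return self

    def predict(self, frame):
        x = to_heatmap(frame).ravel()
        dists = np.linalg.norm(self.centroids_ - x, axis=1)
        return self.classes_[int(np.argmin(dists))]


# Synthetic data: hypothetical "left" and "right" stances load opposite halves of the mat.
rng = np.random.default_rng(0)


def synthetic_frame(side: str) -> np.ndarray:
    frame = rng.uniform(0.0, 0.1, size=(8, 8))  # background noise
    if side == "left":
        frame[:, :4] += 1.0
    else:
        frame[:, 4:] += 1.0
    return frame


labels = ["left", "right"] * 10
model = NearestCentroid().fit([synthetic_frame(s) for s in labels], labels)
print(model.predict(synthetic_frame("left")))   # left
```

Normalizing each frame before classification means the classifier compares the shape of the pressure pattern rather than its absolute magnitude. A convolutional network, like the one the researchers' approach is modeled on, would learn richer spatial features from the same heat-map input.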

Analyzing Activities

Once the model was trained, it could classify the user’s activity on the smart mat (walking, running, doing push-ups, etc.) with 99.6 percent accuracy and could recognize seven yoga poses with 98.7 percent accuracy.

They also used a circular knitting machine to create a form-fitted smart textile shoe with 96 pressure sensing points spread across the entire 3D textile. They used the shoe to measure pressure exerted on different parts of the foot when the wearer kicked a soccer ball.
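To compare the load on different parts of the foot, the shoe's individual readings have to be grouped by region. The Python sketch below shows one way to do this. It assumes a hypothetical split of the 96 sensing points into four equal regions, because the article does not describe the real layout, and the `REGIONS` mapping and `regional_share` function are illustrative only.

```python
import numpy as np

# Hypothetical layout: the article gives 96 sensing points but not their placement.
REGIONS = {
    "heel": range(0, 24),
    "midfoot": range(24, 48),
    "forefoot": range(48, 72),
    "toes": range(72, 96),
}


def regional_share(readings: np.ndarray) -> dict[str, float]:
    """Fraction of the total measured pressure that falls in each foot region."""
    if readings.shape != (96,):
        raise ValueError("expected one reading per sensing point (96 values)")
    total = readings.sum()
    if total <= 0:
        return {name: 0.0 for name in REGIONS}
    return {name: float(readings[list(idx)].sum() / total) for name, idx in REGIONS.items()}


# Made-up frame for illustration: load concentrated on the forefoot and toes.
frame = np.zeros(96)
frame[48:72] = 3.0
frame[72:96] = 1.0
print(regional_share(frame))  # forefoot 0.75, toes 0.25, heel and midfoot 0.0
```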

The high accuracy of 3DKnITS could make the textiles useful for applications in prosthetics, where precision is essential. A smart textile liner could measure the pressure a prosthetic limb places on the socket, enabling a prosthetist to easily see how well the device fits, Wicaksono says.

He and his colleagues are also exploring more creative applications. In collaboration with a sound designer and a contemporary dancer, they developed a smart textile carpet that drives musical notes and soundscapes based on the dancer’s steps, to explore the bidirectional relationship between music and choreography. This research was recently presented at the ACM Creativity and Cognition Conference.

“I’ve learned that interdisciplinary collaboration can create some really unique applications,” he says.

Now that the researchers have demonstrated the success of their fabrication technique, Wicaksono plans to refine the circuit and machine-learning model. Currently, the model must be calibrated to each individual before it can classify actions, which is a time-consuming process. Removing that calibration step would make 3DKnITS easier to use. The researchers also want to test the smart shoes outside the lab to see how environmental conditions like temperature and humidity affect the accuracy of the sensors.

“It’s always amazing to see technology advance in ways that are so meaningful. It is incredible to think that the clothing we wear, an arm sleeve or a sock, can be created in ways that its three-dimensional structure can be used for sensing,” says Eric Berkson, assistant professor of orthopedic surgery at Harvard Medical School and sports medicine orthopedic surgeon at Massachusetts General Hospital, who was not involved in this research. “In the medical field, and in orthopedic sports medicine specifically, this technology provides the ability to better detect and classify motion and to recognize force distribution patterns in real-world (out of the laboratory) situations. This is the type of thinking that will enhance injury prevention and detection techniques and help evaluate and direct rehabilitation.”

References:

“3DKnITS: Three-dimensional Digital Knitting of Intelligent Textile Sensor for Activity Recognition and Biomechanical Monitoring” by I. Wicaksono, P. G. Hwang, S. Droubi, F. X. Wu, A. N. Serio, W. Yan and J. A. Paradiso, 1 July 2022, IEEE Engineering in Medicine and Biology Society Conference.


“Tapis Magique: Machine-knitted Electronic Textile Carpet for Interactive Choreomusical Performance and Immersive Environments” by I. Wicaksono, D. D. Haddad and J. Paradiso, 20 June 2022, C&C ’22: Creativity and Cognition.

DOI: 10.1145/3527927.3531451

This research was supported, in part, by the MIT Media Lab Consortium.
