Consciousness may emerge not from code, but from the way living brains physically compute.

Discussions about consciousness often stall between two deeply rooted viewpoints. One is computational functionalism, which holds that cognition can be fully explained as abstract information processing. According to this view, if a system has the right functional organization (regardless of the material it runs on), consciousness should emerge. The opposing view, biological naturalism, argues that consciousness cannot be separated from the unique features of living brains and bodies. From this perspective, biology is not just a carrier of cognition; it is a core part of what cognition is. Both positions capture important truths, but their ongoing standoff suggests that an essential element is missing.

A Third Perspective on How Brains Compute

In our new paper, we propose an alternative framework called biological computationalism. The term is intentionally provocative, but also meant to clarify the debate. Our central argument is that the standard model of computation is either broken or poorly aligned with how real brains function. For many years, it has been tempting to assume that brains compute in much the same way traditional computers do, as if cognition were software running on neural hardware. However, brains do not operate like von Neumann machines, and forcing that analogy leads to strained metaphors and fragile explanations. To seriously understand how brains compute, and what it would take to create minds in other substrates, we need to expand our definition of what computation actually means.

Biological computation, as we define it, has three key characteristics.

Hybrid Computation in the Living Brain

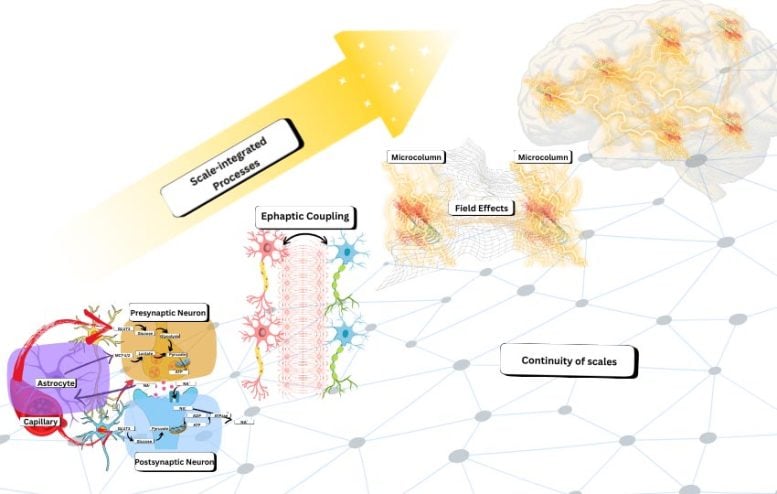

First, biological computation is hybrid. It blends discrete events with continuous processes. Neurons fire spikes, synapses release neurotransmitters, and neural networks shift between event-like states. At the same time, these events unfold within constantly changing physical environments that include voltage fields, chemical gradients, ionic diffusion, and time-varying conductances. The brain is neither purely digital nor simply analog. Instead, it operates as a layered system in which continuous dynamics influence discrete events, and discrete events reshape the surrounding continuous processes through ongoing feedback.
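The interplay described above can be illustrated with a toy model. The following is a minimal sketch, not the authors' model: a leaky integrate-and-fire neuron with an adaptation variable, where a continuous voltage is integrated step by step, a threshold crossing produces a discrete spike, and each spike in turn resets and reshapes the continuous state. The function name `simulate_hybrid_neuron` and every constant are assumptions chosen only for illustration.

```python
# Toy leaky integrate-and-fire neuron with spike-triggered adaptation.
# Illustrative only: the function name and all constants are assumed.
def simulate_hybrid_neuron(input_current, steps=1000, dt=0.1):
    """Euler-integrate a continuous voltage and return discrete spike times (ms)."""
    tau_m = 10.0    # membrane time constant in ms (assumed)
    tau_w = 100.0   # adaptation time constant in ms (assumed)
    v_rest, v_thresh, v_reset = -65.0, -50.0, -65.0  # mV (assumed)
    w_jump = 2.0    # adaptation added by each spike (assumed)

    v, w = v_rest, 0.0  # continuous state: voltage and slow adaptation
    spike_times = []
    for step in range(steps):
        # Continuous dynamics: voltage leaks toward rest, driven by input minus adaptation.
        v += dt * (-(v - v_rest) + input_current - w) / tau_m
        w += dt * (-w / tau_w)
        # Discrete event: a threshold crossing is recorded as a spike.
        if v >= v_thresh:
            spike_times.append(step * dt)
            v = v_reset   # the event resets the continuous voltage
            w += w_jump   # and reshapes the slow continuous variable
    return spike_times


if __name__ == "__main__":
    spikes = simulate_hybrid_neuron(20.0)
    gaps = [later - earlier for earlier, later in zip(spikes, spikes[1:])]
    print("spike times (ms):", spikes)
    print("inter-spike gaps (ms):", gaps)
```

In this sketch, a strong enough input produces spikes whose spacing grows over time, because each discrete event leaves a trace in the slow continuous variable that then delays the next event. Neither the discrete nor the continuous description alone accounts for that pattern.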

Why Brain Computation Cannot Be Separated by Scale

Second, biological computation is scale-inseparable. In conventional computing, it is usually possible to draw a clear boundary between software and hardware, or between a functional description and its physical implementation. In the brain, that boundary does not exist. There is no clean point where one can say, here is the algorithm, and over there is the physical machinery that carries it out. Instead, causal interactions span many levels at once, from ion channels to dendrites to neural circuits to whole-brain dynamics. These levels do not behave like neatly stacked modules. In biological systems, altering the so-called implementation also alters the computation itself, because the two are tightly intertwined.

Energy Constraints Shape Intelligence

Third, biological computation is metabolically grounded. The brain operates under strict energy limits, and those limits influence its organization at every level. This is not a minor engineering detail. Energy constraints affect what the brain can represent, how it learns, which patterns remain stable, and how information is coordinated and routed. From this perspective, the tight coupling across scales is not unnecessary complexity. It is an energy optimization strategy that supports flexible and resilient intelligence under severe metabolic constraints.
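One way to picture how an energy limit decides which activity patterns remain stable is a toy budget model. This is a rough sketch under assumed numbers, not something taken from the paper: a unit tries to fire at a fixed interval, each spike draws on an energy reserve, and the reserve refills at a constant rate up to a cap. The function `sustainable_spikes` and its parameters are hypothetical.

```python
# Toy energy budget for a single spiking unit.
# Illustrative only: the function name and all parameters are assumed.
def sustainable_spikes(attempt_period, steps=1000, supply=1, spike_cost=5, capacity=25):
    """Count the spikes actually emitted when every spike must be paid for in energy."""
    energy = capacity
    emitted = 0
    for step in range(steps):
        # Metabolic supply refills the reserve, up to a fixed capacity.
        energy = min(capacity, energy + supply)
        wants_to_fire = (step % attempt_period == 0)
        # A spike happens only if the reserve can cover its cost.
        if wants_to_fire and energy >= spike_cost:
            energy -= spike_cost
            emitted += 1
    return emitted


if __name__ == "__main__":
    for period in (10, 1):
        attempts = len(range(0, 1000, period))
        print(f"attempt every {period} step(s): "
              f"{sustainable_spikes(period)} of {attempts} spikes emitted")
```

With these assumed values, a sparse firing pattern is fully sustained, while a dense one is throttled to roughly the rate the supply can pay for. The constraint is therefore part of what determines the unit's output, not a detail layered on afterward.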

When the Algorithm Is the Physical System

Together, these three features lead to a conclusion that may feel unsettling to anyone used to classical ideas about computation. In the brain, computation is not abstract symbol manipulation. It is not simply a matter of moving representations according to formal rules while treating the physical medium as “mere implementation.” In biological computation, the algorithm is the substrate. The physical organization does not just enable computation; it constitutes it. Brains do not merely run programs. They are specific kinds of physical processes that compute by unfolding through time.

Limits of Current AI Models

This perspective also exposes a limitation in how contemporary artificial intelligence is often described. Even highly capable AI systems primarily simulate functions. They learn mappings from inputs to outputs, sometimes with impressive generalization, but the underlying computation remains a digital procedure running on hardware designed for a very different style of processing. Brains, in contrast, carry out computation in physical time. Continuous fields, ion flows, dendritic integration, local oscillatory coupling, and emergent electromagnetic interactions are not just biological “details” that can be ignored when extracting an abstract algorithm. In our view, these processes are the computational primitives of the brain. They are what allow real-time integration, robustness, and adaptive control.

Not Biology Only, But Biology-Like Computation

This does not mean we believe consciousness is exclusive to carbon-based life. We are not making a “biology or nothing” claim. Our argument is more precise. If consciousness (or mind-like cognition) depends on this particular kind of computation, then it may require biological-style computational organization, even when implemented in new substrates. The critical question is not whether a system is literally biological, but whether it instantiates the right kind of hybrid, scale-inseparable, metabolically (or more generally energetically) grounded computation.

Rethinking the Goal of Synthetic Minds

This shift has major implications for efforts to build synthetic minds. If brain computation cannot be separated from its physical realization, then simply scaling up digital AI may not be enough. This is not because digital systems cannot become more capable, but because capability alone does not capture what matters. The deeper risk is that we may be optimizing the wrong target by refining algorithms while leaving the underlying computational framework unchanged. Biological computationalism suggests that creating truly mind-like systems may require new kinds of physical machines, ones in which computation is not neatly divided into software and hardware, but spread across levels, dynamically linked, and shaped by real-time physical and energy constraints.

So if the goal is something like synthetic consciousness, the central question may not be, “What algorithm should we run?” Instead, it may be, “What kind of physical system must exist for that algorithm to be inseparable from its own dynamics?” What features are required, including hybrid event-field interactions, multi-scale coupling without clean interfaces, and energetic constraints that shape inference and learning, so that computation is not an abstract layer added on top, but an intrinsic property of the system itself?

That is the shift biological computationalism calls for. It moves the challenge away from finding the right program and toward identifying the right kind of computing matter.

Reference: “On biological and artificial consciousness: A case for biological computationalism” by Borjan Milinkovic and Jaan Aru, 17 December 2025, Neuroscience & Biobehavioral Reviews.

DOI: 10.1016/j.neubiorev.2025.106524

Comments

It’s becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean: can any particular theory be used to create a conscious machine at the level of a human adult? My bet is on the late Gerald Edelman’s Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I’ve encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there’s lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar’s lab at UC Irvine, possibly. Dr. Edelman’s roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461, and here is a video of Jeff Krichmar talking about some of the Darwin automata, https://www.youtube.com/watch?v=J7Uh9phc1Ow

Existence transcends simulation. There are physical truths that are inherently non-simulatable, yet they exist. Since the first cell, biology has interacted with physics in ways that a Turing machine cannot replicate.

The traditional dichotomy between software and hardware is limiting in this context. In biological systems, the substrate and its physical properties are fundamental and cannot be reduced to algorithmic execution. The limitation is not merely a lack of input data (such as arbitrarily detailed brain scans), undefined micro-laws, or insufficient computational power. Rather, it is an ontological distinction: the biological substrate cannot be abstractly separated into hardware and software. It retains a subjectivity—a set of non-computable truths—that is irreducible to algorithms.

As of 2026, our understanding of this is negligible. The prevailing belief that ‘everything is simulation’ is akin to a modern flat-earth theory. Major breakthroughs will likely stem from the recognition of strong emergence: the acknowledgment that physical truths exist which lie beyond the reach of simulation.

While I posit that consciousness is not necessarily limited to carbon, addressing it requires a radically different framework.

I strongly agree with this article, though I object to the term ‘biological computation.’ ‘Computation’ implies symbol manipulation akin to software. We require new terminology to delineate biological processes from computational ones, as the former are, in principle, impossible to capture through the latter.

I agree very much with this article. My only reservation is that I would not use the term ‘biological computation,’ as computation suggests software operations or symbol manipulation. I think a new word is needed here to cleanly distinguish what biology uses versus computation, as this process is not capturable, even in principle, by any sort of computation.

My motto is “existence exceeds simulation”. There are truths about physical systems that cannot be simulated; nevertheless, these truths exist. From the very first cell, biology learned to interact with physics in a way that cannot be captured purely by a Turing machine.

The traditional dichotomy between software and hardware is limiting in this context. In biological systems, the substrate and its physical properties are fundamental and cannot be reduced to algorithmic execution. The limitation is not merely a lack of input data (such as arbitrarily detailed brain scans), undefined micro-laws, or insufficient computational power. Rather, it is an ontological distinction: the biological substrate cannot be abstractly separated into hardware and software. It possesses its own subjectivity (expressed as a set of non-computable truths) that is irreducible to any algorithm.

While I posit that consciousness is not necessarily limited to carbon, addressing it requires a radically different framework and deeper understanding.

I believe that we are missing some as-yet-unknown process that transmits information between two spatially separate living entities, such as cells or neurons. It would be somewhat like distant neutrino pairs changing their spins instantaneously, or like two living entities intuitively knowing the presence or mind of each other.

Consciousness does not, and cannot, emerge from materiality; it is prior.

Consciousness is prior to materiality … it does not, and cannot, emerge from it. That is mythology.

This is because material description presupposes observation, interpretation, and meaning—all of which require conscious access.

Material systems are defined through third-person frameworks (measurement, modeling, inference), but those frameworks only function within conscious experience.

There is no coherent account of “matter” that does not already assume a conscious standpoint from which matter is known, described, or verified.

Thus, materiality is epistemically downstream of consciousness: it is what appears within experience, not what explains experience.

From a coherence-first (WPCA) perspective, consciousness functions as the causal integration layer that makes stable meaning, agency, and discernment possible.

If material processes were ontologically primary, consciousness would have to emerge as a publicly specifiable object—yet this collapses the necessary boundary between observer and observed and results in category error and causal incoherence.

Treating consciousness as prior avoids this collapse: materiality becomes a constrained, shareable representation arising within conscious fields, while consciousness remains the irreducible interior locus where causation, valuation, and coherence are integrated.

In short, matter can be modeled without loss as dependent on consciousness; consciousness cannot be modeled without presupposing itself.

Believing that consciousness can come from materiality is like believing the Earth is flat…

There is no way to prove what consciousness is.