This method exposes fake images created by computer algorithms rather than by humans.

They look deceptively real, but they are made by computers: so-called deep-fake images are generated by machine learning algorithms, and humans are largely unable to tell them apart from real photos. Researchers at the Horst Görtz Institute for IT Security at Ruhr-Universität Bochum and the Cluster of Excellence “Cyber Security in the Age of Large-Scale Adversaries” (Casa) have developed a new method for efficiently identifying deep-fake images. To do so, they analyze the images in the frequency domain, an established signal-processing technique.

The team presented their work on 15 July 2020 at the International Conference on Machine Learning (ICML), one of the leading conferences in the field. The researchers have also made their code freely available online* so that other groups can reproduce their results.

Interaction of two algorithms results in new images

Deep-fake images (the term is a portmanteau of “deep learning”, a branch of machine learning, and “fake”) are generated with the help of computer models called Generative Adversarial Networks, or GANs for short. Two algorithms are pitted against each other in these networks. The first, the generator, creates images from random input data. The second, the discriminator, has to decide whether a given image is real or fake. Whenever the discriminator exposes a fake, the generator is adjusted so that its next images are harder to distinguish from real ones. This continues until the discriminator can no longer reliably recognize the generated images as fakes.
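The adversarial loop described above can be sketched in a few dozen lines of Python with NumPy. This is a deliberately tiny, hypothetical example, not how image GANs are actually built: the “images” are single numbers, the first algorithm (the generator) is the linear map `a * z + b` applied to random noise `z`, and the second algorithm (the discriminator) is a logistic model on `x` and `x ** 2`. The target distribution (mean 4, standard deviation 1.5), the learning rate, the gradient clipping and all function names are assumptions chosen for illustration.

```python
import numpy as np

def sigmoid(s):
    # tanh form of the logistic function; avoids overflow in exp() for large |s|
    return 0.5 * (1.0 + np.tanh(0.5 * s))

def d_logits(params_d, x):
    """Discriminator score before the sigmoid; higher means 'looks real'."""
    w1, w2, c = params_d
    return w1 * x + w2 * x ** 2 + c

def discriminator_loss(params_d, x_real, x_fake):
    # Binary cross-entropy: real samples labelled 1, generated samples labelled 0.
    # logaddexp(0, -s) equals -log(sigmoid(s)), computed stably.
    return (np.mean(np.logaddexp(0.0, -d_logits(params_d, x_real)))
            + np.mean(np.logaddexp(0.0, d_logits(params_d, x_fake))))

def discriminator_grad(params_d, x_real, x_fake):
    g_real = sigmoid(d_logits(params_d, x_real)) - 1.0  # dLoss/dlogit, real samples
    g_fake = sigmoid(d_logits(params_d, x_fake))        # dLoss/dlogit, fake samples
    return np.array([
        np.mean(g_real * x_real) + np.mean(g_fake * x_fake),
        np.mean(g_real * x_real ** 2) + np.mean(g_fake * x_fake ** 2),
        np.mean(g_real) + np.mean(g_fake),
    ])

def generator_loss(params_g, params_d, z):
    # Non-saturating generator loss: push the discriminator to call fakes real.
    a, b = params_g
    return np.mean(np.logaddexp(0.0, -d_logits(params_d, a * z + b)))

def generator_grad(params_g, params_d, z):
    a, b = params_g
    w1, w2, _ = params_d
    x_fake = a * z + b
    # Chain rule: dLoss/dlogit * dlogit/dx, then dx/da = z and dx/db = 1.
    dl_dx = (sigmoid(d_logits(params_d, x_fake)) - 1.0) * (w1 + 2.0 * w2 * x_fake)
    return np.array([np.mean(dl_dx * z), np.mean(dl_dx)])

def clip(grad, max_norm=1.0):
    # Cap the step size so one bad update cannot blow up the toy model.
    norm = np.linalg.norm(grad)
    return grad if norm <= max_norm else grad * (max_norm / norm)

def train(steps=2000, lr=0.005, batch=256, seed=0):
    rng = np.random.default_rng(seed)
    params_d = np.zeros(3)           # discriminator: w1, w2, c
    params_g = np.array([1.0, 0.0])  # generator: a, b
    for _ in range(steps):
        x_real = rng.normal(4.0, 1.5, batch)  # hypothetical "real" data
        z = rng.normal(size=batch)
        x_fake = params_g[0] * z + params_g[1]
        # 1) Discriminator step: get better at spotting fakes.
        params_d = params_d - lr * clip(discriminator_grad(params_d, x_real, x_fake))
        # 2) Generator step: revise outputs so they pass as real.
        z = rng.normal(size=batch)
        params_g = params_g - lr * clip(generator_grad(params_g, params_d, z))
    return params_g, params_d
```

Each iteration first nudges the discriminator toward separating real from generated samples, then nudges the generator toward samples the discriminator scores as real. Toy GANs like this one often oscillate instead of settling down, so the sketch illustrates the alternating updates rather than guaranteeing a good generator.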

In recent years, this technique has made deep-fake images increasingly convincing. On the website “Which face is real”**, users can test whether they can tell fakes from original photos. “In the era of fake news, it can be a problem if users don’t have the ability to distinguish computer-generated images from originals,” says Professor Thorsten Holz from the Chair for Systems Security.

For their analysis, the Bochum-based researchers used the data sets that also form the basis of the above-mentioned page “Which face is real”. In this interdisciplinary project, Joel Frank, Thorsten Eisenhofer, and Professor Thorsten Holz from the Chair for Systems Security cooperated with Professor Asja Fischer from the Chair of Machine Learning as well as Lea Schönherr and Professor Dorothea Kolossa from the Chair of Digital Signal Processing.

Frequency analysis reveals typical artifacts

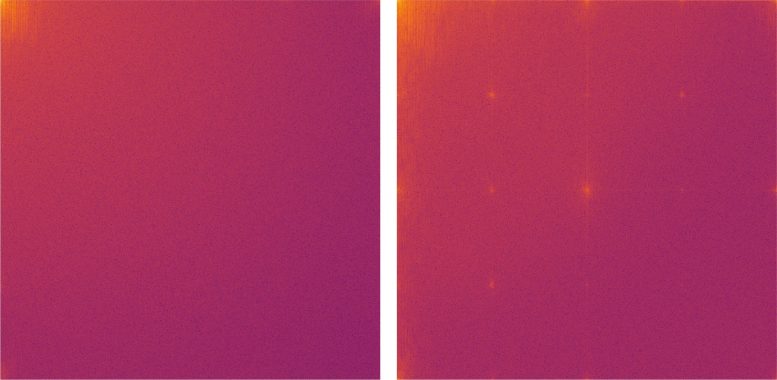

Until now, deep-fake images have been analyzed with complex statistical methods. The Bochum group took a different approach: they converted the images into the frequency domain using the discrete cosine transform (DCT). This expresses each image as a weighted sum of many cosine functions of different frequencies. In natural images, most of the energy lies in the low-frequency components.
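To make the frequency-domain idea concrete, here is a minimal sketch in Python with NumPy; the article does not say which tools the researchers used, so this is not their code. It builds the orthonormal DCT-II basis directly, transforms a 2D grayscale array, and measures how much of the energy falls into the lowest-frequency coefficients. The helper names (`dct_matrix`, `dct2`, `idct2`, `low_freq_energy_fraction`), the `cutoff` parameter and the synthetic test images are illustrative assumptions.

```python
import numpy as np

def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II basis: row k samples a cosine with k half-periods."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    basis = np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    basis[0, :] *= np.sqrt(1.0 / n)
    basis[1:, :] *= np.sqrt(2.0 / n)
    return basis

def dct2(img: np.ndarray) -> np.ndarray:
    """2D DCT-II of a grayscale image: transform along columns, then rows."""
    h, w = img.shape
    return dct_matrix(h) @ img @ dct_matrix(w).T

def idct2(coeffs: np.ndarray) -> np.ndarray:
    """Inverse 2D DCT: the basis is orthonormal, so its transpose inverts it."""
    h, w = coeffs.shape
    return dct_matrix(h).T @ coeffs @ dct_matrix(w)

def low_freq_energy_fraction(img: np.ndarray, cutoff: int) -> float:
    """Share of total energy in the top-left cutoff x cutoff block (lowest frequencies)."""
    energy = dct2(img) ** 2
    return float(energy[:cutoff, :cutoff].sum() / energy.sum())

if __name__ == "__main__":
    n = 64
    y, x = np.mgrid[0:n, 0:n]
    # A smooth blob stands in for the slowly varying content of natural photos.
    smooth = np.exp(-((x - n / 2) ** 2 + (y - n / 2) ** 2) / (2 * 10.0 ** 2))
    # White noise spreads its energy evenly over all frequencies.
    noise = np.random.default_rng(0).normal(size=(n, n))
    print("smooth:", low_freq_energy_fraction(smooth, 16))
    print("noise: ", low_freq_energy_fraction(noise, 16))
```

For the smooth image almost all of the energy lands in the 16 × 16 low-frequency block, while for white noise only roughly the block's share of all coefficients does. In practice, `scipy.fft.dctn(img, norm="ortho")` computes the same orthonormal transform much faster; the explicit matrix is used here only to show that each coefficient is a projection onto one cosine pattern.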

The analysis showed that images generated by GANs exhibit artifacts in the high-frequency range. For example, a typical grid structure emerges in the frequency representation of fake images. “Our experiments showed that these artifacts occur not only in GAN-generated images. They are a structural problem of all deep learning algorithms,” explains Joel Frank from the Chair for Systems Security. “We assume that the artifacts described in our study will always tell us whether the image is a deep-fake image created by machine learning,” adds Frank. “Frequency analysis is, therefore, an effective way to automatically recognize computer-generated images.”
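The article does not explain where the grid structure comes from. One plausible mechanism, and an assumption on our part here, is the way generators enlarge small intermediate images: nearest-neighbour upsampling (modelled below by the hypothetical helper `nearest_upsample`) copies each pixel into a 2 × 2 block, which leaves attenuated, mirrored copies of the low-frequency spectrum in the high-frequency range. The sketch compares a smooth 64 × 64 image with one produced by upsampling a 32 × 32 version of the same content, using the share of DCT energy in the highest-frequency band as a crude indicator. All names, sizes and the band threshold are illustrative choices, not the detector from the paper.

```python
import numpy as np

def dct_matrix(n):
    """Orthonormal DCT-II basis matrix (row k = k-th cosine function)."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    basis = np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    basis[0, :] *= np.sqrt(1.0 / n)
    basis[1:, :] *= np.sqrt(2.0 / n)
    return basis

def dct2(img):
    h, w = img.shape
    return dct_matrix(h) @ img @ dct_matrix(w).T

def nearest_upsample(img, factor=2):
    """Copy every pixel into a factor x factor block (hypothetical generator step)."""
    return np.repeat(np.repeat(img, factor, axis=0), factor, axis=1)

def gaussian_blob(n, sigma):
    """Smooth synthetic test image centred in an n x n grid."""
    y, x = np.mgrid[0:n, 0:n]
    c = (n - 1) / 2.0
    return np.exp(-((x - c) ** 2 + (y - c) ** 2) / (2 * sigma ** 2))

def high_freq_energy_fraction(img, band_start):
    """Share of DCT energy in rows or columns with index >= band_start."""
    energy = dct2(img) ** 2
    mask = np.zeros(energy.shape, dtype=bool)
    mask[band_start:, :] = True
    mask[:, band_start:] = True
    return float(energy[mask].sum() / energy.sum())

if __name__ == "__main__":
    real_like = gaussian_blob(64, 6.0)                     # rendered at full size
    upsampled = nearest_upsample(gaussian_blob(32, 3.0))   # same content, enlarged 2x
    print("real-like:", high_freq_energy_fraction(real_like, 48))
    print("upsampled:", high_freq_energy_fraction(upsampled, 48))
```

Both images show the same blob, yet only the upsampled one carries measurable energy near the top of the spectrum. A periodic pattern of such leftovers is one way a grid can appear in the frequency representation; the paper's actual analysis uses real GAN output rather than this synthetic stand-in.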

Reference: “Leveraging Frequency Analysis for Deep Fake Image Recognition” by Joel Frank, Thorsten Eisenhofer, Lea Schönherr, Asja Fischer, Dorothea Kolossa and Thorsten Holz, 2020, International Conference on Machine Learning (ICML).

Notes

* Code available at GitHub.

** Website: WhichFaceIsReal.com

3 Comments

Unfortunately, it will be just as easy to use this technique to remove the artifacts from “deep fake” photographs in the next generation of development.

Yep, the moment DeepFakes was created, I knew what was coming … it ain’t here yet, but it almost is, and we aren’t in the least bit ready for it.

Mark Keller, not necessarily. People I work with have been testing various spectroscopic techniques to find a simple & foolproof way of discerning all computer-generated images. Consider that deep learning algorithms either generate from scratch, use a mosaic of pixels from “real” images, or blend the two. Both selfies and deep fakes have telltale photonic patterns. Night vision goggles are said to be very handy, & then there’s the code, which will give away anomalies like repetition (I’m not well versed in this area, but I think that was the gist).

I don’t want to give away too many tricks just yet, but there’s an obvious checkmate move where machine learning & code pirates with malevolent intent will ONLY be able to develop fakes from a limited pool, that will increasingly shrink as each protocol nullifies the most obvious sources. Another factor in their predicted obsolescence is the way in which amateurs have been saturating the surface net with cheesy retrograde apps, and dumb social media gimmicks, so the diligent few have already figured out the obvious telltales.