The development of robotic avatars could benefit from an improvement in how computers detect objects in low-resolution images.

A team at RIKEN has improved computer vision by training algorithms to better identify objects in low-resolution images. Inspired by how the human brain forms memories, their model degrades high-resolution images and uses the results for self-supervised learning, which improves object recognition in low-quality images. The work is expected to benefit not only traditional computer vision applications but also cybernetic avatars and terahertz imaging.

Robotic Avatar Vision Enhancement Inspired by Human Perception

A small tweak to algorithms typically used to enhance images could dramatically boost computer vision recognition in applications ranging from self-driving cars to cybernetic avatars, according to new research from scientists at RIKEN in Japan.

Unconventional Approach to Computer Vision

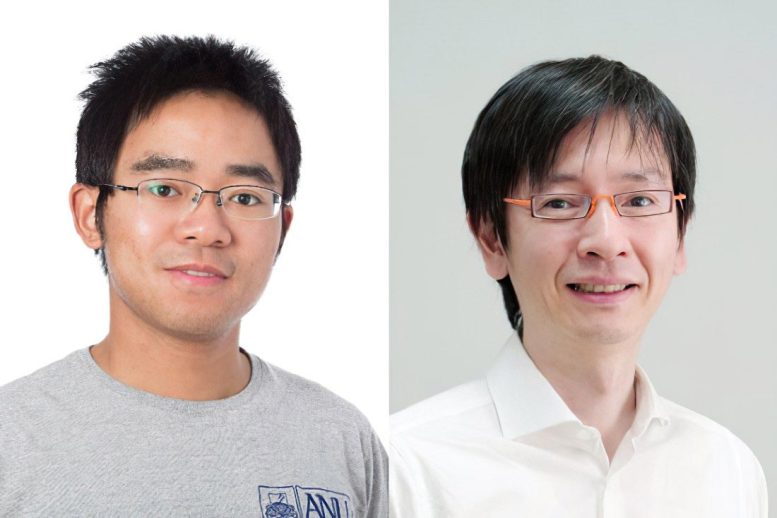

Unlike most artificial intelligence (AI) experts, Lin Gu from the RIKEN Center for Advanced Intelligence Project began his career as a therapist. This background gave him unique insight into scale variance, a critical issue in computer vision that refers to the difficulty of accurately detecting objects at different scales in an image. Because most AI systems are trained on high-resolution images, real-world low-quality pictures with blurry or distorted features pose a challenge to recognition algorithms.

The situation reminded Gu of Alice in Wonderland syndrome, a distorted vision condition that causes objects to appear smaller or larger than they actually are. “Human vision has size constancy, meaning we perceive objects as being the same size despite how the retinal image changes,” says Gu. “In contrast, existing computer vision algorithms lack that constancy, like Alice.”

A Novel Approach to Image Recognition

Now, inspired by hippocampal replay, a process the brain uses to form memories, Gu and his colleagues have developed a model that randomly degrades a high-resolution image by lowering its resolution and adding blur and noise, then searches for features that stay the same after repeated changes.
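To make the idea of random degradation concrete, here is a minimal Python sketch. It is not the RIKEN team's implementation: the function names, the box blur, the average-pooling downsampler, and the parameter ranges are all assumptions chosen for illustration, and the image is a tiny synthetic grayscale grid rather than a real photograph.

```python
import random

def downsample(img, factor):
    """Average-pool a 2D grayscale image (list of rows) by an integer factor."""
    h, w = len(img) // factor, len(img[0]) // factor
    return [
        [
            sum(img[y * factor + dy][x * factor + dx]
                for dy in range(factor) for dx in range(factor)) / factor ** 2
            for x in range(w)
        ]
        for y in range(h)
    ]

def box_blur(img, radius):
    """Blur by averaging each pixel with its neighbours that lie inside the image."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            vals = [img[j][i]
                    for j in range(max(0, y - radius), min(h, y + radius + 1))
                    for i in range(max(0, x - radius), min(w, x + radius + 1))]
            row.append(sum(vals) / len(vals))
        out.append(row)
    return out

def add_noise(img, sigma, rng):
    """Add zero-mean Gaussian noise and clamp every pixel to [0, 1]."""
    return [[min(1.0, max(0.0, p + rng.gauss(0.0, sigma))) for p in row]
            for row in img]

def random_degrade(img, rng):
    """Apply a randomly parameterised downsample, blur, and noise step.

    The parameter ranges below are hypothetical, not taken from the paper.
    Returns the degraded image and the parameters that produced it.
    """
    params = {
        "factor": rng.choice([1, 2, 4]),   # resolution reduction
        "radius": rng.choice([0, 1, 2]),   # blur strength
        "sigma": rng.uniform(0.0, 0.1),    # noise level
    }
    out = downsample(img, params["factor"])
    out = box_blur(out, params["radius"])
    out = add_noise(out, params["sigma"], rng)
    return out, params

rng = random.Random(0)
# Synthetic 8x8 "high-resolution" image: a horizontal gradient in [0, 1].
image = [[x / 7 for x in range(8)] for _ in range(8)]
degraded, params = random_degrade(image, rng)
print(params, len(degraded), "x", len(degraded[0]))
```

Because the degradation parameters are known for every generated image, they can serve as a training signal with no human labelling, which is the general sense in which resolution can act as a self-supervision clue.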

By training on the generated data, the algorithm can perform self-supervised learning: helping other image-processing algorithms figure out what objects are in the image and where they are located without human intervention. The result: a more computationally efficient method of encoding and restoring the critical details in an image.

“In typical self-supervised learning methods, training data is modified by either masking part of the image or changing contrast before learning the supervisory signal,” explains Gu. “We propose using resolution as a self-supervision clue for the first time.”

Future Implications and Collaborations

Aside from typical computer vision uses, Gu notes that perceptual constant representation will be a fundamental part of technologies related to cyborgs and avatars. As an example, he cites his participation in a futuristic project by Japanese science agencies to create a realistic digital version of a government minister that can interact with citizens.

“For the artificial memory mechanism, representations that are invariant to resolution changes can act as a keystone,” says Gu. “I’m working with neuroscientists in RIKEN to explore the relation between artificial perceptual constant representation and the real one in the brain.”

This method is also being applied to terahertz imaging—an emerging non-destructive imaging technique with much potential in biomedicine, security and materials characterization. “As part of an ongoing collaboration with Michael Johnston’s team at Oxford University, we’re developing a new generation of terahertz imaging devices by using AI to enhance its quality and resolution,” Gu says.

Reference: “Exploring Resolution and Degradation Clues as Self-supervised Signal for Low Quality Object Detection” by Ziteng Cui, Yingying Zhu, Lin Gu, Guo-Jun Qi, Xiaoxiao Li, Renrui Zhang, Zenghui Zhang and Tatsuya Harada, 6 November 2022, European Conference on Computer Vision.

DOI: 10.1007/978-3-031-20077-9_28
