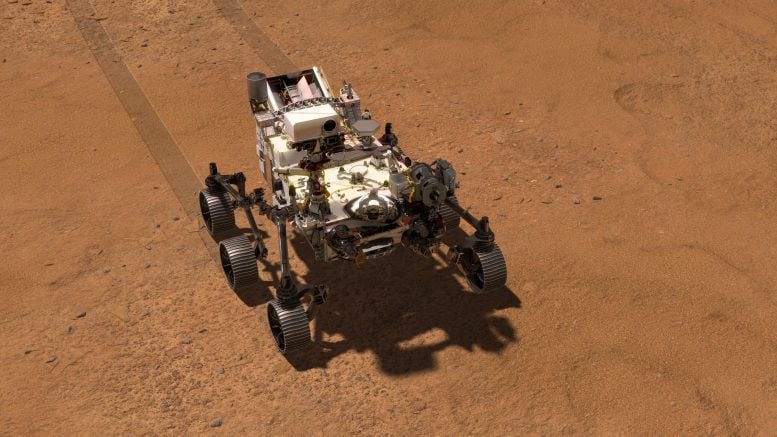

An AI just helped guide a NASA rover across Mars, marking a major leap in autonomous space exploration.

The team behind NASA’s six-wheeled robotic explorer tested a vision-enabled artificial intelligence system to plan a safe driving route across the surface of Mars without relying on human route planners.

NASA’s Perseverance rover has now completed the first drives on another planet that were planned by artificial intelligence. The demonstration took place on Dec. 8 and 10, 2025, and was led by NASA’s Jet Propulsion Laboratory in Southern California. During the test, generative AI handled the task of selecting waypoints for the rover, a detailed planning process that has traditionally been carried out by human rover drivers.

“This demonstration shows how far our capabilities have advanced and broadens how we will explore other worlds,” said NASA Administrator Jared Isaacman. “Autonomous technologies like this can help missions to operate more efficiently, respond to challenging terrain, and increase science return as distance from Earth grows. It’s a strong example of teams applying new technology carefully and responsibly in real operations.”

NASA’s Perseverance used its navigation cameras to capture its two-hour, 30-minute drive along Jezero Crater’s rim on December 10, 2025. The navcam images were combined with rover data and placed into a 3D virtual environment, resulting in this reconstruction, with virtual frames inserted about every 4 inches (0.1 meters) of drive progress. Credit: NASA/JPL-Caltech

How Vision AI Guided the Rover

To carry out the demonstration, the team used a form of generative artificial intelligence known as vision-language models. These models analyzed existing information from JPL’s surface mission dataset, drawing on the same images and data that human planners normally use.

Based on this analysis, the AI generated waypoints — fixed locations where the rover takes up a new set of instructions — allowing Perseverance to move safely across difficult Martian terrain.

The effort was coordinated from JPL’s Rover Operations Center (ROC) and carried out in collaboration with Anthropic, using the company’s Claude AI models.

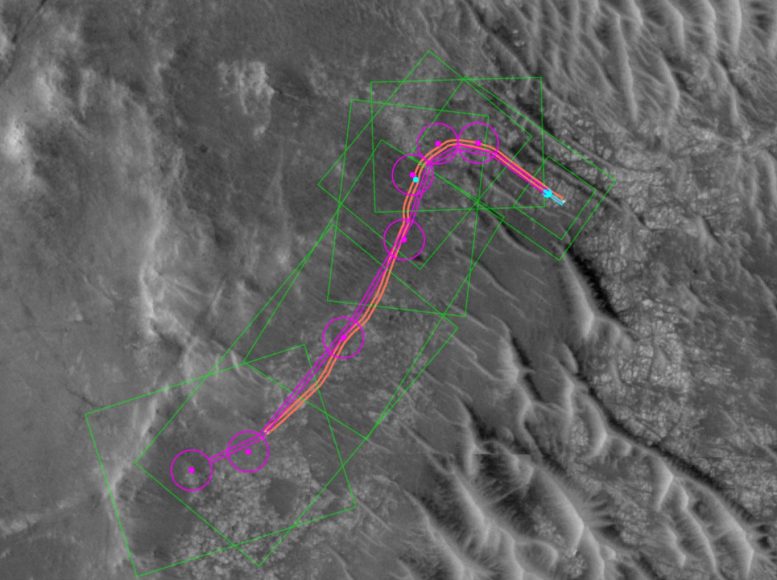

This animation was created using data acquired during Perseverance’s Dec. 10, 2025, drive on Jezero Crater’s rim. Pale blue lines depict the track the rover’s wheels take. Black lines snaking out in front of the rover show the path options the rover is considering. The white terrain is a height map based on rover data. The blue circle that appears near the end of the animation is a waypoint. Credit: NASA/JPL-Caltech

Why Mars Rover Driving Is So Challenging

Mars lies an average of about 140 million miles (225 million kilometers) from Earth. Because of this distance, communication delays prevent real-time remote control — or “joy-sticking” — of a rover. Instead, for the past 28 years and across multiple missions, human rover drivers have carefully planned routes in advance.
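To put that delay in numbers, here is a minimal sketch that converts the average distance quoted above into signal travel time. It assumes the signal moves at the speed of light in a vacuum and ignores relay and processing overhead; the function name is illustrative only. At the average distance, the one-way delay works out to roughly 12.5 minutes, so a single command-and-response cycle would take about 25 minutes.

```python
# Speed of light in a vacuum, in kilometers per second (exact SI value).
SPEED_OF_LIGHT_KM_S = 299_792.458


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way signal travel time, in minutes, over distance_km."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


# Average Earth-Mars distance quoted in the article (225 million kilometers).
average_distance_km = 225e6

delay = one_way_delay_minutes(average_distance_km)
print(f"One-way delay: {delay:.1f} min, round trip: {2 * delay:.1f} min")
```

The actual delay varies with the planets' positions, since the Earth-Mars distance changes considerably over time.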

These drivers study terrain images and system data to design paths made up of waypoints, typically spaced no more than 330 feet (100 meters) apart to reduce the risk of hazards. Once finalized, the routes are transmitted through NASA’s Deep Space Network, and the rover executes the instructions on its own.
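The spacing rule lends itself to a simple check. The sketch below is purely illustrative and is not JPL's planning software: it assumes waypoints are given as flat (east, north) coordinates in meters, and the route itself is made up for the example. It flags any pair of consecutive waypoints farther apart than the 100-meter limit mentioned above.

```python
import math

MAX_SPACING_M = 100.0  # spacing limit quoted in the article


def spacing_violations(waypoints, max_spacing_m=MAX_SPACING_M):
    """Return (index, distance) for each consecutive pair of waypoints
    whose straight-line separation exceeds max_spacing_m."""
    violations = []
    for i in range(len(waypoints) - 1):
        distance = math.dist(waypoints[i], waypoints[i + 1])
        if distance > max_spacing_m:
            violations.append((i, distance))
    return violations


# Hypothetical route in local (east, north) meters; not real mission data.
route = [(0.0, 0.0), (30.0, 40.0), (30.0, 160.0), (60.0, 200.0)]

for index, distance in spacing_violations(route):
    print(f"Waypoints {index} and {index + 1} are {distance:.0f} m apart")
```

In this made-up route, only the second leg (120 meters) exceeds the limit; a planner would need to add an intermediate waypoint there.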

Generative AI Takes Over Route Planning

For Perseverance’s drives on the mission’s 1,707th and 1,709th Martian days, known as sols, the mission team shifted route planning to generative AI. The system analyzed high-resolution orbital images from the HiRISE (High Resolution Imaging Science Experiment) camera aboard NASA’s Mars Reconnaissance Orbiter, along with terrain slope data derived from digital elevation models.

After identifying key surface features — bedrock, outcrops, hazardous boulder fields, sand ripples, and the like — the AI produced a continuous driving route that included all required waypoints.

Before sending the commands to Mars, engineers ran the AI-generated instructions through JPL’s “digital twin,” a virtual replica of the rover. This process verified more than 500,000 telemetry variables to ensure the plan was fully compatible with the rover’s flight software.

On December 8, Perseverance drove 689 feet (210 meters) using the AI-generated route. Two days later, the rover traveled another 807 feet (246 meters).
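For a sense of scale, the two drive distances can be totaled and converted with a few lines of arithmetic. The average-speed figure in this sketch rests on an assumption: that the full 246 meters on Dec. 10 was covered during the two-hour, 30-minute drive time given in the image caption above. Treat it as a rough estimate, not a reported value.

```python
FEET_PER_METER = 1 / 0.3048  # exact, by definition of the international foot

# Drive distances in meters, as reported in the article.
drives_m = {"Dec. 8": 210.0, "Dec. 10": 246.0}

total_m = sum(drives_m.values())
total_ft = total_m * FEET_PER_METER
print(f"Total AI-planned distance: {total_m:.0f} m ({total_ft:.0f} ft)")

# Assumption: the whole Dec. 10 distance was covered in the 2.5-hour
# drive time quoted in the image caption.
dec10_drive_hours = 2.5
avg_speed_m_per_h = drives_m["Dec. 10"] / dec10_drive_hours
print(f"Estimated Dec. 10 average speed: {avg_speed_m_per_h:.1f} m/h")
```

Under that assumption, the rover averaged a little under 100 meters per hour across the Dec. 10 drive, and the two drives together covered 456 meters.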

What This Means for Future Exploration

“The fundamental elements of generative AI are showing a lot of promise in streamlining the pillars of autonomous navigation for off-planet driving: perception (seeing the rocks and ripples), localization (knowing where we are), and planning and control (deciding and executing the safest path),” said Vandi Verma, a space roboticist at JPL and a member of the Perseverance engineering team. “We are moving towards a day where generative AI and other smart tools will help our surface rovers handle kilometer-scale drives while minimizing operator workload, and flag interesting surface features for our science team by scouring huge volumes of rover images.”

“Imagine intelligent systems not only on the ground at Earth, but also in edge applications in our rovers, helicopters, drones, and other surface elements trained with the collective wisdom of our NASA engineers, scientists, and astronauts,” said Matt Wallace, manager of JPL’s Exploration Systems Office. “That is the game-changing technology we need to establish the infrastructure and systems required for a permanent human presence on the Moon and take the U.S. to Mars and beyond.”

More About Perseverance

Managed for NASA by Caltech, JPL is home to the Rover Operations Center (ROC). The laboratory also oversees daily operations of the Perseverance rover for NASA’s Science Mission Directorate as part of the agency’s Mars Exploration Program portfolio.
