Discovery boosts understanding of how the human brain learns speech.

A father holds up his newborn, their faces only inches apart, and slowly repeats the syllables “da” and “dee.” After months of hearing these sounds, the baby begins to babble and gradually “da da da” is refined into the word “Daddy.”

Speech is learned.

These are critical steps in our intellectual development, yet many of the components of vocal learning remain a mystery. How does the brain encode the memories needed to imitate our parents’ speech? And can scientists intervene when the process goes awry?

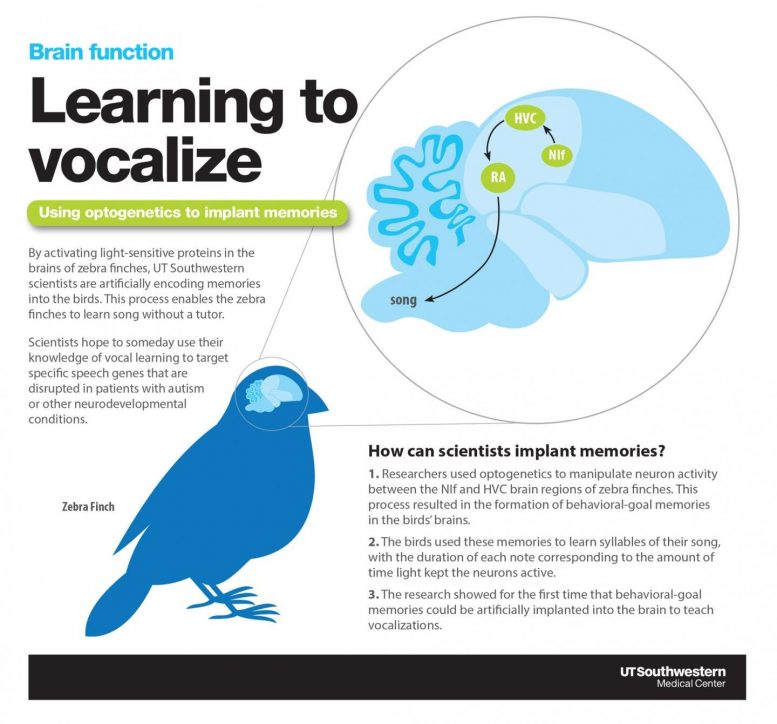

Researchers at UT Southwestern have begun to answer these questions in a new songbird study that shows memories can be implanted in the brain to teach vocalizations — without any lessons from the parent. Although the findings have no immediate implications for treating patients, they do provide compelling clues about where to look in the human brain to better understand autism and other conditions that affect language.

“This is the first time we have confirmed brain regions that encode behavioral-goal memories — those memories that guide us when we want to imitate anything from speech to learning the piano,” said Dr. Todd Roberts, a neuroscientist with UT Southwestern’s O’Donnell Brain Institute. “The findings enabled us to implant these memories into the birds and guide the learning of their song.”

Activating neurons

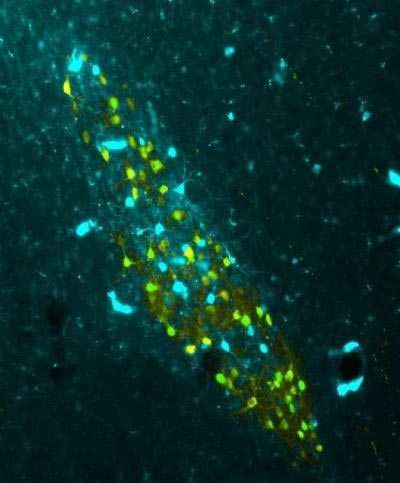

The study published in Science outlines how scientists activated a circuit of neurons through optogenetics — a relatively new tool that uses light to monitor and control brain activity.

The researchers used zebra finches because they share many of the human stages of vocal development: Early in life, the birds hear their fathers sing, eventually memorizing the notes. They learn to replicate the behavior after practicing tens of thousands of times.

By controlling the interaction between two regions of the brain, Dr. Roberts’ team encoded memories in zebra finches that had no tutoring experience from their fathers. The birds used these memories to learn syllables of their song, with the duration of each note corresponding to the amount of time the light kept the neurons active. The shorter the light exposure, the shorter the note.

“We’re not teaching the bird everything it needs to know, just the duration of syllables in its song,” Dr. Roberts said. “The two brain regions we tested in this study represent just one piece of the puzzle.”

Nevertheless, the discovery is notable because it opens new avenues of research to identify more brain circuits that influence other aspects of vocalization, such as pitch and the order of each sound.

“If we figure out those other pathways, we could hypothetically teach a bird to sing its song without any interaction from its father,” Dr. Roberts said. “But we’re a long way from being able to do that.”

Targeting speech disorders

The discovery is the latest in a string of findings from the Roberts laboratory, which specializes in documenting how the brain functions during vocal learning. By mapping the neural processes involved as birds learn mating songs, scientists hope to someday use that knowledge to target specific speech genes that are disrupted in patients with autism or other neurodevelopmental conditions.

Among other recent projects, Dr. Roberts’ team identified a network of neurons that plays a vital role in learning vocalizations by aiding communication between motor and auditory regions of the brain. His lab is also leading an ongoing study funded by the federal BRAIN Initiative research program.

Implanting memories

The findings described in the Science study break new ground in establishing how behavioral-goal memories are formed and their role in learning vocalizations.

“It has been hard to study these kinds of memories in the lab because we haven’t known where they’re encoded,” Dr. Roberts said.

His team found some of those answers by testing connections between sensory-motor areas of the brain. Specifically, researchers used optogenetics to manipulate neuron activity in the NIf brain region and to control the information it sends to the HVC, another brain area implicated in learning from auditory experience.

Besides documenting the NIf’s role in forming syllable-specific memories, Dr. Roberts’ team found that these memories were being stored somewhere else in the brain after their formation. Scientists showed this by cutting off communication between the NIf and HVC at different points of the learning process: Zebra finches that had already formed the memory could still perform the song, while those that were tutored only after the neural communication was severed could not copy the song.

Dr. Roberts said his lab will examine other brain regions that carry different kinds of information to the HVC in hopes of gaining a fuller understanding of how additional properties of behavioral-goal memories are formed.

“The human brain and the pathways associated with speech and language are immensely more complicated than the songbird’s circuitry,” Dr. Roberts said. “But our research is providing strong clues of where to look for more insight on neurodevelopmental disorders.”

###

Dr. Roberts is an Assistant Professor of Neuroscience and a Thomas O. Hicks Scholar in Medical Research in UT Southwestern’s Peter O’Donnell Jr. Brain Institute. The Science study was supported with grants from the U.S. National Institutes of Health and the National Science Foundation.

Reference: “Inception of memories that guide vocal learning in the songbird” by Wenchan Zhao, Francisco Garcia-Oscos, Daniel Dinh and Todd F. Roberts, 4 October 2019, Science.

DOI: 10.1126/science.aaw4226
