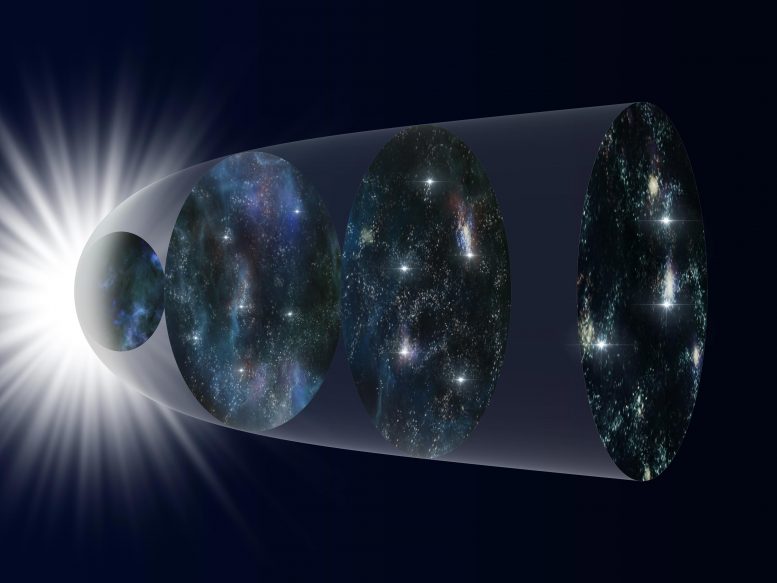

New research suggests the Hubble constant may change over time, offering a better fit for supernova data than traditional models.

An international research team analyzed a database of more than 1,000 supernova explosions and found that models for the expansion of the Universe best match the data when a new time-dependent variation is introduced. If proven correct with future, higher-quality data from the Subaru Telescope and other observatories, these results could indicate still-unknown physics working on the cosmic scale.

Edwin Hubble’s observations over 90 years ago showing the expansion of the Universe remain a cornerstone of modern astrophysics. But when you get into the details of calculating how fast the Universe was expanding at different times in its history, scientists have difficulty getting theoretical models to match observations.

Rethinking the Hubble Constant

To solve this problem, a team led by Maria Dainotti (Assistant Professor at the National Astronomical Observatory of Japan and the Graduate University for Advanced Studies, SOKENDAI in Japan and an affiliated scientist at the Space Science Institute in the U.S.A.) analyzed a catalog of 1048 supernovae which exploded at different times in the history of the Universe. The team found that the theoretical models can be made to match the observations if one of the constants used in the equations, appropriately called the Hubble constant, is allowed to vary with time.
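As a rough illustration of what "allowed to vary with time" could mean, the sketch below assumes a simple power law in redshift z, H0(z) = H0_local / (1 + z)^alpha. The article itself does not state the functional form, so the function name `h0_of_z`, the parameter names, and the numbers are all hypothetical and chosen only for illustration; they are not the team's fitted results.

```python
def h0_of_z(z, h0_local, alpha):
    """Assumed power-law form: H0(z) = h0_local / (1 + z)**alpha.

    alpha = 0 recovers a truly constant H0; a small positive alpha
    gives a value that slowly decreases toward higher redshift.
    """
    if z <= -1:
        raise ValueError("redshift must be greater than -1")
    return h0_local / (1.0 + z) ** alpha


# Illustrative values only (assumptions, not results from the paper).
H0_LOCAL = 70.0  # km/s/Mpc at z = 0
ALPHA = 0.01     # small evolutionary parameter

for z in (0.0, 0.5, 1.0, 2.0):
    print(f"z = {z:.1f}: H0 = {h0_of_z(z, H0_LOCAL, ALPHA):.2f} km/s/Mpc")
```

With `alpha` set to zero the function returns the same value at every redshift, which is the traditional constant case; any nonzero `alpha` encodes a slow drift that a fit to supernova data could then confirm or rule out.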

There are several possible explanations for this apparent change in the Hubble constant. A likely but boring possibility is that observational biases exist in the data sample. To help correct for potential biases, astronomers are using Hyper Suprime-Cam on the Subaru Telescope to observe fainter supernovae over a wide area. Data from this instrument will increase the sample of observed supernovae in the early Universe and reduce the uncertainty in the data.

But if the current results hold up under further investigation, and the Hubble constant is in fact changing, that opens the question of what is driving the change. Answering that question could require a new, or at least modified, version of astrophysics.

Reference: “On the Hubble Constant Tension in the SNe Ia Pantheon Sample” by M. G. Dainotti, B. De Simone, T. Schiavone, G. Montani, E. Rinaldi and G. Lambiase, 17 May 2021, Astrophysical Journal.

DOI: 10.3847/1538-4357/abeb73


13 Comments

The variability of the Hubble constant is only an apparent finding, because the analysis did not take into account the contraction of time with the expansion of the universe: time varies in proportion to the square root of universal expansion.

file:///C:/Users/O%20Meu%20PC/Downloads/ijp-9-2-5%20(4).pdf

http://www.sciepub.com/IJP/abstract/13173

Since observations, and therefore relativity, agree at most on time dilation for different observers, the nonexistence of the hypothesized “contraction” has been accounted for, and the change in expansion rate is a century-old finding [“Scale factor (cosmology)”, Wikipedia].

Your self-promoted pseudoscience links [ https://predatoryjournals.com/publishers/#S ] do not constitute evidence testing the purported hypothesis.

It is true that a theory can be presented to find the expansion rate established from observations of Type Ia supernovae. Calculations in this method require the introduction of new physics to explain the facts completely.

Note that, as the article suggests, this is an outstanding question.

Nobody knows.

A theory can be presented for the higher expansion rate of galaxies in the universe by a method that uses observational data from Type Ia supernovae. But to explain the results of its calculations, this method needs to introduce new physics.

Seeking an expansion constant is only a valid exercise *if* we make the assumption that the entire universe is influenced by a single common force acting uniformly across its entirety. However, as the evidence thus far suggests, cosmological expansion is neither uniform nor constant. It is therefore not caused by any single common force, but by apparently random and chaotic forces acting independently of one another that collectively give the appearance, at large scales, of a uniform expansion.

Let the evidence speak for itself and let’s stop trying to bash it into our preconceived mould!

The “Hubble constant” is a parameter of a rate function, and is referenced to “now”, so you have to define carefully what you mean by “constant” [ https://en.wikipedia.org/wiki/Scale_factor_(cosmology) ]. (It is easier and less error-prone to refer to the expansion rate.)

The cosmic background radiation shows that the universe at the time was homogeneous and isotropic, with fluctuations of 1 part in 100,000. Few cosmologists speculate about local (or worse) inhomogeneities.

“The cosmic microwave background radiation is an emission of uniform, black body thermal energy coming from all parts of the sky. The radiation is isotropic to roughly one part in 100,000: the root mean square variations are only 18 µK, after subtracting out a dipole anisotropy from the Doppler shift of the background radiation.”

[ https://en.wikipedia.org/wiki/Cosmic_microwave_background ]

…

“scientists have difficulty getting theoretical models to match observations”

If we assume our math is correct it could mean only three things:

* our theoretical knowledge is wrong,

* our observation is wrong,

* or our observation is wrong and our theoretical knowledge is wrong.

Either way, we need a priest to straighten out those scientists and put some sense into them, in order to move toward a more consistent theory…

…

Preprint here: https://arxiv.org/pdf/2103.02117.pdf .

Essentially, they run a lot of fits on various data sets without statistically testing whether the fits are suitable or best in some sense, and they put a lot of statistical weight on their low-z supernova sample. It is p-hacking of fits.

I don’t see much of a resolution in that. But they add supernova data, and that is welcome.

… is that like some implication that there might be a large dimension, and that will keep string theory alive, …

… the evidence for supersymmetry, please, …

Could universe respiration solve the flat/curve tension? Could it also explain the problem of early smb quasars and old stars that don’t fit models, including the “big bang”?

Is it all relative to time? For example, when matter first appeared, if there was expansion it would have been slow and stayed that way for a long time; it must have, or matter would have ceased to exist. We know that blackspheres make galaxies, so the reason the blackspheres at the centers of galaxies are so big is that when the first blacksphere appeared, time was moving very slowly, and that may be why they think there wasn’t enough time for them to grow that big. But if time was slower when things first started, then it could take as long as it took and we wouldn’t know the difference, and we would always end up with the same conclusion: there wasn’t enough time. So when we say it’s speeding up, what we’re really saying is that it’s not the beginning or the end, but the end of the beginning. The universe must have been packed with gas in all forms (liquid, frozen, vapor), so we don’t count the time it took for the first star to appear; it must have been gigantic compared to what’s out there now, the size of galaxies, I’m sure. So time is the key: there used to be lots, but the clock’s been ticking for a long time now, so not so much anymore. Good luck solving all the mysteries, but don’t forget to look around once in a while or you might miss the forest for the trees. Thanks for the video and keep up the hard work.