New research suggests supernova velocity measurements may be flawed, contributing to disagreements in the Hubble constant. The problem may go deeper, hinting at inconsistencies in the Universe’s composition.

Ever since the astronomer Edwin Hubble demonstrated that the farther apart two galaxies are, the faster they move away from each other, researchers have measured the expansion rate of the Universe (the Hubble constant) and the history of this expansion. Recently, a new puzzle has emerged: there seems to be a discrepancy between measurements of this expansion based on radiation from the early Universe and those based on nearby objects. Researchers from the Cosmic Dawn Center, at the Niels Bohr Institute, University of Copenhagen, have now contributed to this debate by focusing on velocity measurements. The result has been published in The Astrophysical Journal.

The researchers at the Cosmic Dawn Center found that the measurements of velocity used for determining the expansion rate of the Universe may not be reliable. As stated in the publication, this doesn’t resolve the discrepancies, but rather hints at an additional inconsistency in the composition of the Universe.

Measuring the Expansion Rate of the Universe

Currently, astronomers measure the expansion of the Universe using two very different techniques. One is based on measuring the relationship between distance and velocity of nearby galaxies, while the other stems from studying the background radiation from the very early Universe. Surprisingly, these two approaches currently find different expansion rates. If this discrepancy is real, the consequence will be a new and rather dramatic reinterpretation of the development of the Universe. However, it is also possible that the difference in the Hubble constant stems from incorrect measurements. It is difficult to measure distances in the Universe, so many studies have focused on improving and recalibrating distance measurements. In spite of this, the disagreement has not been resolved over the last four years.

The Velocity of the Remote Galaxies Is Easy To Measure – or so We Thought

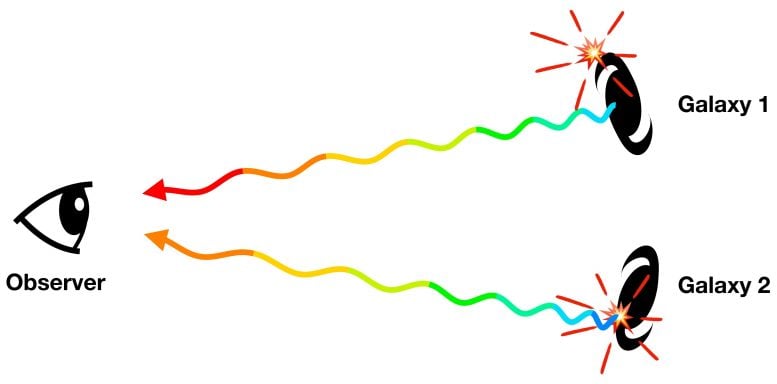

In a recent scientific article, the researchers from the Cosmic Dawn Center attempt to shine a light on a related problem: the measurement of velocity. The faster a remote object moves away from us, the more its light shifts to redder colors. Using this so-called redshift, it is possible to measure the velocity from the spectrum of a remote galaxy. Unlike distances, velocities were until now assumed to be relatively easy to measure.
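
As a minimal sketch of how a redshift is read off a spectrum, the snippet below compares the observed wavelength of one spectral line with its rest wavelength and converts the result to a velocity. The function names and the example wavelengths are illustrative assumptions, not taken from the paper, and the relation v ≈ cz is only a low-redshift approximation.

```python
# Illustrative only: turn a measured spectral-line shift into a redshift
# and an approximate recession velocity. Function names are hypothetical.

C_KM_S = 299_792.458  # speed of light in km/s


def redshift(observed_nm: float, rest_nm: float) -> float:
    """Redshift z = (observed - rest) / rest for a single spectral line."""
    return (observed_nm - rest_nm) / rest_nm


def velocity_low_z(z: float) -> float:
    """Low-redshift approximation v = c * z (only reasonable for z << 1)."""
    return C_KM_S * z


# Made-up example: the H-alpha line (rest wavelength 656.28 nm)
# observed at 669.41 nm in a galaxy spectrum.
z = redshift(669.41, 656.28)
print(f"z = {z:.4f}, v = {velocity_low_z(z):.0f} km/s (approx.)")
```

At the higher redshifts of distant supernovae, this simple proportionality no longer holds and a cosmological model is needed to interpret z.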

However, when the researchers recently examined distance and velocity measurements from more than 1000 supernovae (exploding stars) collected during the last 25 years, they found a surprising discrepancy in their results. Albert Sneppen, Master’s student at the Niels Bohr Institute, explains: “We’ve always believed that measuring velocities was fairly straightforward and precise, but it turns out that we are actually dealing with two types of redshifts.”

The first type, which measures the velocity with which the host galaxy moves away from us, is considered the most reliable. The other type of redshift instead measures the velocity of matter ejected from the exploding star inside the galaxy. Or, more precisely, the matter from the supernova moving towards us at a few percent of the velocity of light (illustration 1). After compensating for this extra movement, the redshift – and velocity – of the host galaxy can be determined. But this compensation requires a precise model for the explosion. The researchers found that the two techniques yield two different expansion histories for the Universe, and therefore two different compositions as well.
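
The compensation described above can be sketched as follows. This is not the authors' analysis pipeline: it only assumes the standard rule that Doppler and cosmological shifts combine multiplicatively, and the function names and the numbers (a host at z = 0.5, ejecta approaching at 3% of the speed of light) are hypothetical.

```python
import math


def doppler_factor(beta: float) -> float:
    """Relativistic Doppler factor (1 + z) for line-of-sight speed beta = v/c.

    Positive beta means receding (redshift); negative means approaching.
    """
    return math.sqrt((1.0 + beta) / (1.0 - beta))


def host_redshift(z_observed: float, beta_ejecta: float) -> float:
    """Remove the ejecta's Doppler shift from a supernova-based redshift.

    Assumes (1 + z_observed) = (1 + z_host) * (1 + z_ejecta).
    """
    return (1.0 + z_observed) / doppler_factor(beta_ejecta) - 1.0


# Hypothetical case: host galaxy at z = 0.5, ejecta approaching at 0.03 c.
z_true = 0.5
beta_true = -0.03
z_obs = (1.0 + z_true) * doppler_factor(beta_true) - 1.0

# Correcting with the right ejecta speed recovers the host redshift;
# an explosion model that is off by 0.001 c leaves a residual error.
z_good = host_redshift(z_obs, beta_true)
z_off = host_redshift(z_obs, beta_true + 0.001)
print(f"observed z  = {z_obs:.4f}")
print(f"recovered z = {z_good:.4f}; with model error: {z_off:.4f}")
```

In this toy case, a small error in the assumed ejecta speed carries over into a redshift error of roughly the same fractional size, which is why the compensation depends on a precise explosion model.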

Are Things “Broken in an Interesting Way?”

So, does this mean that the disagreement between measurements of the early Universe and newer measurements is ultimately a question of imprecise velocity measurements? Probably not, says Bidisha Sen, one of the authors of the article. “Even if we only use the more reliable redshifts, the supernova measurements not only continue to disagree with the Hubble constant measured from the early Universe – they also hint at a more general discrepancy regarding the composition of the Universe,” she says.

Charles Steinhardt, associate professor at the Niels Bohr Institute, is intrigued by these new results. “If we are actually dealing with two disagreements, it means that our current model would be ‘broken in an interesting way,’” he says. “In order to solve two problems, one regarding the composition of the Universe and one regarding the expansion rate of the Universe, rather different physical explanations are required than if we only want to explain a single discrepancy in the expansion rate.”

The Scientific Work Continues at the Nordic Optical Telescope

With the Nordic Optical Telescope on La Palma in the Canary Islands, the researchers are now acquiring new redshifts from the host galaxies. When they compare these results with the supernova-based redshifts, they will be able to see whether the two techniques still disagree. “We have learned that these sensitive measurements require precise measurements of velocity, and these will be attainable with fresh observations,” Steinhardt explains.

Reference: “Effects of Supernova Redshift Uncertainties on the Determination of Cosmological Parameters” by Charles L. Steinhardt, Albert Sneppen and Bidisha Sen, 8 October 2020, The Astrophysical Journal.

DOI: 10.3847/1538-4357/abb140

11 Comments

The clear result from the paper is that the universe is flat and that you need really precise supernova redshifts, < 10^-3 uncertainties [!], to derive unbiased cosmological parameters from them.

The hypothesis that these measurements may suggest new physics hinges on finding another tension in their data. The problem is that they cluster their data so it is hard to tell if it is a real tension.

As a comparison, this paper on supernova physics comes to another conclusion since they see different populations of supernovas, suggesting other types of clustering as well:

"Could a not-so-standard candle jeopardize those discoveries? “It means something, but not that dark energy goes away,” Woosley says. Dark energy has been confirmed using other methods, so he’s not worried about that. But he thinks cosmologists will run into trouble as they put their theories to more rigorous tests that require more precise standard candles. “Supernovae could be less useful for precision cosmology,” he says.

Astronomers already knew the peak brightness of type Ia supernovae isn’t perfectly consistent. To cope, they have worked out an empirical formula, known as the Phillips relation, that links peak brightness to the rate at which the light fades: Flashes that decay slowly are overall brighter than those that fade quickly. But more than 30% of type Ia supernovae stray far from the Phillips relation. Perhaps low-mass D6 explosions can explain these oddballs, Shen says. For now, those who wield the cosmic yardstick will need to “throw away anything that looks weird,” Gaensicke says, and hope for the best.

Andy Howell, a supernova watcher at Las Cumbres Observatory, thinks type Ia supernovae could still be reliable tools for cosmology if astronomers could separate the different varieties of type Ia that are now lumped together. “If we knew there were two populations, we could make the measurements even better,” he says."

[ https://www.sciencemag.org/news/2020/06/galaxy-s-brightest-explosions-go-nuclear-unexpected-trigger-pairs-dead-stars ]

If there is a real tension in the expansion rate observations after these population and uncertainty issues have been taken care of, the solution can be as simple as considering the magnetic fields that we observe between galaxies in the cosmic filaments. ["The Hidden Magnetic Universe Begins to Come Into View", Quanta Magazine]

But if the dark matter tension is real, that could be exciting! The recent paper that uses quantum field physics to constrain the nature and mass of dark matter suggests that at its simplest it is a scalar field particle which is unstable and is fine-tuned to last sufficiently long for galaxy formation ["Narrowing Down the Mass of Dark Matter", Universe Today; preprint arxiv 2009.11575].

The universe isn’t flat at all. I’ve been to other universes with the help of aliens, and I can tell you that our universe is all on its own until you get to the multiverse, or part of mega space. That’s what I call the space outside our universe; since I’ve seen it, I’ve named it. Anyway, aliens don’t come from our universe; they come from much, much further. I can tell you that our universe is like a grain of sand in comparison to what is out there. Don’t worry, there’s plenty of empty megaverse out there with one or maybe three universes, and trust me, you wouldn’t believe what I’ve seen out there. It’s amazing. Once we get technology up to their standards of flying spaceships, people can see how big the multiverse is, but our universe is seriously like a grain of sand. Black holes are amazing: windy and noisy like a vacuum cleaner. It’s a whole room in there; the sides go on well past the entry point. It’s weird, but there’s lots of life in the multiverse part of the megaverse. So far, in one direction there’s nothing but empty space and gas giants, but in another direction, after two hours flying in an alien spaceship going faster than the speed of light, you come to about 1 million universes. A universe looks like a star in the far distance; then you get close and go through the gas cloud. Like when leaving our universe, we passed through the microwave background. The truth is it’s leftover gas from the Big Bang, but you can go through it with no harm. Believe me or not, but that’s the truth. I’ve met greys from America to Australia. My yellow alien friends showed me their world, with stone temples and a Mayan- or Aztec-like pyramid. That’s the truth. I’m not a scientist, but I know what’s out there. How they found us, I have no idea.

It is as simple as this: if two galaxies consist of the same energy and mass at equal distance, they will move at equal space and time. If one galaxy is smaller than the other, it will move faster: less mass to push.

Cosmological expansion is not galaxies moving through space; it is space expanding in between them, which is why we see all galaxies separating. The exceptions are galaxies that, like those in our Local Group, are held together by gravity.

“Ever since the astronomer Edwin Hubble demonstrated that the further apart two galaxies are, the faster they move away from each other, researchers have measured the expansion rate of the Universe (the Hubble constant) and the history of this expansion.”

This pattern is also consistent with what is expected from a general relativistic universe (since a space in equilibrium would be unstable, and a universe can only expand or contract).

An entire universe hangs on Hubble’s interpretation of the red shift as a Doppler shift. Plus of course the General Theory of Relativity. Plus the profound assumption that Planck’s Constant, which is fundamental to the relationship between Energy and Frequency, has remained the same since time began, assuming that the speed of light in a single homogeneous medium (which space-time allegedly hasn’t ever been, hence the red-shift) has remained the same. It is interesting how the “afterglow” of the Big Bang is claimed as proof of the Big Bang when its interpretation as “afterglow” depends basically on Hubble’s interpretation of the red-shift. Does anyone else think this might be a circular argument? I suppose there are stranger things than fish in the sea and one of them is our universe and the fact that something can have come from nothing before nothing existed.

Well, the idea that “a circular argument” would be bad derives from philosophic superstition. Apparently they consider it bad for their empty discussions. Something similar can be said of the idea of “something … from nothing”, which derives from religious superstitions. Apparently they consider such an unobserved, untestable notion – that honestly reminds one of a baby’s development of object persistence notions – good for their empty discussions.

Science is famously *built* on circularity, since observations found theories and theories predict observations. A “perfect theory” would predict all the observations of its area and only new observations or theory work would improve on it. Science is a tool, and – in principle – who cares how it is constructed if it works?

The redshift can be independently observed in the cosmic background temperature since its emission derives from a plasma glow at ~ 3000 K and now its spectral blackbody temperature is ~3 K. That is a factor z = 1,000 redshift stretch of its optical glow peak into the microwave region peak.
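
As a rough check on the factor quoted above, assuming the blackbody temperature of the background radiation scales as $1 + z$ (the symbols $T_\text{emit}$ and $T_\text{now}$ are labels introduced here for the emission and present-day temperatures):

$$
1 + z = \frac{T_\text{emit}}{T_\text{now}} \approx \frac{3000\ \text{K}}{3\ \text{K}} = 1000
$$

Using the measured present-day temperature of about 2.725 K instead of the rounded 3 K gives a factor closer to 1,100.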

“Plus the profound assumption that Planck’s Constant, which is fundamental to the relationship between Energy and Frequency, has remained the same since time began, assuming that the speed of light in a single homogeneous medium (which space-time allegedly hasn’t ever been, hence the red-shift) has remained the same.”

Planck’s constant is a fundamental property of the quantum field wavefunction; it sets the quantized value of angular momentum (spin). It doesn’t matter whether space is filled with a homogeneous medium as long as space has quantum field physics.

But as it happens, we can observe from the cosmic background spectra that the universe started out homogeneous and isotropic, to 1 part in 10^5 in density and velocity fluctuations.

We can also see that space looks perfectly flat, an observation that since 2018 is so robustly tested that it isn’t expected to change according to astrophysicists that I have read (say, Ethan Siegel had some article claiming that). That is key, since a flat universe will have its laws conserved by Noether’s theorem applied to a general relativistic universe.

Perhaps we can consider the proposal by ‘Abdu’l-Bahá (Sir Abbas Effendi) that there are three types of matter, not two (regular and dark). I suggest that we call the third type “darker matter”. The proposal is that around each large mass there is a larger sphere of the second type of matter, and around that a “much larger sphere” of yet another type. This does not conflict with the concept of dark matter, but may better explain the observations that have been interpreted as dark energy. The proposal includes an ethereal matter that fills all space with the ability to transmit vibrations and pulses, “for absolute nothingness is an impossibility”. The clever re-branding of the ether as a form of time-space has been necessitated by recent observations and multiple models, but due to the branding of the term “ether” as “disproven” there is a PR problem in bringing it back. I am more interested in how things work than in supporting old narratives and genuflections. It may be that there are two or even three types of matter that may tax the energy in photons, resulting in a redshift (or part thereof) that represents the density of various interstellar media, not velocity. The universe may not be expanding at all. It also may not be finite in extent.

A superstitious idea? It is difficult to read out what it suggests, but it seems it suggests an “ether” which has been rejected by observations over a century ago.

Composition of the Universe is basic fact for occurrence of vary in parameter relating red shift in Hubble’s Constant establishing Distance-Velocity relationship between any two Galaxies. Other kind of vary in the Hubble constant due to expansion is considerable due to supernova expansion.

It is a fact that the expansion rate is decided by the composition of the universe [ https://en.wikipedia.org/wiki/Scale_factor_(cosmology) ].

But supernova events are local and do not significantly affect that composition or the rate [ibid.].

A commonly used tool in philosophy is the thought experiment. That is exactly the kind of examination that led to Einstein’s work on relativity. He imagined what it must be like to travel as a photon of light, amongst many other examples. “Empty arguments” indeed!