Researchers from the Technical University of Munich have been using GCS HPC resources to develop more efficient methods for producing graphene at industrial scale.

Graphene may be among the most exciting scientific discoveries of the last century. While it is strikingly familiar to us (graphene is an allotrope of carbon, meaning that it is essentially the same substance as graphite but with a different atomic structure), graphene has also opened up a new world of possibilities for designing and building new technologies.

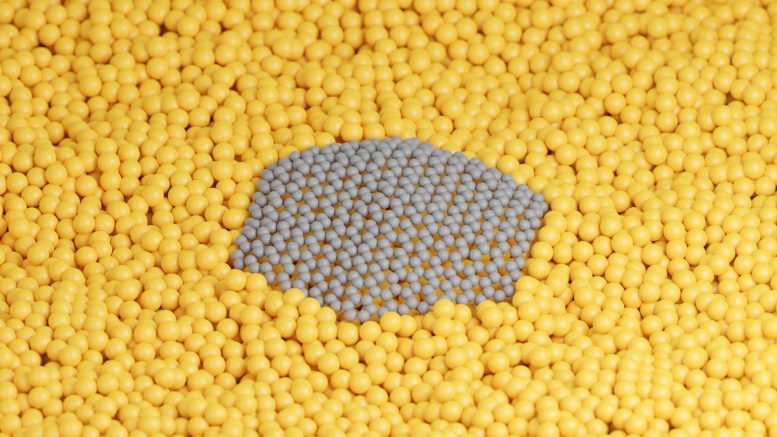

The material is two-dimensional, meaning that each “sheet” of graphene is only one atom thick, but its bonds make it as strong as some of the world’s hardest metal alloys while remaining lightweight and flexible. This valuable, unique mix of properties has piqued the interest of scientists from a wide range of fields, leading to research into using graphene for next-generation electronics, new coatings on industrial instruments and tools, and new biomedical technologies.

Graphene’s immense potential has also created one of its biggest challenges: demand for the material is continually growing, yet graphene is difficult to produce in large volumes. Recent research indicates that using a liquid copper catalyst may be a fast, efficient way to produce graphene, but researchers have only a limited understanding of the molecular interactions happening during the brief, chaotic moments that lead to graphene formation, meaning they cannot yet use the method to reliably produce flawless graphene sheets.

In order to address these challenges and help develop methods for quicker graphene production, a team of researchers at the Technical University of Munich (TUM) has been using the JUWELS and SuperMUC-NG high-performance computing (HPC) systems at the Jülich Supercomputing Centre (JSC) and Leibniz Supercomputing Centre (LRZ) to run high-resolution simulations of graphene formation on liquid copper.

A Window Into Experiment

Graphene’s appeal stems primarily from the material’s perfectly uniform crystal structure, meaning that producing graphene with impurities is wasted effort. For laboratory settings or circumstances where only a small amount of graphene is needed, researchers can place a piece of Scotch tape onto a graphite crystal and “peel” away atomic layers of the graphite, much as one would use tape or another adhesive to remove pet hair from clothing. While this reliably produces flawless graphene layers, the process is slow and impractical for creating graphene for large-scale applications.

Industry requires methods that can reliably produce high-quality graphene more cheaply and quickly. One of the more promising methods being investigated uses a liquid metal catalyst to facilitate the self-assembly of carbon atoms from molecular precursors into a single graphene sheet growing on top of the liquid metal. While the liquid offers the ability to scale up graphene production efficiently, it also introduces a host of complications, including the high temperatures required to melt the metals typically used, such as copper.

When designing new materials, researchers use experiments to see how atoms interact under a variety of conditions. While technological advances have opened up new ways of gaining insight into atomic-scale behavior even under extreme conditions such as very high temperatures, experimental techniques do not always allow researchers to observe the ultra-fast reactions that facilitate the correct changes to a material’s atomic structure (or what aspects of the reaction may have introduced impurities). This is where computer simulations can help. However, simulating the behavior of a dynamic system such as a liquid is not without its own set of complications.

“The problem describing anything like this is you need to apply molecular dynamics (MD) simulations to get the right sampling,” said Mie Andersen, a member of the TUM team. “Then, of course, there is the system size: you need to have a large enough system to accurately simulate the behavior of the liquid.” Unlike experiments, molecular dynamics simulations let researchers look at events happening on the atomic scale from a variety of angles, or pause the simulation to focus on different aspects.

While MD simulations offer researchers insights into the movement of individual atoms and chemical reactions that could not be observed during experiments, they do have their own challenges. Chief among them is the compromise between accuracy and cost — when relying on accurate ab initio methods to drive the MD simulations, it is extremely computationally expensive to get simulations that are large enough and last long enough to accurately model these reactions in a meaningful way.
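To make the accuracy-versus-cost trade-off concrete, the following is a minimal sketch of an MD loop, not the TUM team’s code. It assumes a classical Lennard-Jones pair potential in reduced units as a cheap stand-in for the force calculation; in ab initio MD, that step is replaced by a far more expensive electronic-structure calculation at every time step. The function names (`lj_forces`, `run_md`) and all parameter values are hypothetical and chosen only for illustration.

```python
# Illustrative sketch only: a velocity-Verlet MD loop with a pluggable force routine.
# The Lennard-Jones force below is an ASSUMED classical stand-in; ab initio MD would
# compute forces from an electronic-structure calculation instead.

def lj_forces(positions, epsilon=1.0, sigma=1.0):
    """Return (forces, potential_energy) for a Lennard-Jones pair potential."""
    n = len(positions)
    forces = [[0.0, 0.0, 0.0] for _ in range(n)]
    energy = 0.0
    for i in range(n):
        for j in range(i + 1, n):  # every pair once: cost grows as n**2
            d = [positions[i][k] - positions[j][k] for k in range(3)]
            r2 = sum(c * c for c in d)
            sr6 = (sigma * sigma / r2) ** 3
            energy += 4.0 * epsilon * (sr6 * sr6 - sr6)
            # Force on atom i is -dU/dr along d; atom j gets the opposite force.
            scale = 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r2
            for k in range(3):
                forces[i][k] += scale * d[k]
                forces[j][k] -= scale * d[k]
    return forces, energy


def kinetic_energy(velocities, mass=1.0):
    """Total kinetic energy for atoms of equal mass."""
    return 0.5 * mass * sum(c * c for v in velocities for c in v)


def run_md(positions, velocities, dt, n_steps, forces_fn=lj_forces, mass=1.0):
    """Advance the system n_steps with velocity Verlet; return (pos, vel, total_energy)."""
    pos = [list(p) for p in positions]
    vel = [list(v) for v in velocities]
    forces, potential = forces_fn(pos)
    for _ in range(n_steps):
        for i in range(len(pos)):
            for k in range(3):
                vel[i][k] += 0.5 * dt * forces[i][k] / mass  # first half kick
                pos[i][k] += dt * vel[i][k]                  # drift
        forces, potential = forces_fn(pos)  # one force evaluation per time step
        for i in range(len(pos)):
            for k in range(3):
                vel[i][k] += 0.5 * dt * forces[i][k] / mass  # second half kick
    return pos, vel, potential + kinetic_energy(vel, mass)


if __name__ == "__main__":
    # Three atoms at rest, slightly stretched from their preferred spacing.
    start = [(0.0, 0.0, 0.0), (1.2, 0.0, 0.0), (0.6, 1.0, 0.0)]
    at_rest = [(0.0, 0.0, 0.0)] * 3
    _, _, total = run_md(start, at_rest, dt=0.002, n_steps=500)
    print("total energy after 500 steps:", total)
```

Every pass through the loop calls the force routine once, so the total cost grows with both the number of atoms and the number of time steps. That is why system size and simulated time have to be traded against the accuracy of the force model.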

For the recent simulations, Andersen and her colleagues used about 2,500 cores on JUWELS in periods stretching over more than one month. Despite the massive computational effort, the team could still only simulate around 1,500 atoms over picoseconds. While these may sound like modest numbers, they are among the largest ab initio MD simulations of graphene on liquid copper performed to date. The team uses these highly accurate simulations to help develop cheaper methods for driving the MD simulations, so that it becomes possible to simulate larger systems and longer timescales without compromising accuracy.
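A rough back-of-the-envelope estimate gives a sense of scale. The sketch below assumes, purely for illustration, that all 2,500 cores were busy continuously for 30 days and that the simulation covered 10 picoseconds with a 1-femtosecond time step. The article specifies none of these details, so the results indicate orders of magnitude only.

```python
# Back-of-the-envelope sketch. The 30-day continuous run, the 10 ps duration, and
# the 1 fs time step are ASSUMPTIONS for illustration, not figures from the team.

def core_hours(cores, days, utilization=1.0):
    """Core-hours consumed by `cores` cores over `days` days at a given utilization."""
    return cores * days * 24 * utilization


def md_steps(simulated_ps, timestep_fs):
    """Number of MD time steps needed to cover `simulated_ps` picoseconds."""
    return round(simulated_ps * 1000 / timestep_fs)  # 1 ps = 1000 fs


if __name__ == "__main__":
    print("core-hours:", core_hours(cores=2500, days=30))
    print("time steps:", md_steps(simulated_ps=10, timestep_fs=1.0))
```

Under these assumptions, the run would consume on the order of a million core-hours for only thousands of time steps, which illustrates why cheaper ways of driving the MD simulations are attractive.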

Strengthening Links in the Chain

The team published its record-breaking simulation work in the Journal of Chemical Physics, then compared those simulations with experimental data in its most recent paper, which appeared in ACS Nano.

Andersen indicated that current-generation supercomputers, such as JUWELS and SuperMUC-NG, enabled the team to run its simulations. Next-generation machines, however, would open up even more possibilities, as researchers could more rapidly simulate larger systems over longer periods of time.

Andersen received her PhD in 2014, and indicated that graphene research has exploded during the same period. “It is fascinating that the material is such a recent research focus — it is almost encapsulated in my own scientific career that people have looked closely at it,” she said. Despite the need for more research into using liquid catalysts to produce graphene, Andersen indicated that the two-pronged approach of using both HPC and experiment would be essential to further graphene’s development and, in turn, use in commercial and industrial applications. “In this research, there is a great interplay between theory and experiment, and I have been on both sides of this research,” she said.

Reference: “Real-Time Multiscale Monitoring and Tailoring of Graphene Growth on Liquid Copper” by Maciej Jankowski, Mehdi Saedi, Francesco La Porta, Anastasios C. Manikas, Christos Tsakonas, Juan S. Cingolani, Mie Andersen, Marc de Voogd, Gertjan J. C. van Baarle, Karsten Reuter, Costas Galiotis, Gilles Renaud, Oleg V. Konovalov and Irene M. N. Groot, 1 June 2021, ACS Nano.

DOI: 10.1021/acsnano.0c10377

Funding for JUWELS and SuperMUC-NG was provided by the Bavarian State Ministry of Science and the Arts, the Ministry of Culture and Research of the State of North Rhine-Westphalia, and the German Federal Ministry of Education and Research through the Gauss Centre for Supercomputing (GCS).
