Stanford researchers have developed a new phase-change memory that could help computers process large amounts of data faster and more efficiently.

We are tasking our computers with processing ever-increasing amounts of data to speed up drug discovery, improve weather and climate predictions, train artificial intelligence, and much more. To keep up with this demand, we need faster, more energy-efficient computer memory than ever before.

Innovations in Memory Technology

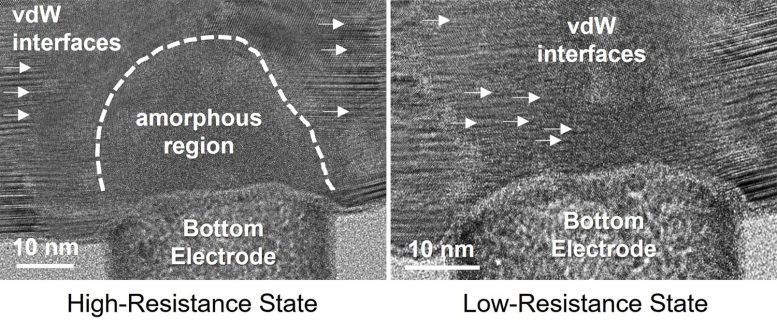

Researchers at Stanford have demonstrated that a new material may make phase-change memory – which relies on switching between high and low resistance states to create the ones and zeroes of computer data – an improved option for future AI and data-centric systems. Their scalable technology, as detailed recently in Nature Communications, is fast, low-power, stable, long-lasting, and can be fabricated at temperatures compatible with commercial manufacturing.
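To make the "ones and zeroes" idea concrete, here is a minimal Python sketch of how a read circuit might turn a measured cell resistance into a bit. The threshold value, the example resistances, and the convention that low resistance reads as 1 are all assumptions for illustration. They are not details of the Stanford device.

```python
# Hypothetical read threshold; real memory arrays calibrate this per device.
READ_THRESHOLD_OHMS = 100_000.0


def read_bit(resistance_ohms: float, threshold_ohms: float = READ_THRESHOLD_OHMS) -> int:
    """Map a measured cell resistance to a logical bit.

    Assumed convention: low resistance (crystalline, "SET") reads as 1,
    high resistance (amorphous, "RESET") reads as 0.
    """
    if resistance_ohms <= 0:
        raise ValueError("resistance must be positive")
    return 1 if resistance_ohms < threshold_ohms else 0


def read_word(resistances: list[float]) -> list[int]:
    """Read several cells in a row, one bit per cell."""
    return [read_bit(r) for r in resistances]


print(read_word([5e3, 2e6, 8e3, 1e6]))  # [1, 0, 1, 0]
```

The gap between the two resistance states is what makes a single threshold workable. If that gap shrinks, the read becomes error-prone.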

“We are not just improving on a single metric, such as endurance or speed; we are improving several metrics simultaneously,” said Eric Pop, the Pease-Ye Professor of Electrical Engineering and professor, by courtesy, of materials science and engineering at Stanford. “This is the most realistic, industry-friendly thing we’ve built in this sphere. I’d like to think of it as a step towards a universal memory.”

Enhancing Computing Efficiency

Today’s computers store and process data in separate locations. Volatile memory, which is fast but loses its contents when your computer turns off, holds the data the processor is actively working on. Nonvolatile memory, which is slower but retains information without constant power, takes care of long-term storage. Shuttling information between these two locations can create bottlenecks, with the processor waiting while large amounts of data are retrieved.

“It takes a lot of energy to shuttle data back and forth, especially with today’s computing workloads,” said Xiangjin Wu, co-lead author on the paper and a doctoral candidate co-advised by Pop and Philip Wong, the Willard R. and Inez Kerr Bell Professor in the School of Engineering. “With this type of memory, we’re really hoping to bring the memory and processing closer together, ultimately into one device, so that it uses less energy and time.”

Building an effective, commercially viable universal memory involves many technical hurdles. Such a memory must handle both long-term storage and fast, low-power processing without sacrificing other metrics. The new phase-change memory developed in Pop’s lab comes as close as anyone has so far with this technology, and the researchers hope it will inspire further development and adoption as a universal memory.

The Promise of GST467 Alloy

The memory relies on GST467, an alloy of four parts germanium, six parts antimony, and seven parts tellurium, which was developed by collaborators at the University of Maryland. Pop and his colleagues found ways to sandwich the alloy between several other nanometer-thin materials in a superlattice, a layered structure they’ve previously used to achieve good nonvolatile memory results.
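The 4:6:7 recipe can be converted into atomic percentages with a few lines of Python. This sketch assumes the "parts" are atomic ratios rather than ratios by mass. Under that assumption, germanium makes up roughly 23.5 percent of the atoms, antimony 35.3 percent, and tellurium 41.2 percent.

```python
from fractions import Fraction

# Parts per formula unit for GST467 as described in the article (Ge:Sb:Te = 4:6:7).
# Assumption: these are atomic ratios, not ratios by mass.
GST467 = {"Ge": 4, "Sb": 6, "Te": 7}


def atomic_fractions(parts: dict[str, int]) -> dict[str, Fraction]:
    """Return each element's exact share of the total atom count."""
    total = sum(parts.values())
    return {element: Fraction(n, total) for element, n in parts.items()}


for element, frac in atomic_fractions(GST467).items():
    # Prints the exact fraction alongside a rounded percentage.
    print(f"{element}: {frac} = {float(frac):.1%}")
```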

“The unique composition of GST467 gives it a particularly fast switching speed,” said Asir Intisar Khan, who earned his doctorate in Pop’s lab and is co-lead author on the paper. “Integrating it within the superlattice structure in nanoscale devices enables low switching energy, gives us good endurance, very good stability, and makes it nonvolatile – it can retain its state for 10 years or longer.”

Setting a New Bar

The GST467 superlattice clears several important benchmarks. Phase-change memory can sometimes drift over time (the resistance levels that represent the ones and zeros slowly shift), but the researchers’ tests show that this memory is extremely stable. It also operates below 1 volt, the target for low-power technology, and is significantly faster than a typical solid-state drive.
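Resistance drift in phase-change memory is commonly described with an empirical power law, R(t) = R0 × (t / t0)^ν. Here, a smaller drift coefficient ν means a more stable cell. The sketch below compares two hypothetical values of ν over ten years. Neither value comes from the paper, and the reference time t0 = 1 second is also an assumption.

```python
def drifted_resistance(r0_ohms: float, t_seconds: float, nu: float,
                       t0_seconds: float = 1.0) -> float:
    """Empirical power-law drift model: R(t) = R0 * (t / t0) ** nu.

    r0_ohms is the resistance measured at the reference time t0_seconds.
    The model is only meaningful for t >= t0.
    """
    if t_seconds < t0_seconds:
        raise ValueError("model applies for t >= t0")
    return r0_ohms * (t_seconds / t0_seconds) ** nu


TEN_YEARS_S = 10 * 365.25 * 24 * 3600

# Hypothetical drift coefficients: a fast-drifting cell vs. a low-drift cell.
for nu in (0.1, 0.005):
    ratio = drifted_resistance(1.0, TEN_YEARS_S, nu)
    print(f"nu={nu}: resistance grows by a factor of {ratio:.2f} over 10 years")
```

A small ν keeps the two resistance states well separated for years. That separation is what "stable" means for a memory cell.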

“A few other types of nonvolatile memory can be a bit faster, but they operate at higher voltage or higher power,” Pop said. “With all these computing technologies, there are tradeoffs between speed and energy. The fact that we’re switching at a few tens of nanoseconds while operating below one volt is a big deal.”
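The speed-versus-energy tradeoff Pop describes follows from simple arithmetic. The electrical energy of a rectangular programming pulse is voltage × current × duration, so lowering the voltage or shortening the pulse cuts energy directly. The sketch below uses illustrative values: 0.8 volts, an assumed 50 microamps, and 40 nanoseconds. The current in particular is a placeholder, not a measurement from the study.

```python
def pulse_energy_joules(voltage_v: float, current_a: float, duration_s: float) -> float:
    """Energy delivered by a rectangular pulse: E = V * I * t."""
    return voltage_v * current_a * duration_s


# Illustrative, hypothetical pulse parameters (not figures from the paper).
VOLTAGE_V = 0.8      # below 1 volt, as the article describes
CURRENT_A = 50e-6    # assumed programming current
DURATION_S = 40e-9   # "a few tens of nanoseconds"

energy = pulse_energy_joules(VOLTAGE_V, CURRENT_A, DURATION_S)
print(f"{energy * 1e12:.2f} pJ per switching pulse")
```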

The superlattice also packs a large number of memory cells into a small space. The researchers have shrunk the memory cells down to 40 nanometers in diameter, less than half the size of a coronavirus. That’s not quite as dense as it could be, but the researchers are exploring ways to compensate by stacking the memory in vertical layers. Stacking is possible thanks to the superlattice’s low fabrication temperature and the techniques used to create it.

“The fabrication temperature is well below what you need,” Pop said. “People are talking about stacking memory in thousands of layers to increase density. This type of memory can enable such future 3D layering.”
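A back-of-the-envelope estimate shows why stacking matters. This Python sketch counts cells per square millimeter on a square grid and multiplies by the number of layers. The 40-nanometer cell size comes from the article. The 80-nanometer spacing between cell centers, the one bit per cell, and the layer counts are hypothetical.

```python
def cells_per_mm2(pitch_nm: float) -> float:
    """Cells per square millimeter on a square grid with the given center-to-center pitch."""
    if pitch_nm <= 0:
        raise ValueError("pitch must be positive")
    nm_per_mm = 1_000_000
    cells_per_side = nm_per_mm / pitch_nm
    return cells_per_side ** 2


def stacked_bits_per_mm2(pitch_nm: float, layers: int, bits_per_cell: int = 1) -> float:
    """Areal bit density when identical layers are stacked vertically."""
    return cells_per_mm2(pitch_nm) * layers * bits_per_cell


CELL_DIAMETER_NM = 40                     # from the article
ASSUMED_PITCH_NM = 2 * CELL_DIAMETER_NM   # hypothetical spacing between cell centers

for layers in (1, 10, 1000):
    print(f"{layers:>5} layers: {stacked_bits_per_mm2(ASSUMED_PITCH_NM, layers):.3g} bits/mm^2")
```

Because density scales linearly with layer count in this simple model, stacking can offset a cell footprint that is larger than ideal.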

Reference: “Novel nanocomposite-superlattices for low energy and high stability nanoscale phase-change memory” by Xiangjin Wu, Asir Intisar Khan, Hengyuan Lee, Chen-Feng Hsu, Huairuo Zhang, Heshan Yu, Neel Roy, Albert V. Davydov, Ichiro Takeuchi, Xinyu Bao, H.-S. Philip Wong and Eric Pop, 22 January 2024, Nature Communications.

DOI: 10.1038/s41467-023-42792-4

Pop is a member of Stanford SystemX Alliance and an affiliate of SLAC and the Precourt Institute for Energy. Wong is a professor of electrical engineering, a member of Stanford Bio-X, the Wu Tsai Neurosciences Institute, and an affiliate of the Precourt Institute for Energy.

Additional co-authors are from Taiwan Semiconductor Manufacturing Company, the National Institute of Standards and Technology, Theiss Research Inc, University of Maryland, and Tianjin University.

This work was funded by the Stanford Non-Volatile Memory Technology Research Initiative, the Semiconductor Research Corporation, the U.S. Department of Commerce, and the National Institute of Standards and Technology.
