Newly published research from Yale’s Department of Neuroscience provides some clues to how cells in the visual cortex direct sensory information to different targets throughout the brain.

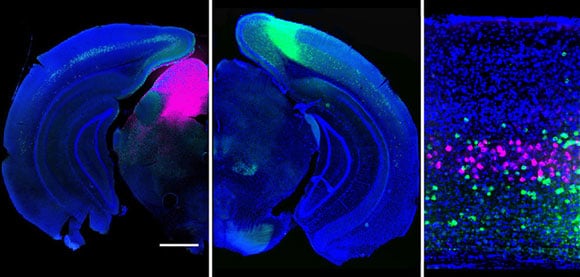

Understanding how the brain manages to process the deluge of information about the outside world has been a daunting challenge. By imaging activity in the mouse brain, the researchers illustrated how neuron types (fluorescently tagged magenta and green) that project to different areas of the brain extract distinct features from a visual scene.

“These results demonstrate how the brain processes multiple sensory inputs in parallel,” said Michael J. Higley, one of the study’s authors, noting that the findings help reveal the normal flow of information in the brain, opening new avenues for understanding how perturbations of these systems might contribute to abnormal behavior.

Reference: “Projection-Specific Visual Feature Encoding by Layer 5 Cortical Subnetworks” by Gyorgy Lur, Martin A. Vinck, Lan Tang, Jessica A. Cardin and Michael J. Higley, 10 March 2016, Cell Reports.

DOI: 10.1016/j.celrep.2016.02.050
