In a study exploring human-robot interactions within a virtual reality setting, researchers found that humans can feel secondhand embarrassment for robots in awkward situations. The study combined subjective ratings with physiological measurements to quantify how much empathic embarrassment humans feel towards robots.

Research Methodology

The research team, led by Ph.D. candidate Harin Hapuarachchi and Professor Michiteru Kitazaki from Toyohashi University of Technology, set out to test whether humans show empathic responses when robots, rather than humans, are placed in embarrassing scenarios.

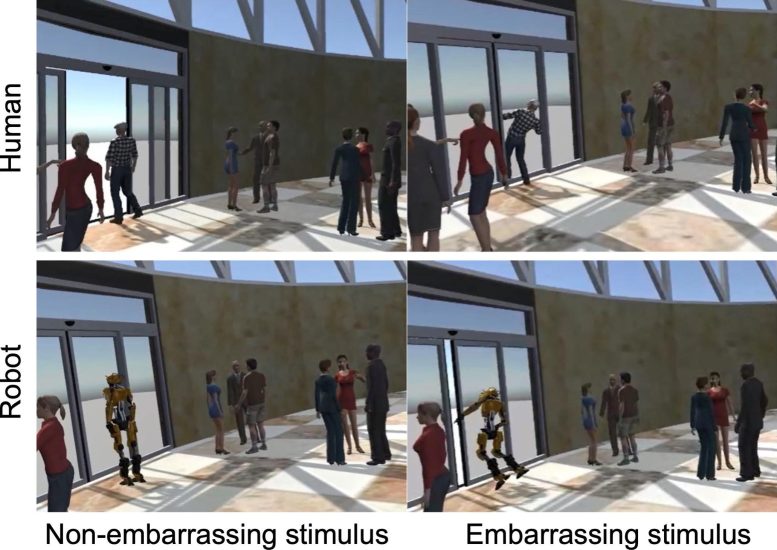

To test this, the researchers showed participants a series of virtual environments in which human and robot avatars went through situations that were either mildly embarrassing or non-embarrassing. The scenarios were designed to evoke feelings associated with making a mistake or with discomfort.

The study employed a comprehensive approach to measure the participants’ reactions. Two primary dimensions of empathy were investigated: empathic embarrassment and cognitive empathy.

Empathic embarrassment refers to the ability to share in the emotional experience of another’s embarrassment, while cognitive empathy involves understanding and estimating the feelings of another individual. Participants were asked to provide subjective ratings on a 7-point Likert scale, evaluating both their own empathic embarrassment and their estimation of the avatar’s embarrassment in each scenario.
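As a rough illustration of how ratings from such a design could be tabulated, the Python sketch below averages the two 7-point ratings for each combination of actor and situation. It is only a sketch under stated assumptions: the record layout, the `condition_means` helper, and every number in `ratings` are invented for this example and are not the study's data or the authors' analysis.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical ratings on the 1-7 scale, invented for illustration only.
# These are NOT data from the study.
# Record layout (assumed): (actor, situation, own_rating, estimated_rating)
#   own_rating       -> the participant's own empathic embarrassment
#   estimated_rating -> the participant's estimate of the avatar's embarrassment
ratings = [
    ("human", "embarrassing", 5, 6),
    ("human", "embarrassing", 4, 6),
    ("human", "non_embarrassing", 2, 2),
    ("human", "non_embarrassing", 1, 2),
    ("robot", "embarrassing", 4, 4),
    ("robot", "embarrassing", 5, 5),
    ("robot", "non_embarrassing", 1, 1),
    ("robot", "non_embarrassing", 2, 2),
]


def condition_means(records):
    """Return {(actor, situation): (mean own rating, mean estimated rating)}."""
    grouped = defaultdict(list)
    for actor, situation, own, estimated in records:
        # Reject values that fall outside the 7-point scale.
        if not (1 <= own <= 7 and 1 <= estimated <= 7):
            raise ValueError("ratings must be on the 1-7 scale")
        grouped[(actor, situation)].append((own, estimated))
    return {
        key: (mean(o for o, _ in vals), mean(e for _, e in vals))
        for key, vals in grouped.items()
    }


means = condition_means(ratings)
for (actor, situation), (own, est) in sorted(means.items()):
    print(f"{actor:5} {situation:16} own={own:.1f} estimated={est:.1f}")
```

Comparing the four condition means side by side is only a descriptive first step; whether any difference is statistically significant would need a proper test on the real data.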

Physiological Measurements and Findings

The researchers also recorded participants’ skin conductance responses as an objective measure of their physiological reactions. Skin conductance response is an established indicator of emotional arousal and gives a sense of how intense an emotional experience is.

Participants reported both empathic embarrassment and cognitive empathy towards human and robot avatars alike when the avatars encountered embarrassing situations. Both measures were significantly higher in embarrassing scenarios than in non-embarrassing ones, regardless of whether the actor was a human or a robot.

Short description of the study. Credit: Toyohashi University of Technology

However, a notable distinction emerged between responses to human and robot avatars. Cognitive empathy, the ability to understand another’s feelings, was stronger for human actors than for robot actors. Skin conductance responses also showed a trend towards higher emotional arousal when participants watched a human avatar in embarrassing scenarios than when they watched a robot avatar, but this difference was not statistically significant.

Implications and Future Directions

These findings offer a glimpse into the complex dynamics of human empathy towards robots. While the study shows that humans can feel both empathic embarrassment and cognitive empathy towards robots, the gap in cognitive empathy suggests that people may understand robots’ emotional experiences differently from those of other humans.

Harin Hapuarachchi, the lead researcher on the project, stated, “Our study provides valuable insights into the evolving nature of human-robot relationships. As technology continues to integrate into our daily lives, understanding the emotional responses we have towards robots is crucial. This research opens up new avenues for exploring the boundaries of human empathy and the potential challenges and benefits of human-robot interactions.”

The research not only advances our understanding of human empathy but also holds implications for fields such as robotics, psychology, and human-computer interaction. As society continues to embrace robotic technology, these findings pave the way for further exploration into the emotional dimensions of our interactions with machines.

Reference: “Empathic embarrassment towards non-human agents in virtual environments” by Harin Hapuarachchi, Kento Higashihata, Maruta Sugiura, Atsushi Sato, Shoji Itakura and Michiteru Kitazaki, 12 September 2023, Scientific Reports.

DOI: 10.1038/s41598-023-41042-3

The research was supported by JST ERATO Grant Number JPMJER1701 (Inami JIZAI Body Project), and JSPS KAKENHI Grant Number JP20H04489.

