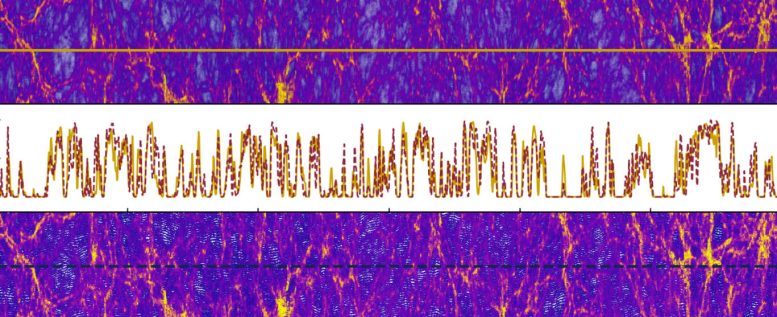

Simulations of Lyman-𝛼 forest spectral data from PRIYA, the largest simulation suite of its kind, run on the Frontera supercomputer, illustrate the large-scale structure of the universe.

Distant quasars shine like cosmic beacons, producing the brightest light in the universe. These quasars outshine even our entire Milky Way galaxy in terms of light emission. This immense light originates from matter being torn apart as it is consumed by a supermassive black hole. Cosmological parameters serve as crucial numerical tools for astronomers, allowing them to track the universe’s evolution billions of years following the Big Bang.

As quasar light shines through enormous clouds of neutral hydrogen gas, which formed shortly after the Big Bang and span 20 million light-years or more, it reveals clues about the large-scale structure of the universe.

Advancements in Simulation Technology

Using quasar light data, the National Science Foundation (NSF)-funded Frontera supercomputer at the Texas Advanced Computing Center (TACC) helped astronomers develop PRIYA, the largest suite of hydrodynamic simulations yet made for simulating large-scale structure in the universe.

“We’ve created a new simulation model to compare data that exists at the real universe,” said Simeon Bird, an assistant professor in astronomy at the University of California, Riverside.

Bird and colleagues developed PRIYA, which takes optical light data from the Extended Baryon Oscillation Spectroscopic Survey (eBOSS) of the Sloan Digital Sky Survey (SDSS). They published their work announcing PRIYA in October 2023 in the Journal of Cosmology and Astroparticle Physics (JCAP).

“We compare eBOSS data to a variety of simulation models with different cosmological parameters and different initial conditions to the universe, such as different matter densities,” Bird explained. “You find the one that works best and how far away from that one you can go without breaking the reasonable agreement between the data and simulations. This knowledge tells us how much matter there is in the universe, or how much structure there is in the universe.”
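The comparison Bird describes, finding the best-fitting model and then how far the parameters can move before agreement breaks down, can be illustrated with a toy chi-square scan. This is only a sketch under stated assumptions and not the PRIYA analysis itself: the one-parameter `toy_model`, the mock data, the 10% errors, and the Δχ² ≤ 1 threshold are all hypothetical choices made for illustration.

```python
def toy_model(k, amplitude):
    """Hypothetical stand-in for a simulated power spectrum (not PRIYA output)."""
    return amplitude * k ** -1.0


def chi_square(data, errors, prediction):
    """Sum of squared, error-weighted differences between data and model."""
    return sum(((d - p) / e) ** 2 for d, p, e in zip(data, prediction, errors))


def scan_amplitude(ks, data, errors, candidates):
    """Return the best-fitting amplitude and the range with delta chi^2 <= 1."""
    scores = {
        a: chi_square(data, errors, [toy_model(k, a) for k in ks])
        for a in candidates
    }
    best = min(scores, key=scores.get)
    allowed = [a for a in candidates if scores[a] - scores[best] <= 1.0]
    return best, min(allowed), max(allowed)


# Mock, noise-free "observations" generated from an assumed true amplitude.
ks = [0.5, 1.0, 2.0, 4.0, 8.0]
true_amplitude = 0.8
data = [toy_model(k, true_amplitude) for k in ks]
errors = [0.1 * d for d in data]  # assumed 10% measurement errors

# Grid of candidate models, analogous to a set of simulations.
candidates = [round(0.5 + 0.01 * i, 2) for i in range(61)]

best, low, high = scan_amplitude(ks, data, errors, candidates)
print(f"best fit: {best}, allowed range: {low} to {high}")
```

With these made-up inputs the scan recovers the input amplitude and reports the neighbouring values that still fit. A real analysis would vary many parameters at once and use simulation outputs in place of `toy_model`.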

The Role of PRIYA in Cosmological Research

The PRIYA simulation suite is connected to large-scale cosmological simulations also co-developed by Bird, called ASTRID, which is used to study galaxy formation, the coalescence of supermassive black holes, and the re-ionization period early in the history of the universe. PRIYA goes a step further. It takes the galaxy information and the black hole formation rules found in ASTRID and changes the initial conditions.

“With these rules, we can take the model that we developed that matches galaxies and black holes, and then we change the initial conditions and compare it to the Lyman-𝛼 forest data from eBOSS of the neutral hydrogen gas,” Bird said.

The ‘Lyman-𝛼 forest’ gets its name from the ‘forest’ of closely packed absorption lines on a graph of the quasar spectrum resulting from electron transitions between energy levels in atoms of neutral hydrogen. The ‘forest’ indicates the distribution, density, and temperature of enormous intergalactic neutral hydrogen clouds. What’s more, the lumpiness of the gas indicates the presence of dark matter, a hypothetical substance that cannot be seen yet is evident by its observed tug on galaxies.
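Why the lines form a ‘forest’ can be sketched with one formula: light absorbed at the Lyman-α rest wavelength (about 1215.67 ångströms) by a cloud at redshift z is observed at that wavelength multiplied by (1 + z), so clouds at different distances leave lines at different positions in the quasar spectrum. The redshift values below are made-up examples, not eBOSS measurements.

```python
LYMAN_ALPHA_REST_ANGSTROM = 1215.67  # rest wavelength of hydrogen Lyman-alpha


def observed_wavelength(z_absorber):
    """Wavelength at which a cloud at redshift z_absorber absorbs, as seen on Earth."""
    return LYMAN_ALPHA_REST_ANGSTROM * (1.0 + z_absorber)


def forest_line_positions(z_quasar, cloud_redshifts):
    """Sorted absorption-line wavelengths from clouds in front of the quasar."""
    return sorted(
        observed_wavelength(z) for z in cloud_redshifts if z < z_quasar
    )


# Hypothetical quasar and intervening clouds; the last cloud lies behind
# the quasar and therefore leaves no line in its spectrum.
z_quasar = 3.0
cloud_redshifts = [2.2, 2.5, 2.51, 2.8, 3.4]

for wavelength in forest_line_positions(z_quasar, cloud_redshifts):
    print(f"{wavelength:.1f} angstroms")
```

Each intervening cloud contributes its own line, and many clouds along one line of sight produce the closely packed pattern described above.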

Refining Cosmological Parameters with PRIYA

PRIYA simulations have been used to refine cosmological parameters in work submitted to JCAP in September 2023 and authored by Simeon Bird and his UC Riverside colleagues, M.A. Fernandez and Ming-Feng Ho.

Previous analyses of the neutrino mass parameters did not agree with data from the Cosmic Microwave Background radiation (CMB), described as the afterglow of the Big Bang. Astronomers use CMB data from the Planck space observatory to place tight constraints on the mass of neutrinos. Neutrinos are the most abundant particle in the universe, so pinpointing their mass value is important for cosmological models of large-scale structure in the universe.

“We made a new analysis with simulations that were a lot larger and better designed than anything before. The earlier discrepancies with the Planck CMB data disappeared, and were replaced with another tension, similar to what is seen in other low redshift large-scale structure measurements,” Bird said. “The main result of the study is to confirm the σ8 tension between CMB measurements and weak lensing exists out to redshift 2, ten billion years ago.”

One well-constrained parameter from the PRIYA study is σ8, which is the amount of neutral hydrogen gas structures on a scale of 8 megaparsecs, or about 26 million light-years. “This indicates the number of clumps of dark matter that are floating around there,” Bird said.

Another parameter constrained was ns, the scalar spectral index. It is connected to how the clumpiness of dark matter varies with the size of the region analyzed. It indicates how fast the universe was expanding just moments after the Big Bang.
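In the standard parameterization, the primordial fluctuation power is a power law in wavenumber k with exponent (ns − 1), so ns controls how clumpiness changes with scale. A minimal sketch, in which the amplitude and pivot scale are illustrative assumptions rather than PRIYA results:

```python
def primordial_power(k, n_s, amplitude=2.1e-9, k_pivot=0.05):
    """Dimensionless primordial power at wavenumber k (in 1/Mpc).

    The amplitude and pivot scale defaults are illustrative assumptions.
    """
    return amplitude * (k / k_pivot) ** (n_s - 1.0)


def small_to_large_scale_ratio(n_s, k_small_scale=1.0, k_large_scale=0.01):
    """Power on small scales (large k) relative to large scales (small k)."""
    return primordial_power(k_small_scale, n_s) / primordial_power(k_large_scale, n_s)


# n_s = 1 gives equal power on all scales; n_s below 1 tilts the
# spectrum so that small regions start out slightly less clumpy.
for n_s in (1.0, 0.96):
    print(n_s, small_to_large_scale_ratio(n_s))
```

A value of ns equal to 1 would mean the same primordial power on every scale, while a value slightly below 1 means less power in small regions than in large ones.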

“The scalar spectral index sets up how the universe behaves right at the beginning. The whole idea of PRIYA is to work out the initial conditions of the universe, and how the high energy physics of the universe behaves,” Bird said.

The Impact of Supercomputing on Cosmological Studies

Supercomputers were needed for the PRIYA simulations, Bird explained, simply because they were so big.

“The memory requirements for PRIYA simulations are so big you cannot put them on anything other than a supercomputer,” Bird said.

TACC awarded Bird a Leadership Resource Allocation on the Frontera supercomputer. Additionally, analysis computations were performed using the resources of the UC Riverside High-Performance Computer Cluster.

The PRIYA simulations on Frontera are some of the largest cosmological simulations yet made, needing over 100,000 core-hours to simulate a system of 3072^3 (about 29 billion) particles in a ‘box’ 120 megaparsecs on a side, or about 391 million light-years across. PRIYA simulations consumed over 600,000 node hours on Frontera.
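The particle count and the size of the simulation box can be checked with a few lines of arithmetic. The sketch below assumes the standard conversion of roughly 3.2616 million light-years per megaparsec; the function and constant names are chosen here for illustration.

```python
LIGHT_YEARS_PER_MEGAPARSEC = 3.2616e6  # approximate standard conversion


def megaparsecs_to_million_light_years(megaparsecs):
    """Convert a length in megaparsecs to millions of light-years."""
    return megaparsecs * LIGHT_YEARS_PER_MEGAPARSEC / 1e6


particles_per_side = 3072
total_particles = particles_per_side ** 3
box_side_mly = megaparsecs_to_million_light_years(120)

print(f"particles: {total_particles:,}")
print(f"box side: {box_side_mly:.0f} million light-years")
```

The particle count comes to 28,991,029,248, or about 29 billion, and a 120-megaparsec box side corresponds to roughly 391 million light-years.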

“Frontera was very important to the research because the supercomputer needed to be big enough that we could run one of these simulations fairly easily, and we needed to run a lot of them. Without something like Frontera, we wouldn’t be able to solve them. It’s not that it would take a long time; they just wouldn’t be able to run at all,” Bird said.

In addition, TACC’s Ranch system provided long-term storage for PRIYA simulation data.

“Ranch is important, because now we can reuse PRIYA for other projects. This could double or triple our science impact,” Bird said.

“Our appetite for more compute power is insatiable,” Bird concluded. “It’s crazy that we’re sitting here on this little planet observing most of the universe.”

Reference: “PRIYA: a new suite of Lyman-α forest simulations for cosmology” by Simeon Bird, Martin Fernandez, Ming-Feng Ho, Mahdi Qezlou, Reza Monadi, Yueying Ni, Nianyi Chen, Rupert Croft and Tiziana Di Matteo, 11 October 2023, Journal of Cosmology and Astroparticle Physics.

DOI: 10.1088/1475-7516/2023/10/037

The study was funded by the National Science Foundation and NASA Headquarters.

