How Fast Is the Universe Expanding? Galaxies Provide One Answer

Determining how rapidly the universe is expanding is key to understanding our cosmic fate, but with more precise data has come a conundrum: Estimates based on measurements within our local universe don’t agree with extrapolations from the era shortly after the Big Bang 13.8 billion years ago.

A new estimate of the local expansion rate — the Hubble constant, or H0 (H-naught) — reinforces that discrepancy.

Surface Brightness Fluctuations Offer a Precise Alternative

Using a relatively new and potentially more precise technique for measuring cosmic distances, which employs the average stellar brightness within giant elliptical galaxies as a rung on the distance ladder, astronomers calculate a rate of 73.3 kilometers per second per megaparsec, give or take 2.5 km/sec/Mpc, that lies in the middle of three other good estimates, including the gold standard estimate from Type Ia supernovae. This means that for every megaparsec (3.3 million light years, or about 31 million trillion kilometers) from Earth, the universe is expanding an extra 73.3 ±2.5 kilometers per second. The average from the three other techniques is 73.5 ±1.4 km/sec/Mpc.
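
As a rough illustration of what a rate in km/sec/Mpc means, the sketch below applies Hubble's law (velocity = H0 × distance) to a few distances. The function names are hypothetical, and the calculation ignores the individual motions of galaxies, so it is an idealized picture rather than the paper's analysis.

```python
# Standard unit conversions, rounded.
LIGHT_YEARS_PER_MPC = 3.2616e6   # light years in one megaparsec
KM_PER_MPC = 3.0857e19           # kilometers in one megaparsec


def recession_velocity(distance_mpc, h0=73.3):
    """Hubble's law: velocity (km/s) = H0 (km/s/Mpc) * distance (Mpc)."""
    return h0 * distance_mpc


def velocity_range(distance_mpc, h0=73.3, h0_err=2.5):
    """Velocity range (km/s) implied by the quoted uncertainty on H0."""
    return (h0 - h0_err) * distance_mpc, (h0 + h0_err) * distance_mpc


if __name__ == "__main__":
    for d in (1, 15, 99):  # distances in Mpc
        low, high = velocity_range(d)
        print(f"{d:>3} Mpc: {recession_velocity(d):7.1f} km/s "
              f"(range {low:.1f} to {high:.1f})")
```

With the default values, a galaxy 100 Mpc away recedes at roughly 7,330 km/sec, and the quoted uncertainty spreads that by about 250 km/sec in either direction.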

Perplexingly, estimates of the local expansion rate based on measured fluctuations in the cosmic microwave background and, independently, fluctuations in the density of normal matter in the early universe (baryon acoustic oscillations), give a very different answer: 67.4 ±0.5 km/sec/Mpc.
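
One common way to put a number on such a mismatch is to divide the difference between two values by their combined uncertainty. The sketch below does that under the assumption that the quoted errors are independent, Gaussian, one-sigma values; the function name is hypothetical and this is a simplification of how cosmologists actually compare results.

```python
import math


def tension_sigma(value_a, err_a, value_b, err_b):
    """Difference between two measurements in units of their combined error.

    Assumes independent Gaussian uncertainties, added in quadrature.
    """
    return abs(value_a - value_b) / math.hypot(err_a, err_b)


if __name__ == "__main__":
    early = (67.4, 0.5)   # early-universe value quoted in the article
    sbf = (73.3, 2.5)     # new surface brightness fluctuation value
    others = (73.5, 1.4)  # average of the three other local techniques
    print(f"SBF vs early universe:    {tension_sigma(*sbf, *early):.1f} sigma")
    print(f"Others vs early universe: {tension_sigma(*others, *early):.1f} sigma")
```

Under these assumptions the new value alone sits a little over two sigma from the early-universe number, while the tighter average of the other local methods sits about four sigma away.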

Astronomers are understandably concerned about this mismatch, because the expansion rate is a critical parameter in understanding the physics and evolution of the universe and is key to understanding dark energy — which accelerates the rate of expansion of the universe and thus causes the Hubble constant to change more rapidly than expected with increasing distance from Earth. Dark energy comprises about two-thirds of the mass and energy in the universe, but is still a mystery.

Elliptical Galaxies and the SBF Technique

For the new estimate, astronomers measured fluctuations in the surface brightness of 63 giant elliptical galaxies to determine the distance and plotted distance against velocity for each to obtain H0. The surface brightness fluctuation (SBF) technique is independent of other techniques and has the potential to provide more precise distance estimates than other methods within about 100 Mpc of Earth, or 330 million light-years. The 63 galaxies in the sample are at distances ranging from 15 to 99 Mpc, looking back in time a mere fraction of the age of the universe.
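
Plotting distance against velocity and reading off the slope can be sketched as a least-squares fit of a straight line through the origin. The galaxies below are made up for illustration and are not data from the paper; the real analysis also has to weight each point by its uncertainty and account for galaxy motions unrelated to the expansion.

```python
def fit_hubble_constant(distances_mpc, velocities_km_s):
    """Least-squares slope of v = H0 * d for a line forced through the origin."""
    numerator = sum(d * v for d, v in zip(distances_mpc, velocities_km_s))
    denominator = sum(d * d for d in distances_mpc)
    return numerator / denominator


if __name__ == "__main__":
    # Hypothetical sample spanning the 15 to 99 Mpc range mentioned above.
    distances = [15.0, 40.0, 70.0, 99.0]
    # Hypothetical "peculiar" velocities (km/s) added on top of pure expansion.
    peculiar = [150.0, -200.0, 100.0, -50.0]
    velocities = [73.3 * d + p for d, p in zip(distances, peculiar)]
    print(f"Fitted H0: {fit_hubble_constant(distances, velocities):.1f} km/s/Mpc")
```

Because the fit weights distant galaxies more heavily, random motions of a few hundred km/sec matter less at 99 Mpc than at 15 Mpc, which is one reason a sample reaching out to about 100 Mpc is useful.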

“For measuring distances to galaxies out to 100 megaparsecs, this is a fantastic method,” said cosmologist Chung-Pei Ma, the Judy Chandler Webb Professor in the Physical Sciences at the University of California, Berkeley, and professor of astronomy and physics. “This is the first paper that assembles a large, homogeneous set of data, on 63 galaxies, for the goal of studying H-naught using the SBF method.”

Ma leads the MASSIVE survey of local galaxies, which provided data for 43 of the galaxies — two-thirds of those employed in the new analysis.

The data on these 63 galaxies was assembled and analyzed by John Blakeslee, an astronomer with the National Science Foundation’s NOIRLab. He is first author of a paper now accepted for publication in The Astrophysical Journal that he co-authored with colleague Joseph Jensen of Utah Valley University in Orem. Blakeslee, who heads the science staff that support NSF’s optical and infrared observatories, is a pioneer in using SBF to measure distances to galaxies, and Jensen was one of the first to apply the method at infrared wavelengths. The two worked closely with Ma on the analysis.

“The whole story of astronomy is, in a sense, the effort to understand the absolute scale of the universe, which then tells us about the physics,” Blakeslee said, harkening back to James Cook’s voyage to Tahiti in 1769 to measure a transit of Venus so that scientists could calculate the true size of the solar system. “The SBF method is more broadly applicable to the general population of evolved galaxies in the local universe, and certainly if we get enough galaxies with the James Webb Space Telescope, this method has the potential to give the best local measurement of the Hubble constant.”

The James Webb Space Telescope, 100 times more powerful than the Hubble Space Telescope, is scheduled for launch in October.

Giant Elliptical Galaxies

The Hubble constant has been a bone of contention for decades, ever since Edwin Hubble first measured the local expansion rate and came up with an answer seven times too big, implying that the universe was actually younger than its oldest stars. The problem, then and now, lies in pinning down the location of objects in space that give few clues about how far away they are.
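
The link between the expansion rate and the age of the universe can be illustrated with the "Hubble time," 1/H0, which is only a rough timescale: the true age also depends on how the expansion has sped up and slowed down. In the sketch below the function name is hypothetical, and the early value is simply taken as seven times 73.3 for illustration.

```python
KM_PER_MPC = 3.0857e19          # kilometers in one megaparsec
SECONDS_PER_GYR = 3.15576e16    # one billion years of 365.25 days


def hubble_time_gyr(h0):
    """Hubble time 1/H0 in billions of years, for H0 in km/s/Mpc."""
    seconds = KM_PER_MPC / h0
    return seconds / SECONDS_PER_GYR


if __name__ == "__main__":
    for label, h0 in (("local (SBF)", 73.3),
                      ("early universe", 67.4),
                      ("seven times too big", 7 * 73.3)):
        print(f"{label:>20}: H0 = {h0:6.1f} -> {hubble_time_gyr(h0):5.2f} Gyr")
```

An expansion rate seven times too large shrinks the Hubble time by the same factor, to about 2 billion years, which is how an overestimate could make the universe appear younger than its oldest stars.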

Astronomers over the years have laddered up to greater distances, starting with calculating the distance to objects close enough that they seem to move slightly, because of parallax, as the Earth orbits the sun. Variable stars called Cepheids get you farther, because their brightness is linked to their period of variability, and Type Ia supernovae get you even farther, because they are extremely powerful explosions that, at their peak, shine as bright as a whole galaxy. For both Cepheids and Type Ia supernovae, it’s possible to figure out the absolute brightness from the way they change over time, and then the distance can be calculated from their apparent brightness as seen from Earth.
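
The last step, turning absolute and apparent brightness into a distance, is usually written in astronomers' magnitude units as the distance modulus. The sketch below assumes a peak absolute magnitude of about -19.3 for a Type Ia supernova, a commonly quoted approximate figure, and a made-up apparent magnitude; it ignores dust and redshift corrections.

```python
def distance_mpc(apparent_mag, absolute_mag):
    """Distance from the distance modulus m - M = 5 * log10(d / 10 parsecs)."""
    distance_pc = 10 ** ((apparent_mag - absolute_mag + 5) / 5)
    return distance_pc / 1e6


if __name__ == "__main__":
    absolute_mag = -19.3   # assumed Type Ia peak brightness (approximate)
    apparent_mag = 15.7    # hypothetical observed peak brightness
    print(f"Distance: {distance_mpc(apparent_mag, absolute_mag):.0f} Mpc")
```

Every 5 magnitudes of extra faintness corresponds to a factor of 10 in distance, so small errors in the assumed absolute brightness translate directly into errors in distance and therefore in H0.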

The best current estimate of H0 comes from distances determined by Type Ia supernova explosions in distant galaxies, though newer methods — time delays caused by gravitational lensing of distant quasars and the brightness of water masers orbiting black holes — all give around the same number.

The technique using surface brightness fluctuations is one of the newest and relies on the fact that giant elliptical galaxies are old and have a consistent population of old stars — mostly red giant stars — that can be modeled to give an average infrared brightness across their surface. The researchers obtained high-resolution infrared images of each galaxy with the Wide Field Camera 3 on the Hubble Space Telescope and determined how much each pixel in the image differed from the “average” — the smoother the fluctuations over the entire image, the farther the galaxy, once corrections are made for blemishes like bright star-forming regions, which the authors exclude from the analysis.
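
Why a more distant galaxy looks smoother can be seen in a toy model: each pixel covers more stars as distance grows, while each star appears fainter, so the average brightness per pixel stays the same but the pixel-to-pixel scatter shrinks. The sketch below assumes identical stars, Poisson counting statistics and arbitrary units; the names and numbers are hypothetical, and the real analysis is considerably more involved.

```python
import math


def pixel_statistics(distance_mpc, stars_per_pixel_at_1mpc=10.0, star_lum=1.0):
    """Mean and variance of the flux in one pixel for a toy galaxy.

    The number of stars per pixel grows as distance squared, while the
    flux received from each star falls as one over distance squared.
    """
    n_stars = stars_per_pixel_at_1mpc * distance_mpc ** 2
    flux_per_star = star_lum / (4 * math.pi * distance_mpc ** 2)
    mean = n_stars * flux_per_star
    variance = n_stars * flux_per_star ** 2   # Poisson scatter in star counts
    return mean, variance


def relative_fluctuation(distance_mpc):
    """Pixel-to-pixel scatter as a fraction of the mean brightness."""
    mean, variance = pixel_statistics(distance_mpc)
    return math.sqrt(variance) / mean


if __name__ == "__main__":
    for d in (20, 40, 80):  # distances in Mpc
        print(f"{d:>3} Mpc: relative fluctuation {relative_fluctuation(d):.4f}")
```

In this model, doubling the distance halves the relative fluctuation, so measuring how mottled the image is, and comparing it with the brightness expected from the stellar population, yields a distance.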

Neither Blakeslee nor Ma was surprised that the expansion rate came out close to that of the other local measurements. But they are equally confounded by the glaring conflict with estimates from the early universe — a conflict that many astronomers say means that our current cosmological theories are wrong, or at least incomplete.

The extrapolations from the early universe are based on the simplest cosmological theory — called lambda cold dark matter, or ΛCDM — which employs just a few parameters to describe the evolution of the universe. Does the new estimate drive a stake into the heart of ΛCDM?

“I think it pushes that stake in a bit more,” Blakeslee said. “But it (ΛCDM) is still alive. Some people think, regarding all these local measurements, (that) the observers are wrong. But it is getting harder and harder to make that claim — it would require there to be systematic errors in the same direction for several different methods: supernovae, SBF, gravitational lensing, water masers. So, as we get more independent measurements, that stake goes a little deeper.”

Ma wonders whether the uncertainties astronomers ascribe to their measurements, which reflect both systematic errors and statistical errors, are too optimistic, and whether the two ranges of estimates can perhaps still be reconciled.

“The jury is out,” she said. “I think it really is in the error bars. But assuming everyone’s error bars are not underestimated, the tension is getting uncomfortable.”

In fact, one of the giants of the field, astronomer Wendy Freedman, recently published a study pegging the Hubble constant at 69.8 ±1.9 km/sec/Mpc, roiling the waters even further. The latest result from Adam Riess, an astronomer who shared the 2011 Nobel Prize in Physics for discovering dark energy, reports 73.2 ±1.3 km/sec/Mpc. Riess was a Miller Postdoctoral Fellow at UC Berkeley when he performed this research, and he shared the prize with UC Berkeley and Berkeley Lab physicist Saul Perlmutter.

MASSIVE Galaxies

The new value of H0 is a byproduct of two other surveys of nearby galaxies, in particular Ma’s MASSIVE survey, which uses space and ground-based telescopes to exhaustively study the 100 most massive galaxies within about 100 Mpc of Earth. A major goal is to weigh the supermassive black hole at the center of each one.

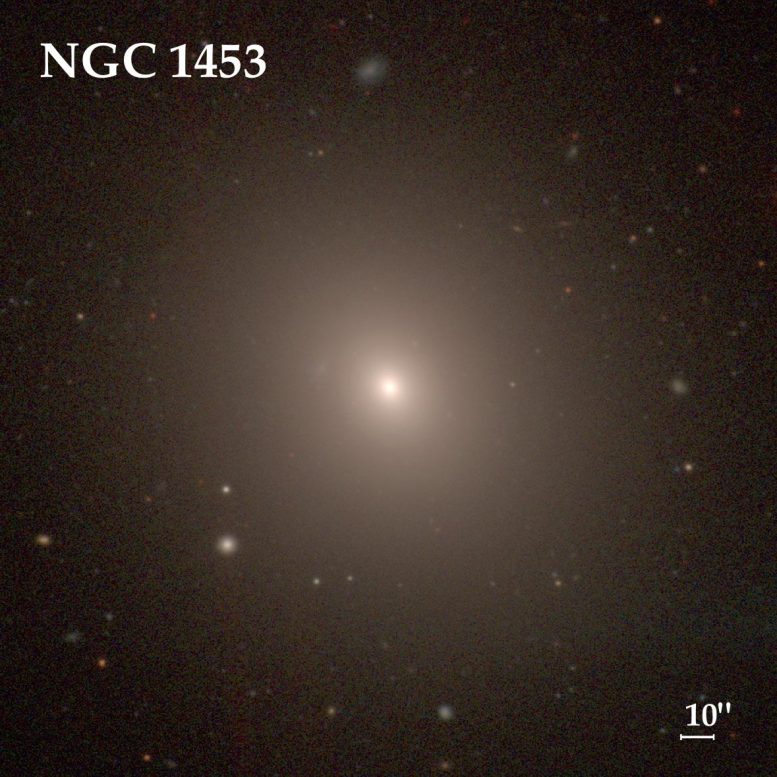

To do that, precise distances are needed, and the SBF method is the best to date, she said. The MASSIVE survey team used this method last year to determine the distance to a giant elliptical galaxy, NGC 1453, in the southern sky constellation of Eridanus. Combining that distance, 166 million light years, with extensive spectroscopic data from the Gemini and McDonald telescopes — which allowed Ma’s graduate students Chris Liepold and Matthew Quenneville to measure the velocities of the stars near the center of the galaxy — they concluded that NGC 1453 has a central black hole with a mass nearly 3 billion times that of the sun.

To determine H0, Blakeslee calculated SBF distances to 43 of the galaxies in the MASSIVE survey, based on 45 to 90 minutes of HST observing time for each galaxy. The other 20 came from another survey that employed HST to image large galaxies, specifically ones in which Type Ia supernovae have been detected.

Most of the 63 galaxies are between 8 and 12 billion years old, which means that they contain a large population of old red stars, which are key to the SBF method and can also be used to improve the precision of distance calculations. In the paper, Blakeslee employed both Cepheid variable stars and a technique that uses the brightest red giant stars in a galaxy — referred to as the tip of the red giant branch, or TRGB technique — to ladder up to galaxies at large distances. They produced consistent results. The TRGB technique takes account of the fact that the brightest red giants in galaxies have about the same absolute brightness.

“The goal is to make this SBF method completely independent of the Cepheid-calibrated Type Ia supernova method by using the James Webb Space Telescope to get a red giant branch calibration for SBFs,” he said.

“The James Webb telescope has the potential to really decrease the error bars for SBF,” Ma added. But for now, the two discordant measures of the Hubble constant will have to learn to live with one another.

“I was not setting out to measure H0; it was a great product of our survey,” she said. “But I am a cosmologist and am watching this with great interest.”

Co-authors of the paper with Blakeslee, Ma and Jensen are Jenny Greene of Princeton University, who is a leader of the MASSIVE team, and Peter Milne of the University of Arizona in Tucson, who leads the team studying Type Ia supernovae. The work was supported by the National Aeronautics and Space Administration (HST-GO-14219, HST-GO-14654, HST-GO-15265) and the National Science Foundation (AST-1815417, AST-1817100).

18 Comments

The figure of 73.3 +/- 2.5 km/sec/Mpc can be granted as the standard Hubble constant, beside all other values having their own origin with justification. Mergers of neutron stars viewed by gravitational waves, simultaneously calculated with the cosmic microwave background temperature, can verify the fact, even supported by some form of dark energy particle accumulating to add a fraction of mass to the total, thus giving a more up-to-date value of H0.

There is no reason to prefer any of the cluster of the higher estimates – often ladder dependent and local – ovsre the lower estimates – often ladder indipendent and integrated. In fact, it is the lower ones that are more statistically robust (see my comment and its reference) and also do not suggest extraordinary new physics based on an absence (as of yet) of extraordinary data.

In fact, any or all of the measured estimates can be wrong.

It is a fact that gravitational wave observations can give a (local and) independent estimate [“A wave to the Hubble constant”, Nature ASTRONOMY AND ASTROPHYSICS, 2017].

“Combining these gives a result of 70 kilometres per second per megaparsec, which is consistent with previous estimates and confirms a prediction from the 1980s that gravitational waves could be used to determine the Hubble constant. Although at present the error margin is large, the result could be improved as more events are observed, the authors say.”

It is also a fact that all of the cosmic background based estimates can be biased by erroneous temperature estimates.

“In conclusion, the authors showed that the Hubble tension can also be expressed as tension in CMB temperature T_0. It could, therefore, be solved when a higher T_0 is assumed as prior for estimating H_0 from Planck data. However, this explanation seems unlikely, as BAO measurements support the lower T_0 value. Nevertheless, the analysis showed that the solution to the Hubble tension might not be a change in the cosmological standard model, but rather a careful examination of the assumptions and priors that influence the measurement of H_0.”

[“Is the Hubble Tension actually a Temperature Tension?”, astrobites]

But it is also a fact that adding in primordial magnetic fields fits both the LCDM cosmology and the current field observations.

“Their calculations indicated that, indeed, the amount of primordial magnetism needed to address the Hubble tension also agrees with the blazar observations and the estimated size of initial fields needed to grow the enormous magnetic fields spanning galaxy clusters and filaments. “So it all sort of comes together,” Pogosian said, “if this turns out to be right.””

[ https://www.quantamagazine.org/the-hidden-magnetic-universe-begins-to-come-into-view-20200702/ ]

Oy. “ovsre the lower estimates – often ladder indipendent” – over the lower estimates – often ladder independent.

Slip of the fingers.

The general rule for expressing the best estimate of a measurement is to retain no more significant figures than the position of the one-sigma uncertainty, which follows from the procedure for adding and subtracting numbers with different precision. That is, the new value should be expressed as 73 +/- 3 km/sec/Mpc.

That compares to the currently accepted Hubble Constant of 73 +/- 1 km/sec/Mpc, when expressed properly.

The research hasn’t really changed the estimate. It has only increased the uncertainty.

See Example 2 at:

https://opentextbc.ca/chemistry/chapter/measurement-uncertainty-accuracy-and-precision/

Incidentally, it should be stated explicitly whether the +/- uncertainty represents a 1-sigma or 2-sigma standard deviation. While 1-sigma is probably most commonly used, some researchers prefer using 2-sigma for high precision measurements. To avoid ambiguity, the choice should be stated along with the value and the ‘uncertainty.’

There isn’t any currently accepted Hubble Constant due to the explicit estimated uncertainties giving a range in tension [ https://sci.esa.int/web/planck/-/60504-measurements-of-the-hubble-constant ]*. Hence the title “discrepancy”.

The customs differ between areas, but it seems to be astronomical and hence cosmological standard to give 1 sigma ranges.

*It is interesting to see how the initial range, and hence the age range of the universe, differed by a factor of 2 before precision cosmology arrived. With dark energy observations (and later cosmic background as well) the precision rapidly decreased to 1% uncertainty except in this one measure.

But as per my response to Bibhutibhusanpatel, besides bias problems there are other non-exotic explanations that could remove the tension. We’ll see.

It is also interesting, I think, to compare with historical analogies like the measurement of the light speed in vacuum, where the current value lies outside the initial estimate range. Plus ça change, plus c’est la même chose.

Oy: “the precision rapidly decreased”.

Speaking of lacking (language) precision. 😀

Torbjorn, you said, “The customs differ between areas, but it seems to be astronomical and hence cosmological standard to give 1 sigma ranges.” That is fine, but it is mostly astronomers who would be familiar with what the custom is within the astronomical community. In the spirit of furthering communication, uncertainties should be defined explicitly (i.e. 1 or 2 sigma), and acronyms should be explained at least the first time they are used, especially for acronyms that are unique to the discipline.

Of course it should be stated, I was responding to the specific query here.

“… give a very different answer: 67.4 ±0.5 km/sec/Mpc.”

Note that while there are reasons to question the accuracy, at least the implied precision is expressed properly.

Personally, when a researcher is careless or sloppy in writing up their research, and doesn’t pay attention to commonly accepted standards, I have to wonder if that behavior carries over to their experiments.

And here I fail to see your point, since the tension would be relative to the number of sigma used, as would be the quality standard of the field (2 sigma? 3 sigma? 5 sigma? 7 sigma? 9 sigma?), and there is a standard of the field.

If the same standard is applied to comments, the sloppy, unreferenced claim of a “standard Hubble Constant” would imply carried-over behavior.

Paper not in print yet. Preprint labeled 2101.02221 is on arxiv.

Like the supernova data this is ladder dependent, data sparse and contains biased populations.

“But he thinks cosmologists will run into trouble as they put their theories to more rigorous tests that require more precise standard candles. “Supernovae could be less useful for precision cosmology,” he says.”

[ https://www.sciencemag.org/news/2020/06/galaxy-s-brightest-explosions-go-nuclear-unexpected-trigger-pairs-dead-stars ]

The figure of 73.3 +/- 2.5 km/sec/Mpc can be granted as the standard Hubble constant, beside all other values having their own origin with justification. Mergers of neutron stars viewed by gravitational waves, simultaneously calculated with the cosmic microwave background temperature, can verify the fact, even supported by some form of dark energy particle accumulating to add a fraction of mass to the total, thus giving a more up-to-date value of H0. W. Freedman’s suggested value of the Hubble constant, 68.9 +/- 1.9 km/sec/Mpc, roiling the waters even further, is a value that is able to explain added mass due to some dark energy created when two black holes with a summed mass of 151 solar masses merge together. This value is in agreement with the recently measured value for the universal gravitational constant G, for which an increase of 9% is to be recorded. Again, present-day discovery at the LHC is focused along the same line after the addition of four new elementary particles. Grossly, all these galaxies resemble our Milky Way in texture, with small deviation and most possibly a common origin.

The first cited value can be granted as in the current range, but there is no standard constant as of yet (and the higher values, in fact, imply problems with current cosmology). I have no idea where the other claimed “accepted Hubble Constant” comes from, for instance. The latter Freedman tip of the red giant branch method value is in the middle of the span [“MEASUREMENTS OF THE HUBBLE CONSTANT”, PLANCK, ESA] and is developed further now [“Astrophysical Distance Scale The JAGB Method: I. Calibration and a First Application”, arxiv 2005.10792].

It is a fact that multimessenger gravitational mergers add another, intrinsically high-quality way to extract the expansion rate. But the current analyses say that, with or without the new generation of gravitational observatories that are planned, it will be years or decades before we have a low uncertainty estimate from that method [ https://science.sciencemag.org/content/371/6534/1089 ].

I believe you mean dark matter particle when you describe it as “adding mass”. (“Dark energy” seems to be vacuum energy, but Cold Dark Matter is particulate.)

Stars, and so black holes, contain relatively little dark matter. The amount of dark matter within our solar system can, from its average Milky Way ratio of 6:1 to normal matter, which is not far from the universe’s 5:1 ratio, be estimated as an average asteroid mass worth. Most of that may be concentrated in the Sun, but would still be an insignificant fraction.

Unless dark matter interacts with other matter, little to no dark matter would be created in the merger, I think.

This is of course all a waste of time until these red shift jockeys understand that the stars they measure against, which are all in different areas of the sky, exist in different plasma filaments which are themselves moving in various different directions and speeds, which is why no set of observations matches up with any other. Toss in that red shift is not as dependable as expected and every different set of astronomers starts fist fights amongst themselves.

The “red shift” jockey here seems to be you.

Several of the used measurement methods, for instance the cosmic background spectra, are independent of the red shift reliant cosmic distance ladder. Yet they almost agree – much better than when the field started out with a factor 2 discrepancy.