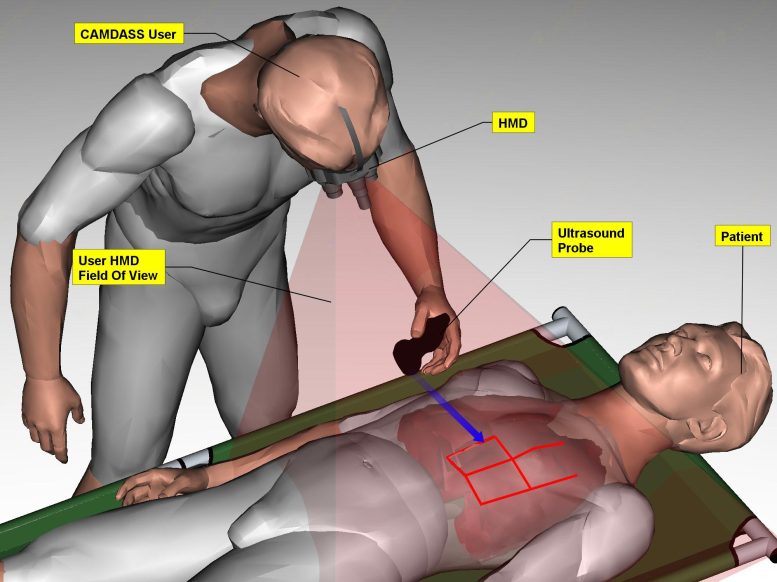

Augmented reality merges actual and virtual reality by precisely combining computer-generated graphics with the wearer’s view. CAMDASS is focused for now on ultrasound examinations but in principle could guide other procedures. Credit: ESA/Space Applications Services NV

Examining astronauts who need medical help in space could soon get a lot easier. Researchers at the European Space Agency have developed a head-mounted display that provides 3D guidance for diagnosing problems and performing surgery. The prototype, called CAMDASS, pairs a stereo head-mounted display with an ultrasound tool tracked by an infrared camera.

A new augmented reality unit developed by ESA can provide just-in-time medical expertise to astronauts. All they need to do is put on a head-mounted display for 3D guidance in diagnosing problems or even performing surgery.

The Computer Assisted Medical Diagnosis and Surgery System, CAMDASS, is a wearable augmented reality prototype.

Augmented reality merges actual and virtual reality by precisely combining computer-generated graphics with the wearer’s view.

CAMDASS is focused for now on ultrasound examinations but in principle could guide other procedures.

Ultrasound is leading the way because it is a versatile and effective medical diagnostic tool, and is already available on the International Space Station.

Future astronauts venturing further into space must be able to look after themselves. Depending on their distance from Earth, discussions with experts on the ground will involve many minutes of delay or even be blocked entirely.
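To give a sense of scale for that delay, the short sketch below estimates the one-way signal travel time at the speed of light. The distances are rounded, commonly cited approximations chosen for illustration (they do not come from ESA's announcement), and the function name is hypothetical.

```python
# Rough one-way signal delay for a radio link, ignoring relay and processing time.
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum, km/s

def one_way_delay_minutes(distance_km):
    """Return the light travel time over distance_km, in minutes."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60

# Approximate Earth-to-target distances in km (illustrative assumptions).
distances_km = {
    "Moon (average)": 384_400,
    "Mars (closest approach)": 54_600_000,
    "Mars (farthest)": 401_000_000,
}

delays = {name: one_way_delay_minutes(d) for name, d in distances_km.items()}
for name, minutes in delays.items():
    print(f"{name}: {minutes:.2f} min one way")
```

Under these assumptions, a question sent from Mars and its answer from Earth would take roughly 6 to 45 minutes for the round trip, before anyone has had time to think about the reply.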

“Although medical expertise will be available among the crew to some extent, astronauts cannot be trained and expected to maintain skills on all the medical procedures that might be needed,” said Arnaud Runge, a biomedical engineer overseeing the project for ESA.

CAMDASS uses a stereo head-mounted display and an ultrasound tool tracked via an infrared camera. The patient is tracked using markers placed at the site of interest.

An ultrasound device is linked with CAMDASS, and the system allows the patient’s body to be ‘registered’ to the camera and the display to be calibrated to each wearer’s vision.

3D augmented reality cue cards are then displayed in the headset to guide the wearer. These are provided by matching points on a ‘virtual human’ and the registered patient.

This guides the wearer to position and move the ultrasound probe.
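The announcement does not say how CAMDASS computes the match between the ‘virtual human’ and the patient. Purely as an illustration, the sketch below shows one simple way such a registration could work: it builds an orthonormal frame from three non-collinear landmarks in each coordinate system, then derives the rotation and translation that carry model coordinates into patient coordinates. The landmark values, the function names, and the three-point method itself are assumptions made for this example, not details of the CAMDASS software.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def unit(v):
    norm = math.sqrt(sum(x * x for x in v))
    return tuple(x / norm for x in v)

def frame(p0, p1, p2):
    """Orthonormal axes built from three non-collinear landmarks."""
    e1 = unit(sub(p1, p0))
    e3 = unit(cross(e1, sub(p2, p0)))  # normal to the landmark plane
    e2 = cross(e3, e1)                 # completes a right-handed basis
    return (e1, e2, e3)

def rotate(R, p):
    return tuple(sum(R[i][j] * p[j] for j in range(3)) for i in range(3))

def register(model_pts, patient_pts):
    """Return (R, t) such that patient = R * model + t for the landmarks."""
    fm = frame(*model_pts)
    fp = frame(*patient_pts)
    # R maps each model axis onto the corresponding patient axis.
    R = tuple(tuple(sum(fp[k][i] * fm[k][j] for k in range(3))
                    for j in range(3)) for i in range(3))
    t = sub(patient_pts[0], rotate(R, model_pts[0]))
    return R, t

def to_patient(R, t, p):
    """Map a point from model coordinates into patient coordinates."""
    return add(rotate(R, p), t)

# Hypothetical landmarks on the virtual human (model coordinates)...
model_landmarks = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
# ...and the same landmarks as seen by the tracking camera.
patient_markers = [(10.0, 20.0, 5.0), (10.0, 21.0, 5.0), (9.0, 20.0, 5.0)]

R, t = register(model_landmarks, patient_markers)
# Where a probe position defined on the model should appear on the patient.
probe_target = to_patient(R, t, (0.2, 0.3, 0.1))
print(probe_target)
```

Three exact points keep the example short. A practical system would more likely use additional markers and a least-squares fit so that measurement noise in any single marker does not skew the overlay.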

Reference ultrasound images give users an indication of what they should be seeing, and speech recognition allows hands-free control.

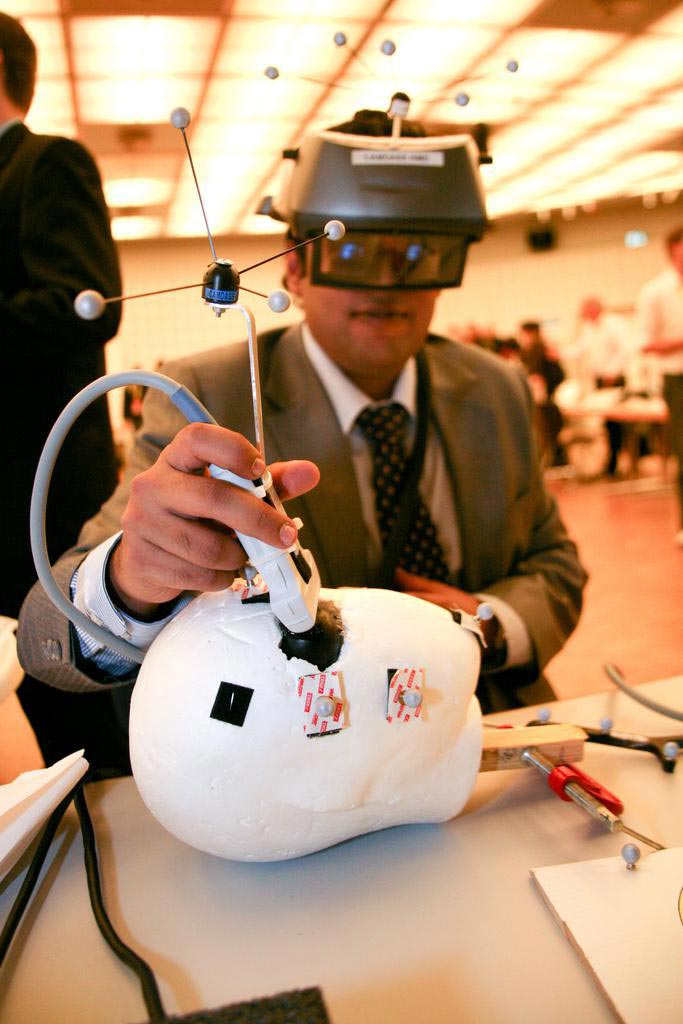

The prototype has been tested for usability at Saint-Pierre University Hospital in Brussels, Belgium, with medical and nursing students, Belgian Red Cross, and paramedic staff.

Untrained users found they could perform a reasonably difficult procedure without other help, with effective probe positioning.

“Based on that experience, we are looking at refining the system – for instance, reducing the weight of the head-mounted display as well as the overall bulkiness of the prototype,” explained Runge.

“Once it reaches maturity, the system might also be used as part of a telemedicine system to provide remote medical assistance via satellite.

“It could be deployed as a self-sufficient tool for emergency responders as well.

“It would be interesting to perform more testing in remote locations, in the developing world, and potentially in the Concordia Antarctic base. Eventually, it could be used in space.”

Funded by ESA’s Basic Technology Research Programme, the prototype was developed for the Agency by a consortium led by Space Applications Services NV in Belgium with support from the Technical University of Munich and the DKFZ German Cancer Research Center.
