With thousands of satellites, each network could beam down tens of terabits per second, filling gaps left by land-based services.

In recent months, people have reported seeing a parade of star-like points passing across the night sky. The formation is not extraterrestrial, or even astrophysical, in origin. It is a line of satellites, recently launched by SpaceX, that will eventually be joined by many more to form Starlink, a “megaconstellation” that will wrap around the Earth as a global network designed to beam high-speed internet to users anywhere in the world.

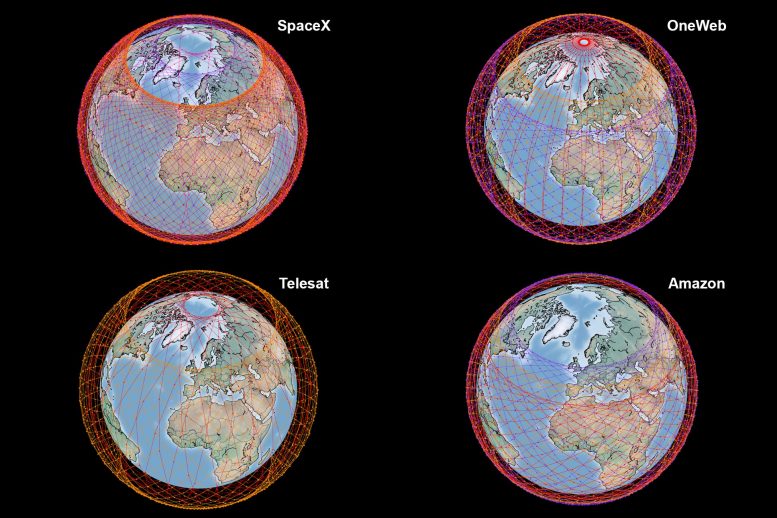

Starlink is among a handful of global satellite networks currently in development (though not without controversy, due to effects on our view of the night sky). Each is designed to deploy thousands of satellites at various altitudes and inclination angles to the Earth, to connect remote and rural users to the internet.

Now researchers in MIT’s Department of Aeronautics and Astronautics have run a comparison of the four largest global satellite network proposals, from SpaceX, Telesat, OneWeb, and Amazon. The researchers calculated each network’s throughput, or global data capacity, based on its technical specifications as reported to the Federal Communications Commission.

While the networks vary in their proposed number and configuration of satellites, ground stations, and communication capabilities, the team found that each constellation could provide a total capacity on the order of tens of terabits per second.

As proposed, these megaconstellations would likely not replace current land-based networks, which can support thousands of terabits per second. However, the team concludes that the space-based fleets could fill in the gaps where conventional cable connections have been unfeasible or inaccessible, such as in rural areas, remote polar and coastal regions, and even in the air and overseas.

“We won’t be in a situation where densely populated regions like New York City or Los Angeles will be served entirely by satellite capability,” says Inigo Del Portillo, a former graduate student in MIT’s System Architecture Group. “But these constellations can bring a lot of throughput to areas where right now there is no service whatsoever, no fibers. It can be really life-changing for those areas.”

Del Portillo and his colleagues will present a paper detailing their results next week at the IEEE International Conference on Communications. The paper’s co-authors at MIT include graduate student and lead author Nils Pachler, along with Edward Crawley, the Ford Foundation Professor of Engineering, and Bruce Cameron, Director of the System Architecture Group.

A Race, Renewed

The vast majority of the world’s high-speed internet access comes from land-based networks — cable, DSL, fiber optics, and wireless towers — with a minority delivered through regional satellite networks. Since the 1990s, there have been various efforts to launch satellite constellations into low-Earth orbit to provide global broadband service. These efforts, however, were quickly eclipsed by a rapidly expanding land-based infrastructure.

“There was a huge bubble burst 20 years ago, and now we’re asking the question whether the massive growth in data needs can support one, or perhaps even several competitors providing global internet,” Cameron says.

In recent years, satellite hardware and software technology has advanced, and demand for broadband has grown, such that the idea of global internet coverage from space has resurfaced in a big way. SpaceX and OneWeb are deploying the first strings of satellites as part of separately proposed networks, while Telesat and Amazon are moving forward with constellations of their own.

Such meganetwork proposals have drawn criticism from the astronomy community, as the thousands of satellites launched into space would potentially obscure astronomers’ observations of astrophysical sources. For his part, Del Portillo wondered whether the new proposals would be a viable, reliable service for regions of the world where internet has been either inaccessible or unaffordable.

“I was interested in how to connect underserved populations across the world, focusing on emerging countries, and satellite constellations was one technology I was looking at, along with balloons, drones, and millimeter-wave cellphone towers,” Del Portillo says. “When I was doing my research, this whole megaconstellation idea exploded, and I was interested in knowing what were the real capabilities of these systems.”

Satellite Snapshots

In 2018, as part of his PhD work, Del Portillo calculated the throughput of the three largest constellations proposed at the time, by SpaceX, OneWeb, and Telesat. Since then, all three companies have modified their initial proposals, and Amazon announced its own megaconstellation. In the new study, he aimed to update the throughput estimates for all four networks.

The team estimated each network’s total throughput based on the most recent petitions filed by each company to the FCC. Petitions include technical specifications such as the total number of satellites, the planes and inclination angles at which they will orbit, and the communication capabilities between satellites. Using these data, the team created simulations of each network’s satellite configuration and ran the simulations over a single day, taking “snapshots” every minute of each satellite’s position in the sky. They also recorded its cone of coverage, or the volume of space over which a satellite could communicate in that moment.
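The snapshot procedure and the “cone of coverage” can be illustrated with a small geometric sketch. This is not the team’s simulator. It assumes a spherical Earth and a minimum elevation angle below which a ground user cannot see the satellite, and the function names, the 550 km altitude, and the 25-degree elevation mask are illustrative assumptions that do not come from the article.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius, spherical-Earth assumption


def snapshot_times(duration_s=86_400, step_s=60):
    """Times (in seconds) at which the constellation is frozen:
    one snapshot per minute over a single day by default."""
    return list(range(0, duration_s, step_s))


def coverage_half_angle_rad(altitude_km, min_elevation_deg):
    """Earth-central half-angle of the region that sees the satellite
    above the given minimum elevation angle."""
    eps = math.radians(min_elevation_deg)
    ratio = EARTH_RADIUS_KM / (EARTH_RADIUS_KM + altitude_km)
    return math.acos(ratio * math.cos(eps)) - eps


def footprint_area_km2(altitude_km, min_elevation_deg):
    """Area of the spherical cap on the ground covered by one satellite."""
    lam = coverage_half_angle_rad(altitude_km, min_elevation_deg)
    return 2.0 * math.pi * EARTH_RADIUS_KM**2 * (1.0 - math.cos(lam))


if __name__ == "__main__":
    # Illustrative values only (hypothetical altitude and elevation mask).
    print(len(snapshot_times()), "snapshots per day")
    print(round(footprint_area_km2(550.0, 25.0)), "km^2 footprint")
```

Under these assumptions, a higher orbit or a lower elevation mask widens the footprint, which is one reason the proposals differ so much in the number of satellites they need.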

The researchers used an atmospheric model to vary the surrounding conditions in the moment, as well as a demand model that estimated the number of users within the satellite’s coverage area, based on a grid map of world population. They also used an algorithm to compute the number of gateways, or ground stations, that the satellite would need to relay to in order to reach the greatest number of users. Finally, they used a link budget model to compute the satellite’s throughput.
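For readers unfamiliar with the term, a link budget adds up the gains and losses between transmitter and receiver, in decibels, to estimate the achievable data rate. The article does not describe the team’s model, so the following is only a textbook-style sketch. It uses the standard free-space path loss formula and the Shannon capacity as an upper bound in place of real modulation and coding tables, and all parameter names are hypothetical.

```python
import math

BOLTZMANN_DBW_PER_K_HZ = -228.6  # 10*log10 of Boltzmann's constant


def free_space_path_loss_db(distance_km, freq_ghz):
    """Standard free-space path loss for distance in km and frequency in GHz."""
    return 92.45 + 20.0 * math.log10(distance_km) + 20.0 * math.log10(freq_ghz)


def link_data_rate_bps(eirp_dbw, g_over_t_db_k, distance_km, freq_ghz,
                       bandwidth_hz, atmos_loss_db=0.0):
    """Upper bound on the data rate of one link (Shannon capacity).

    eirp_dbw      -- transmitter effective isotropic radiated power
    g_over_t_db_k -- receiver figure of merit G/T
    atmos_loss_db -- extra attenuation from rain, clouds, and gases
    """
    # Carrier-to-noise-density ratio in dB-Hz.
    c_n0_db = (eirp_dbw + g_over_t_db_k
               - free_space_path_loss_db(distance_km, freq_ghz)
               - atmos_loss_db
               - BOLTZMANN_DBW_PER_K_HZ)
    snr_db = c_n0_db - 10.0 * math.log10(bandwidth_hz)
    snr = 10.0 ** (snr_db / 10.0)
    return bandwidth_hz * math.log2(1.0 + snr)
```

In a sketch like this, the atmospheric term is the only input that changes with the weather, which is why it is the quantity that gets varied between runs.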

“For each of these frozen snapshots, we run a link budget 10,000 times, each time using a different atmospheric condition, like rainy versus cloudy, and we see how the throughput, or data rate, changes,” Pachler explains. “In the end we put this together, see what the minimum throughput is, which is the bottleneck, then over all these different samples we take during the day, we get an average throughput for the entire network.”
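One possible reading of the procedure Pachler describes is sketched below. It is an interpretation, not the paper’s algorithm. It assumes that each run draws one atmospheric loss, that the throughput of a run is limited by the slowest link segment (the bottleneck), and that the results are averaged first within a snapshot and then across the day. The exponential loss distribution and the `rates_for_loss` callable are hypothetical stand-ins for the team’s atmospheric and link budget models.

```python
import random
from statistics import mean


def snapshot_throughput(rates_for_loss, n_draws=10_000, rng=None):
    """Average bottleneck throughput of one frozen snapshot.

    rates_for_loss -- hypothetical callable mapping an atmospheric loss
                      in dB to the data rates of the link segments
    """
    if rng is None:
        rng = random.Random(0)
    samples = []
    for _ in range(n_draws):
        # Assumed loss distribution with a mean of 1 dB, for illustration only.
        loss_db = rng.expovariate(1.0)
        # The slowest segment limits what the whole path can carry.
        samples.append(min(rates_for_loss(loss_db)))
    return mean(samples)


def daily_average_throughput(snapshot_models, n_draws=10_000, seed=0):
    """Average the per-snapshot throughput over all snapshots of the day."""
    rng = random.Random(seed)
    return mean(snapshot_throughput(m, n_draws, rng) for m in snapshot_models)
```

In practice `snapshot_models` would hold one entry per minute of the simulated day, each wrapping a link budget for the satellite positions in that snapshot.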

Overall, they found that all four networks had comparable throughputs of tens of terabits per second, though each network achieves this through a different configuration. For instance, Telesat has fewer satellites in its network (around 1,600), but each is more capable than the satellites in OneWeb’s network, which plans to compensate with many more satellites (more than 6,000).

SpaceX’s Starlink constellation is the closest to becoming operational, having launched more than 1,000 of its planned 4,400 satellites. In its most recent FCC filing, the company reduced the altitude of the satellites’ orbits, which the team found increased its overall throughput.

The team found that Amazon’s satellite configuration would provide the highest data rates of the four networks, if it were to also build out a disproportionately large number of gateway antennas, which the team estimates to be about 4,000 around the world. “On paper, Amazon has a higher throughput. But these companies are filing new iterations to outdo themselves and get more capable systems. So these are exciting times,” Del Portillo says. “Everyone is talking about these constellations in the space industry. Some people think they will change the world, others think they’ll fail. But there’s a lot of innovation going on.”

Reference: “An Updated Comparison of Four Low Earth Orbit Satellite Constellation Systems to Provide Global Broadband” by Nils Pachler, Inigo del Portillo, Edward F. Crawley and Bruce G. Cameron.

This research was funded, in part, by satellite and telecommunications company SES S.A.

2 Comments

Someone needs to create a metric that is comparable, like mpg. It’s the same crap with mobile phones: I don’t care about the number of lines, I want the lines per inch.

For satellites we should do the calculation based on average connections maintainable and average up and down speed per device in a square mile that they service. That way if providers are dense in the middle latitudes and sparse in the poles they can provide regional numbers.

Would agree wholeheartedly with Mr. Ryan’s comment and include the observation that there is zero practical information about the throughput rates of any of these systems (except perhaps StarLink) based on the angle of orbit of the satellite “constellation” to the dish(es) on the ground.

Those of us who live in the mountains with lots of trees can expect a current average (ViaSAT provider) up/down speed of nine (9) Mbps, assuming there is no wind, rain, or extremes of heat or cold.