Despite AI’s impressive track record, its computational power pales in comparison with a human brain. Now, scientists unveil a revolutionary path to drive computing forward: organoid intelligence, where lab-grown brain organoids act as biological hardware.

Artificial intelligence (AI) has long been inspired by the human brain. This approach proved highly successful: AI boasts impressive achievements – from diagnosing medical conditions to composing poetry. Still, the original model continues to outperform machines in many ways. This is why, for example, we can ‘prove our humanity’ with trivial image tests online. What if instead of trying to make AI more brain-like, we went straight to the source?

Scientists across multiple disciplines are working to create revolutionary biocomputers where three-dimensional cultures of brain cells, called brain organoids, serve as biological hardware. They describe their roadmap for realizing this vision in the journal Frontiers in Science.

“We call this new interdisciplinary field ‘organoid intelligence’ (OI),” said Prof Thomas Hartung of Johns Hopkins University. “A community of top scientists has gathered to develop this technology, which we believe will launch a new era of fast, powerful, and efficient biocomputing.”

What Are Brain Organoids, and Why Would They Make Good Computers?

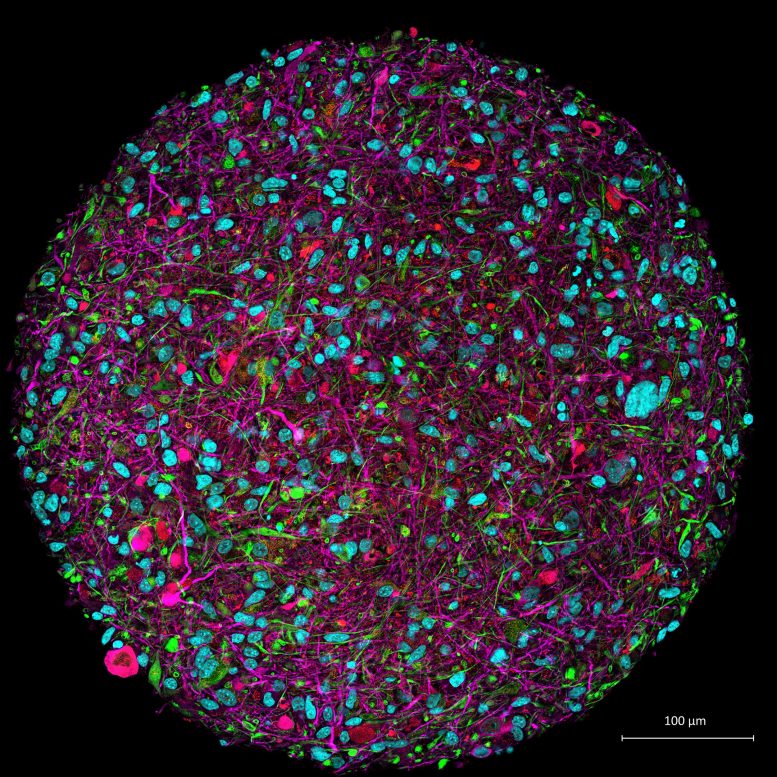

Brain organoids are a type of lab-grown cell culture. Even though brain organoids aren’t ‘mini brains’, they share key aspects of brain function and structure, such as neurons and other brain cells that are essential for cognitive functions like learning and memory. Also, whereas most cell cultures are flat, organoids have a three-dimensional structure. This increases the culture’s cell density 1,000-fold, meaning that neurons can form many more connections.

But even if brain organoids are a good imitation of brains, why would they make good computers? After all, aren’t computers smarter and faster than brains?

“While silicon-based computers are certainly better with numbers, brains are better at learning,” Hartung explained. “For example, AlphaGo [the AI that beat the world’s number one Go player in 2017] was trained on data from 160,000 games. A person would have to play five hours a day for more than 175 years to experience this many games.”
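As a rough check on the figures in Hartung’s quote, the short Python sketch below converts five hours a day over 175 years into total playing hours and divides by 160,000 games. The 365-day year with no days off is an assumption made here for illustration, and the function name is hypothetical; none of this comes from the paper itself.

```python
# Back-of-the-envelope check of the figures quoted above.
GAMES = 160_000        # games in AlphaGo's training data (from the quote)
HOURS_PER_DAY = 5      # daily playing time (from the quote)
YEARS = 175            # duration (from the quote)
DAYS_PER_YEAR = 365    # assumption: no days off, leap days ignored


def total_playing_hours(hours_per_day=HOURS_PER_DAY, years=YEARS):
    """Total hours spent playing over the whole period."""
    return hours_per_day * DAYS_PER_YEAR * years


def hours_per_game(games=GAMES):
    """Average time available per game under the assumptions above."""
    return total_playing_hours() / games


print(total_playing_hours())        # 319375
print(round(hours_per_game(), 2))   # 2.0
```

Under these assumptions, the comparison works out to roughly two hours per game.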

Brains are not only superior learners, they are also more energy efficient. For instance, the amount of energy spent training AlphaGo is more than is needed to sustain an active adult for a decade.

“Brains also have an amazing capacity to store information, estimated at 2,500TB,” Hartung added. “We’re reaching the physical limits of silicon computers because we cannot pack more transistors into a tiny chip. But the brain is wired completely differently. It has about 100bn neurons linked through over 10^15 connection points. It’s an enormous power difference compared to our current technology.”
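To put those two figures side by side, the sketch below divides the quoted number of connection points by the quoted number of neurons. It reads the connection count as 10 to the power of 15 and treats both figures as round estimates; the variable and function names are illustrative only.

```python
# Rough ratio of connection points to neurons, using the quoted estimates.
NEURONS = 100 * 10**9    # "about 100bn neurons" (from the quote)
CONNECTIONS = 10**15     # "over 10^15 connection points" (from the quote)


def connections_per_neuron(neurons=NEURONS, connections=CONNECTIONS):
    """Average number of connection points per neuron (integer division)."""
    return connections // neurons


print(f"{connections_per_neuron():,}")  # 10,000
```

On these round numbers, each neuron averages on the order of 10,000 connection points.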

What Would Organoid Intelligence Biocomputers Look Like?

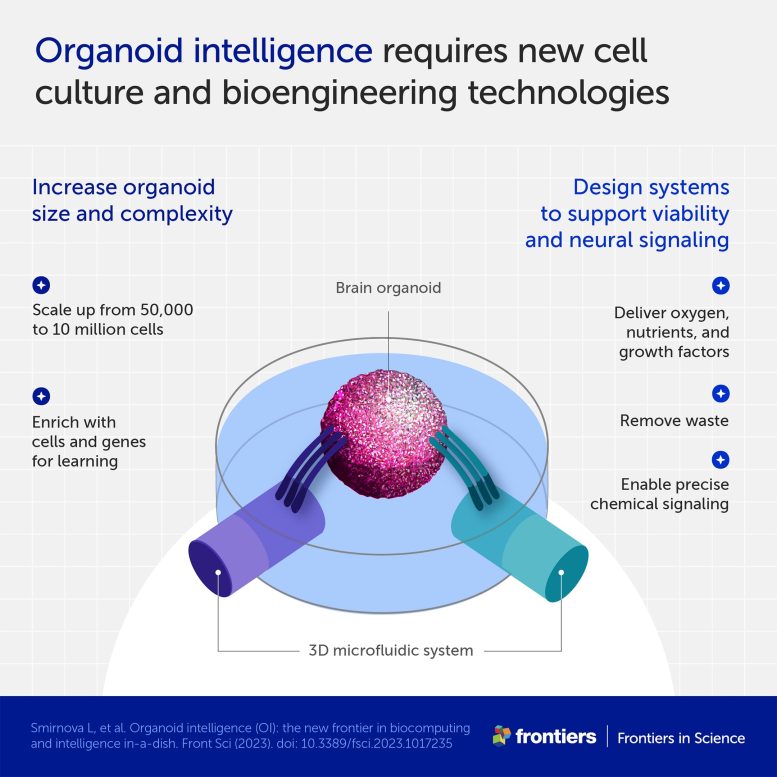

According to Hartung, current brain organoids need to be scaled up for OI. “They are too small, each containing about 50,000 cells. For OI, we would need to increase this number to 10 million,” he explained.

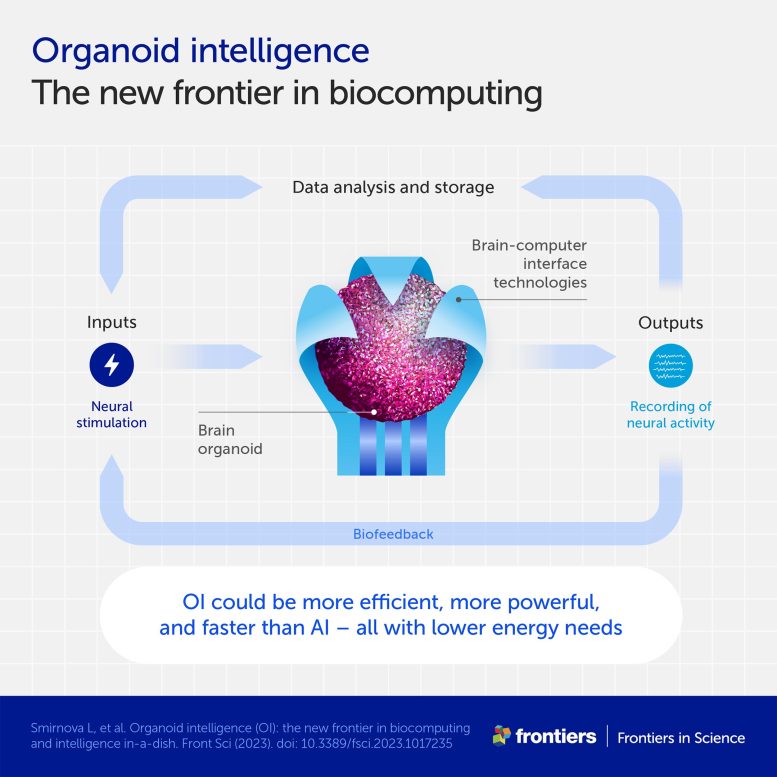

In parallel, the authors are also developing technologies to communicate with the organoids: in other words, to send them information and read out what they’re ‘thinking’. The authors plan to adapt tools from various scientific disciplines, such as bioengineering and machine learning, as well as engineer new stimulation and recording devices.

“We developed a brain-computer interface device that is a kind of an EEG cap for organoids, which we presented in an article published last August. It is a flexible shell that is densely covered with tiny electrodes that can both pick up signals from the organoid, and transmit signals to it,” said Hartung.

The authors envision that eventually, OI would integrate a wide range of stimulation and recording tools. These will orchestrate interactions across networks of interconnected organoids that implement more complex computations.

Organoid Intelligence Could Help Prevent and Treat Neurological Conditions

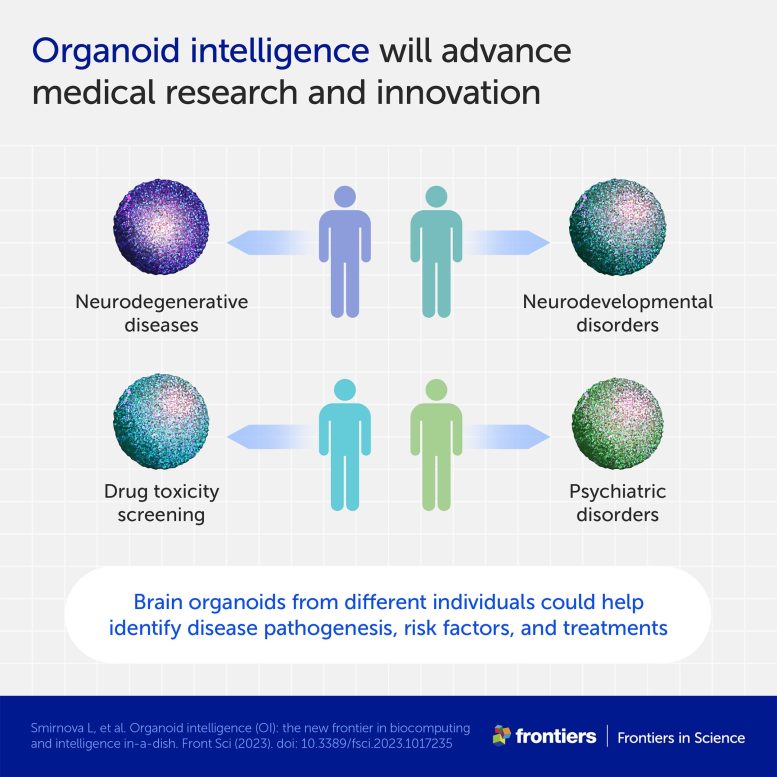

OI’s promise goes beyond computing and into medicine. Thanks to a groundbreaking technique developed by Nobel Laureates John Gurdon and Shinya Yamanaka, brain organoids can be produced from adult tissues. This means that scientists can develop personalized brain organoids from skin samples of patients suffering from neural disorders, such as Alzheimer’s disease. They can then run multiple tests to investigate how genetic factors, medicines, and toxins influence these conditions.

“With OI, we could study the cognitive aspects of neurological conditions as well,” Hartung said. “For example, we could compare memory formation in organoids derived from healthy people and from Alzheimer’s patients, and try to repair relative deficits. We could also use OI to test whether certain substances, such as pesticides, cause memory or learning problems.”

Taking Ethical Considerations Into Account

Creating human brain organoids that can learn, remember, and interact with their environment raises complex ethical questions. For example, could they develop consciousness, even in a rudimentary form? Could they experience pain or suffering? And what rights would people have concerning brain organoids made from their cells?

The authors are acutely aware of these issues. “A key part of our vision is to develop OI in an ethical and socially responsible manner,” Hartung said. “For this reason, we have partnered with ethicists from the very beginning to establish an ‘embedded ethics’ approach. All ethical issues will be continuously assessed by teams made up of scientists, ethicists, and the public, as the research evolves.”

How Far Are We From the First Organoid Intelligence?

Even though OI is still in its infancy, a recently published study by one of the article’s co-authors – Dr. Brett Kagan of Cortical Labs – provides proof of concept. His team showed that a normal, flat brain cell culture can learn to play the video game Pong.

“Their team is already testing this with brain organoids,” Hartung added. “And I would say that replicating this experiment with organoids already fulfills the basic definition of OI. From here on, it’s just a matter of building the community, the tools, and the technologies to realize OI’s full potential,” he concluded.

Reference: “Organoid intelligence (OI): the new frontier in biocomputing and intelligence-in-a-dish” by Lena Smirnova, Brian S. Caffo, David H. Gracias, Qi Huang, Itzy E. Morales Pantoja, Bohao Tang, Donald J. Zack, Cynthia A. Berlinicke, J. Lomax Boyd, Timothy D. Harris, Erik C. Johnson, Brett J. Kagan, Jeffrey Kahn, Alysson R. Muotri, Barton L. Paulhamus, Jens C. Schwamborn, Jesse Plotkin, Alexander S. Szalay, Joshua T. Vogelstein, Paul F. Worley and Thomas Hartung, 27 February 2023, Frontiers in Science.

DOI: 10.3389/fsci.2023.1017235

11 Comments

… it was obvious this was on the way!

… Now, we might even figure out the Neanderthal brain inner workings…

This article is of extremely poor quality. I have not yet read the referenced publication.

Article claim: human brains are better at learning than computers

Article argument: Go AI beat the human world champion in 2017. A human would require 5 hours per day for 175 years to get the same amount of training done.

Counterargument: There is no fundamental guarantee that a human would learn more, e.g. become a better player, from 175 years of practise, and the article presents absolutely no data to support that.

Conclusion: A human is hundreds of times less efficient at practising, e.g. getting experience to learn from. The article actually makes a case for computers being more efficient learners in terms of both time and results.

Article claim: The human brain is more energy efficient than a computer.

Article argument: The energy required to train the Go AI could sustain the human brain for decades.

Remark: The article claim compares brain to computer. The article argument compares human to computer. There is a big difference between a human and a brain. In relation to this claim, running a brain without the surrounding human would obviously be more energy efficient.

Conclusion: The energy spent training the Go AI would sustain a human for decades, but the human would need centuries to get the same training done. The human would need an order of magnitude more energy for the same amount of training, and there is still no data to indicate if this amount of training would suffice to perform at the level of the AI. However, if it is possible to run the human brain using a tenth of the energy required to run a full human being, the brain’s energy requirements for learning may be similar to that of a computer, assuming the organic brain can continue to operate at peak efficiency for 175 years.

Correction: The article argument citation should be “energy (…) could sustain a human for decades”, not “energy (…) could sustain the human brain for decades”

Johan, while I share a small amount of your skepticism about this article, your attempts to pick apart the ‘article claims’ reveal that you know virtually nothing about neuroscience, modern computer intelligence including systems like Alpha Go, or much of anything else you talk about here. So let’s take a second look:

1. With respect to Alpha Go, you find that “A human is hundreds of times less efficient at practising, e.g. getting experience to learn from. The article actually makes a case for computers being more efficient learners in terms of both time and results.”

This is insipid and inaccurate for a number of reasons. The foremost of these is that the Alpha Go system is not ‘learning the game’ in a way remotely similar to a human player: for one thing it relies heavily on brute numerical tree search algorithms and to a lesser degree hardcoded heuristic game-specific templates to interpret the state of the board, alongside biology-inspired deep neural networks. Strategic turn-based games with discrete board states are inherently amenable to computer agents for the same reason that a database, Excel spreadsheet, or even loose sheets of graph paper are better at accurately storing an array of ten-digit numbers with twenty columns and fifty thousand rows than a human trying to memorize them all. Well done, your pop-sci-tier comparison neatly sidestepped the important lesson that…

2. Organic computing systems and Von Neumann computers are inherently better at different types of tasks.

Using your own example above, let’s think about Go. Presuming the Alpha Go team had twenty people on it that worked around 50 hours a week for five years, just the development time alone took about 260,000 hours or almost thirty cumulative people-years. It was then trained for an estimated 175 people-years, so just over 200 people-years total. It’s entirely likely 200 years of practice would not allow a human to beat Alpha Go – but let’s consider another metric: in the amount of time it would take to teach a human how to play Go, would Alpha Go be able to beat them? Organic intelligence is very *very* good at one-shot learning, adaptive and flexible problem solving, and associatively mapping solutions from one problem space to another. No existing computer intelligence comes even remotely close in those domains any more than any neural intelligence comes close to how much “””memorization””” a forty-year-old computer can do.

I could teach a ten year old how to play Go, Shogi, Chess, Settlers of Catan, Dungeons and Dragons, Kickball, Baseball, Tennis, and CS:GO in under a year. Would they be exceptional at any of those things? Probably not. Could I teach Alpha Go (or even an actual modern AI system) that many distinct complex tasks in that period of time? No, absolutely not. Humans are fantastically more time efficient at learning complex tasks than even the best neural networks that exist right now, not to mention much more adaptable and able to learn additional tasks trivially – your claim that “computers being more efficient learners [than humans] in terms of both time and results” is comprehensively wrong in almost every way.

3. You don’t even seem to believe your own speculation about how “the brain’s energy requirements for learning may be similar to that of a computer, assuming the organic brain can continue to operate at peak efficiency for 175 years”, but it is as misinformed as all of your other statements. Human brains require about 3 watts of power, whereas a single A100 GPU (NVIDIA’s current flagship AI card) draws about 350 watts. By coincidence, it would take a single V100 (the previous generation card) around 350 YEARS to train the current media darling AI model GPT3. So again, very wrong.

In summary, you do not appear to have a clear understanding of how current AI functions, what its limitations are, how organic neural intelligence functions, or anything about the differences between them. Consider actually reading primary sources and constructing sensible arguments next time you post.

1. Well that was a well-earned ¡Ouch!

2. But more importantly: Thank you for responding so comprehensively in the face of Brandolini’s Law. No indication if JM appreciated it, but many others out here do.

A-ha! You want to tune me, and train a schizophrenic anthena to move a babbler! You wouldn’t leave it in the streets wandering as scryer… would you?

This is a terrible idea. It doesn’t matter how “embedded” the ethics chain is. The more neural cells you pack together, the more likely you’ll get an actual human brain. Human brains are human and have human thought.

I love the science part of this, but this is mad science and shouldn’t happen.

HELLO SCIENTIFIC FRIENDS *

It would be wonderful if in the future, artificial intelligence could analyze without inputting information and data.

******** GOOD LUCK *******