When it comes to measuring how fast the Universe is expanding, the result depends on which side of the Universe you start from. An EPFL study has calibrated the best cosmic yardsticks to unprecedented accuracy, shedding new light on the Hubble tension.

The Hubble tension, a discrepancy in the cosmic expansion rate (H0) between early Universe and late Universe measurement methods, has puzzled astrophysicists and cosmologists. A study by the Stellar Standard Candles and Distances research group at EPFL’s Institute of Physics has achieved the most accurate calibration of Cepheid stars for distance measurements, amplifying the Hubble tension. The discrepancy calls into question the basic concepts of physics and has implications for the nature of dark energy, the space-time continuum, and gravity.

The Universe is expanding – but how fast exactly? The answer appears to depend on whether you estimate the cosmic expansion rate – referred to as the Hubble constant, or H0 – based on the echo of the Big Bang (the cosmic microwave background, or CMB) or measure H0 directly based on today’s stars and galaxies. This problem, known as the Hubble tension, has puzzled astrophysicists and cosmologists around the world.

A study carried out by the Stellar Standard Candles and Distances research group, led by Richard Anderson at EPFL’s Institute of Physics, adds a new piece to the puzzle. Their research, published today (April 4) in the journal Astronomy & Astrophysics, used data collected by the European Space Agency’s (ESA’s) Gaia mission to achieve the most accurate calibration to date of Cepheid stars – a type of variable star whose luminosity fluctuates over a defined period – for distance measurements. This new calibration further amplifies the Hubble tension.

The Hubble constant (H0) is named after Edwin Hubble, the astrophysicist who, together with Georges Lemaître, discovered the expansion of the Universe in the late 1920s. It’s measured in kilometers per second per megaparsec (km/s/Mpc), where 1 Mpc is around 3.26 million light years.
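To make the unit concrete, here is a minimal sketch of what a value in km/s/Mpc means. It assumes the standard Hubble law, v = H0 × d, which holds only as an approximation for relatively nearby galaxies. The function names and the 100 Mpc example distance are illustrative choices, not taken from the study.

```python
# Illustrative only: Hubble's law v = H0 * d, a good approximation
# for relatively nearby galaxies.

LIGHT_YEARS_PER_MPC = 3.26e6  # rounded value, as quoted in the article


def recession_velocity_km_s(h0_km_s_mpc: float, distance_mpc: float) -> float:
    """Apparent recession velocity (km/s) of a galaxy at the given distance."""
    return h0_km_s_mpc * distance_mpc


def mpc_to_light_years(distance_mpc: float) -> float:
    """Convert megaparsecs to light years using the rounded factor above."""
    return distance_mpc * LIGHT_YEARS_PER_MPC


if __name__ == "__main__":
    d = 100.0  # hypothetical distance in Mpc
    for h0 in (73.0, 67.4):
        v = recession_velocity_km_s(h0, d)
        print(f"H0 = {h0} km/s/Mpc -> v = {v:.0f} km/s at {d:.0f} Mpc")
```

For a galaxy 100 Mpc away, the two H0 values discussed in this article would imply recession velocities that differ by several hundred km/s.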

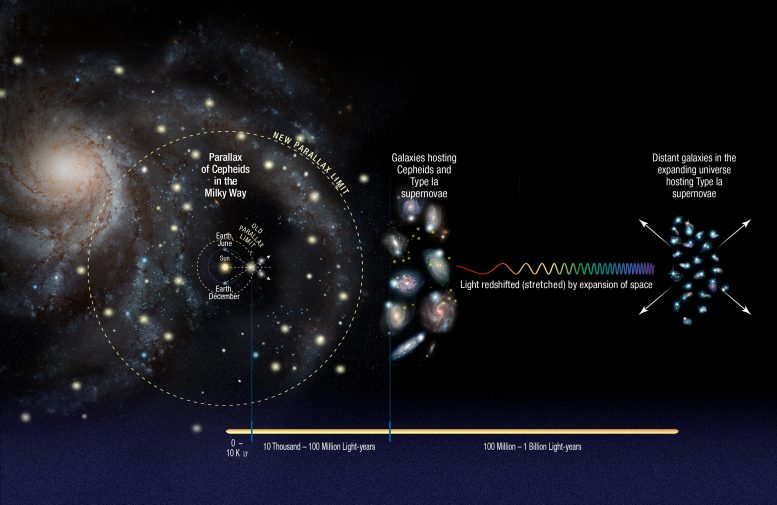

The best direct measurement of H0 uses a “cosmic distance ladder,” whose first rung is set by the absolute calibration of the brightness of Cepheids, now recalibrated by the EPFL study. In turn, Cepheids calibrate the next rung of the ladder, where supernovae – powerful explosions of stars at the end of their lives – trace the expansion of space itself. This distance ladder, measured by the Supernovae, H0, for the Equation of State of dark energy (SH0ES) team led by Adam Riess, winner of the 2011 Nobel Prize in Physics, puts H0 at 73.0 ± 1.0 km/s/Mpc.

First Radiation After the Big Bang

H0 can also be determined by interpreting the CMB, the ubiquitous microwave radiation left over from the Big Bang more than 13 billion years ago. However, this “early Universe” method relies on a detailed physical model of how the Universe evolves, which makes the result model-dependent. The ESA’s Planck satellite has provided the most complete data on the CMB, and according to this method, H0 is 67.4 ± 0.5 km/s/Mpc.

The Hubble tension refers to this discrepancy of 5.6 km/s/Mpc, depending on whether the CMB (early Universe) method or the distance ladder (late Universe) method is used. The implication, provided that the measurements performed in both methods are correct, is that there is something wrong in the understanding of the basic physical laws that govern the Universe. Naturally, this major issue underscores how essential it is for astrophysicists’ methods to be reliable.
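The size of the gap can be checked with simple arithmetic. The sketch below takes the two values quoted above and, as a simplifying assumption, treats their uncertainties as independent and Gaussian so they can be added in quadrature. The published significance may be derived differently, and the function name is illustrative.

```python
import math


def hubble_tension(h0_late, err_late, h0_early, err_early):
    """Return (difference, significance in sigma) between two H0 values.

    Assumes independent Gaussian uncertainties combined in quadrature,
    which is a simplification of how the real analyses treat errors.
    """
    difference = h0_late - h0_early
    combined_error = math.hypot(err_late, err_early)
    return difference, difference / combined_error


if __name__ == "__main__":
    # Distance ladder (SH0ES) vs. CMB (Planck), values from the article.
    diff, sigma = hubble_tension(73.0, 1.0, 67.4, 0.5)
    print(f"difference = {diff:.1f} km/s/Mpc, about {sigma:.1f} sigma")
```

Under these assumptions the 5.6 km/s/Mpc gap corresponds to roughly five standard deviations, which is why it is hard to dismiss as a statistical fluctuation.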

The new EPFL study is so important because it strengthens the first rung of the distance ladder by improving the calibration of Cepheids as distance tracers. Indeed, the new calibration allows us to measure astronomical distances to within ± 0.9%, and this lends strong support to the late Universe measurement. Additionally, the results obtained at EPFL, in collaboration with the SH0ES team, helped to refine the H0 measurement, resulting in improved precision and an increased significance of the Hubble tension.

“Our study confirms the 73 km/s/Mpc expansion rate, but more importantly, it also provides the most precise, reliable calibrations of Cepheids as tools to measure distances to date,” says Anderson. “We developed a method that searched for Cepheids belonging to star clusters made up of several hundreds of stars by testing whether stars are moving together through the Milky Way. Thanks to this trick, we could take advantage of the best knowledge of Gaia’s parallax measurements while benefiting from the gain in precision provided by the many cluster member stars. This has allowed us to push the accuracy of Gaia parallaxes to their limit and provides the firmest basis on which the distance ladder can be rested.”
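The gain from using cluster members can be illustrated with a deliberately idealized sketch. It assumes every star in a cluster has the same, statistically independent parallax error, so the error on the cluster average shrinks as 1/√N. Real Gaia parallaxes also carry correlated systematic errors that averaging does not remove, and the study's actual procedure is not reproduced here. All numbers and function names below are hypothetical.

```python
import math


def mean_parallax_error_mas(per_star_error_mas: float, n_stars: int) -> float:
    """Error on the average parallax of n_stars cluster members.

    Idealized: assumes equal, independent errors for every star.
    """
    return per_star_error_mas / math.sqrt(n_stars)


def distance_pc(parallax_mas: float) -> float:
    """Distance in parsecs from a parallax in milliarcseconds (d = 1/p)."""
    return 1000.0 / parallax_mas


if __name__ == "__main__":
    per_star_error = 0.02  # hypothetical per-star error, in mas
    for n in (1, 100, 400):
        err = mean_parallax_error_mas(per_star_error, n)
        print(f"{n:4d} stars -> error on mean parallax = {err:.4f} mas")
    print(f"parallax 0.5 mas -> {distance_pc(0.5):.0f} pc")
```

In this toy picture, a Cepheid that shares its distance with a few hundred cluster members inherits a parallax far more precise than it could have on its own.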

Rethinking Basic Concepts

Why does a difference of just a few km/s/Mpc matter, given the vast scale of the Universe? “This discrepancy has a huge significance,” says Anderson. “Suppose you wanted to build a tunnel by digging into two opposite sides of a mountain. If you’ve understood the type of rock correctly and if your calculations are correct, then the two holes you’re digging will meet in the center. But if they don’t, that means you’ve made a mistake – either your calculations are wrong or you’re wrong about the type of rock. That’s what’s going on with the Hubble constant. The more confirmation we get that our calculations are accurate, the more we can conclude that the discrepancy means our understanding of the Universe is mistaken, that the Universe isn’t quite as we thought.”

The discrepancy has many other implications. It calls into question the very fundamentals, like the exact nature of dark energy, the space-time continuum, and gravity. “It means we have to rethink the basic concepts that form the foundation of our overall understanding of physics,” says Anderson.

His research group’s study makes an important contribution in other areas, too. “Because our measurements are so precise, they give us insight into the geometry of the Milky Way,” says Mauricio Cruz Reyes, a PhD student in Anderson’s research group and lead author of the study. “The highly accurate calibration we developed will let us better determine the Milky Way’s size and shape as a flat-disk galaxy and its distance from other galaxies, for example. Our work also confirmed the reliability of the Gaia data by comparing them with those taken from other telescopes.”

Reference: “A 0.9% calibration of the Galactic Cepheid luminosity scale based on Gaia DR3 data of open clusters and Cepheids” by Mauricio Cruz Reyes and Richard I. Anderson, 4 April 2023, Astronomy and Astrophysics.

DOI: 10.1051/0004-6361/202244775

This project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation program (grant agreement No 947660).

RIA is funded by the SNSF through an Eccellenza Professorial Fellowship, grant number PCEFP2_194638.


5 Comments

These measurements are interesting and possibly useful, but as far as Dark Energy is concerned, they’re missing the point.

Another way to explain Dark Energy is suggested by String Theory. All matter and energy, including photons (light), have vibrating strings as their basis.

String and anti-string pairs are speculated to be created in the quantum foam, a roiling energy field suggested by quantum mechanics, and they immediately annihilate each other. If light passes near these string/anti-string annihilations, perhaps some of that annihilation energy is absorbed by the string in the light. Then the Fraunhofer lines in that light will move a bit towards the blue and away from the red shift. As this continues in an expanding universe we get the same curve displayed by Perlmutter and colleagues at their Nobel Prize lecture, without the need for Dark Energy.

This speculation has the universe behaving in a much more direct way. Specifics on this can be found by searching YouTube for “Dark Energy – a String Theory Way”

The more measurements get corrected (the size of a proton without the field energy scalar cloud), the better we understand that the quantum is very elusive. I would like to do an experiment that I think has never been done: on the next Artemis space flight, take samples of the space vacuum in three different areas, first a sample from close to Earth, then a sample from half the distance to the Moon, then a third from Moon orbit. The failsafe of the collection would be that two chances are available, on the way there and on the way back. These samples could be an eye opener. I don’t think we have sampled the space vacuum and its properties; you could use a sample container that would hold the true value of vacuum space. Maybe a clue to dark matter.

The discrepancy is caused by a fact that astronomers do not recognize: the Universe is NOT expanding AT ALL. Red Shift is the WORST MISTAKE EVER MADE, akin to “Flat Earth Theory” in the magnitude of its error. It was based on the early 20th Century ASSUMPTION that space is EMPTY. Of course, we now know that it is NOT EMPTY AT ALL, but contains dust, gas and other elements. There was an alternate explanation for Red Shift, and that was that light dims over vast distances and thus appears to shift red from our vantage point. That doesn’t mean it’s moving away from us! It means it’s DIMMING and that dimming can be caused by the matter floating in the void between us and the target.

Since ALL our “distance” observations are BASED ON RED SHIFT, that means we are not only NOT EXPANDING or ACCELERATING, but in fact, we are measuring DISTANCES WRONGLY AS WELL!!!! That galaxy we thought was 2 Billion light years away may in fact only be 2000 light years away if there’s substantial dust between us and it, causing excessive red shift that these people then interpret as far away and even accelerating away from us. It also means distortions in gravity are caused by the same and that there is NO DARK MATTER or DARK ENERGY!!!! (which is of course why we can’t detect either one since they do NOT exist!)

The problem is a human one. We do not like to admit we are wrong and demand far more proof to disprove something than to assume it exists. Dark Matter and Dark Energy are both MATH KLUDGES designed to “fix” and “explain” measurement errors when the error is due to nonsensical assumptions about the cause of Red Shift. There is a sort of “dark matter”: it’s dust that isn’t being picked up directly due to its size and distance across vast tracts of space, but IS causing dimming. We then think the object is further away than it really is, causing gross distortions in our measurements and our assumptions, and a disconnect between some galaxies that appear to have “large amounts of dark matter” and those that “have none” (no dust between us and them).

This is so fundamentally basic and so simple, and it is historically documented that the dimming explanation was rejected in a time period when space was assumed to be empty. But it’s now so INGRAINED that it’s almost like a religion, and anyone who contradicts the edict should be “excommunicated” (we call that “canceled” today). The same problems exist in other fields, such as Alzheimer’s research (the gospel is amyloid plaques and nothing else shall even be considered, let alone funded, despite no major real progress along those lines). Until humans recognize that true science means considering the fact their assumptions and beliefs can be WRONG, we’ll keep wasting time looking for something that DOES NOT EXIST.

Of course! The problem is that scientists disagree with [ME, who alone knows the truth]!

Proper time from coordinate time versus the obverse? Creation and destruction of test particles not in the model? Perhaps:

proper time = ( possibility->fact , proper time )

When the test particle runs out of possibilities it ceases to exist, after which the stream of facts in which it’s involved stops.

Would this eliminate all the empty proper times no longer attached to particles?