Much of what biologists have learned about animal behavior over the years has come from careful observation and painstaking notes. There could soon be an easier way.

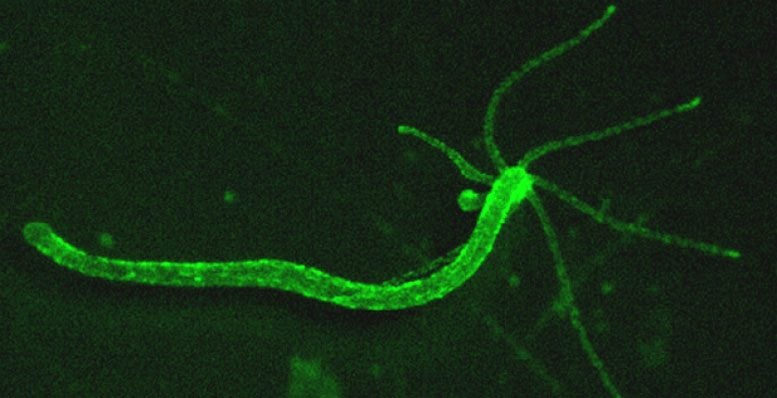

In a new study in the journal eLife, researchers at Columbia University show how an algorithm of the kind used to filter spam email can learn to pick out, from hours of video footage, the full behavioral repertoire of a tiny, pond-dwelling Hydra. A close relative of coral, jellies, and sea anemones, Hydra is so primitive that it lacks a backbone or brain. But when it moves, feeds, and evades predators, it behaves in predictable ways that a computer can recognize.

By comparing Hydra’s behaviors to the firing of its neurons, the researchers hope to eventually understand how its nervous system, and that of more complex animals, works. “People have used machine learning algorithms to partly analyze how a fruit fly flies, and how a worm crawls, but this is the first systematic description of an animal’s behavior,” said the study’s senior author, Rafael Yuste, a neuroscientist at Columbia University and a member of Columbia’s Data Science Institute. “Now that we can measure the entirety of Hydra’s behavior in real-time, we can see if it can learn, and if so, how its neurons respond.”

When researchers manipulated Hydra’s environmental conditions, they found that six common behaviors, captured in the above movie, hardly changed at all. (Yuste Lab/Columbia University).

Hydra’s ancestors appeared on Earth some 700 million years ago, before the Cambrian explosion that gave rise to most modern species. Instead of a brain, hundreds of neurons run along its narrow, translucent body, coordinating behaviors that range from the basic, such as curling into a ball to avoid predators, to the sophisticated, such as somersaulting to get around.

In an earlier study in Current Biology, Yuste and his colleagues recorded the firing of all of Hydra’s neurons in real time and discovered four sets of neural circuits that control four distinct elongation and bending behaviors, paving the way to understanding how Hydra’s nervous system regulates its behavior.

In the current study, the team went a step further by attempting to catalog Hydra’s complete set of behaviors. To do so, they applied the popular “bag of words” classification algorithm to hours of footage tracking Hydra’s every move. Just as the algorithm counts how often words appear in a body of text to pick out its topics (or, for example, to flag patterns resembling spam), it cycled through the Hydra video and identified recurring movements.
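The bag-of-words idea can be illustrated with a toy sketch in Python. It is not the study’s pipeline: the article does not say which motion features, vocabulary size, or classifier the Columbia team used. Here the “words” are assumed to be hypothetical 2-D motion descriptors snapped to a small, hand-picked codebook (a real system would typically learn the codebook, for example with k-means clustering). Each clip becomes a histogram of word counts, and a new clip receives the label of the nearest labeled histogram. The behavior labels and numbers are made up for illustration.

```python
import math
from collections import Counter

# Hypothetical "visual vocabulary": in practice these codewords would be learned
# (e.g. by k-means) from motion descriptors; here they are hand-picked 2-D points.
CODEBOOK = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]


def nearest_codeword(descriptor, codebook=CODEBOOK):
    """Return the index of the codeword closest (Euclidean distance) to a descriptor."""
    return min(range(len(codebook)),
               key=lambda i: math.dist(descriptor, codebook[i]))


def bag_of_words(descriptors, codebook=CODEBOOK):
    """Map each descriptor to its nearest 'word' and return normalized word frequencies."""
    counts = Counter(nearest_codeword(d, codebook) for d in descriptors)
    total = sum(counts.values())
    return [counts[i] / total for i in range(len(codebook))]


def classify(histogram, labeled_histograms):
    """Nearest-neighbor classification: return the label whose histogram is closest."""
    return min(labeled_histograms,
               key=lambda label: math.dist(histogram, labeled_histograms[label]))


# Hypothetical labeled training clips (behavior names are illustrative only).
training = {
    "elongation": bag_of_words([(0.9, 0.1), (1.1, 0.0), (0.8, -0.1), (0.1, 0.0)]),
    "contraction": bag_of_words([(0.0, 0.9), (0.1, 1.2), (-0.1, 0.8), (0.0, 0.1)]),
}

new_clip = [(1.0, 0.2), (0.9, -0.1), (0.05, 0.05)]
print(classify(bag_of_words(new_clip), training))  # → elongation
```

Because the histogram keeps only how often each movement occurs and discards when it occurs, it plays the same role as word counts in a spam filter that ignores word order.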

Their algorithm recognized 10 previously described behaviors and measured how six of those behaviors responded to varying environmental conditions. To the researchers’ surprise, Hydra’s behavior barely changed. “Whether you fed it or not, turned the light on or off, it did the same thing over and over again like an Energizer bunny,” said Yuste.

The researchers think Hydra may have evolved a way of adjusting to its environment as if on autopilot. They are now experimenting with other stimuli to see if Hydra will respond and learn. Eventually, they hope to crack its neural code with a model that shows how its networks of neurons create behavior.

Lessons learned from Hydra may also be useful to control engineering, the branch of engineering concerned with maintaining stability and precise control in machines, from ships to planes, that must operate in highly variable conditions.

The nervous systems of even simple animals like Hydra have evolved to maintain constancy in their behaviors, said Yuste. If engineers could unlock their secret, technology could be infused with biologically inspired controls that have evolved over hundreds of millions of years.

“Reverse engineering Hydra has the potential to teach us so many things,” said the study’s lead author, Shuting Han, a graduate student at Columbia. The study’s other authors, Ekaterina Taralova and Christophe Dupré, both formerly in Yuste’s lab, are now at the startup Zoox Inc. and at Harvard.

Reference: “Comprehensive machine learning analysis of Hydra behavior reveals a stable basal behavioral repertoire” by Shuting Han, Ekaterina Taralova, Christophe Dupre and Rafael Yuste, 28 March 2018, eLife.

DOI: 10.7554/eLife.32605
